Regular readers will likely wonder what more I could have to say about machine learning (ML) in search, after having written How Machine Learning In Search Works just a few months ago.

Let me assure you, this article is different.

Today you won’t be reading the ramblings of an SEO professional who fancies himself reasonably informed about how machine learning works as it relates to search.

Instead, we’ll be turning the tables and learning about search implementations from the perspective of a machine learning expert.

This article outlines, and hopefully expands on, some of the core concepts discussed in an amazing interview between fellow Search Engine Journal contributor Jason Barnard and Dan Fagella of Emerj.

For context, Fagella has addressed the UN on deepfakes.

And Emerj, as a company, is an Artificial Intelligence (AI) research firm that helps organizations:

- Gain a strategic advantage with AI.

- Pick high-ROI AI projects.

- Support strategic AI initiatives.

Basically, I’ll be covering a video interview between a great interviewer and a very smart guy who runs a company focused on helping companies make money from AI.

(Companies like Google, Bing, Amazon, Facebook, etc.)

Before we begin, you may want to watch the video first or you may want to watch it at the end.

I hope this article will stand on its own, but watching the interview will certainly add benefit and context.

So, to get you started, here’s the video filled with amazing info, some decent humor, and a person named …

The Interview: Machine Learning & AI in Search

The Article Format

I am going to keep things in this article mainly following the order of the interview, discussing specific points as they were discussed.

If you have multiple monitors, you can follow along.

I should clarify that the quotes below are my “cleaned up” versions of what was said in the interview.

This is for brevity.

My Quick Aside to the Interviewer

Barnard begins the interview by saying of machine learning in search, “It’s only one of thousands, hundreds of thousands of uses of AI and not necessarily the most interesting.”

Jason … when we’re all allowed to travel again and I see you at a conference, I have a bone to pick with you.

It’s definitely the most interesting use of machine learning.

Finding alien life, curing disease… these are just peripheral niceties. 😉

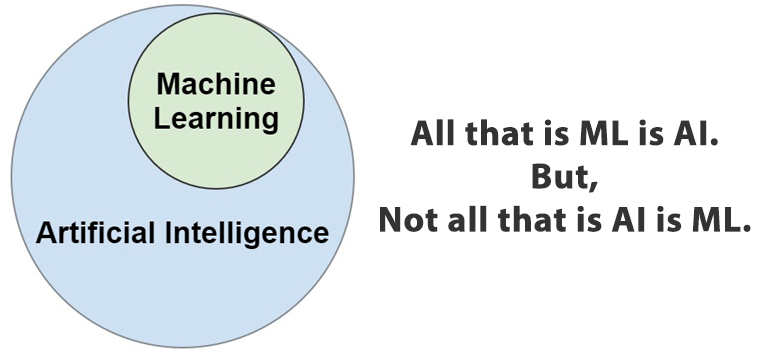

What Is the Difference Between Machine Learning & Artificial Intelligence?

Let’s begin by differentiating between machine learning and artificial intelligence.

According to Dan Fagella:

“Artificial intelligence is a broader umbrella than ML.

So, ML is normally seen as a subset of artificial intelligence.

Artificial intelligence is anytime we can get a computer to do something that otherwise we would have needed a human to do.

Very ephemeral, very tough to pin down because as soon as we understand it, we won’t call it AI anymore.”

In short, we see AI when we see computers doing things that normally would require the flexibility of a human’s brain.

And machine learning is just one part of that.

According to the Emerj website:

Artificial Intelligence Is…

“Artificial intelligence is an entity (or collective set of cooperative entities), able to receive inputs from the environment, interpret and learn from such inputs, and exhibit related and flexible behaviors and actions that help the entity achieve a particular goal or objective over a period of time.”

Machine Learning Is…

“Machine Learning is the science of getting computers to learn and act like humans do, and improve their learning over time in autonomous fashion, by feeding them data and information in the form of observations and real-world interactions.”

So basically, AI covers a broader spectrum of systems designed to replace us, while machine learning does so in one very specific way: by learning from data.

Does Understanding Machine Learning Help SEO Pros?

Before we dive into this, let me first note that what we’re talking about here is whether machine learning knowledge can be directly applied to SEO.

Not whether great SEO tools can be built with it, etc.

You can imagine my reaction, while listening to the interview, when Dan (a man I have a great deal of respect for) said something so incredibly “wrong”: that understanding machine learning is not a useful pastime for SEO professionals.

Turns out though, he wasn’t wrong.

I had to acknowledge that when he went on to clarify something incredibly important: it isn’t a silver bullet.

Understanding machine learning does not help you understand ranking signals.

It simply helps you understand the system in which the ranking signals are weighed and measured.

Knowing the system does not mean you will naturally be the victorious knight, Sir Ulrich Von Lichtenstein.

Even knowing the system, you may find yourself, as Count Adhemar did, flat on your back.

Measuring Successful AI

So, how does the system work?

How is success measured?

Fagella starts with a great analogy.

He describes a scenario such as Microsoft rolling Bing out in Malaysia, a case where the company is bootstrapping a search engine.

Note: Bootstrapping, in this context, refers to the initialization of a system and not starting a business with nothing.

Nor is it the statistical technique of estimating how reliable a statistic is by repeatedly resampling, with replacement, from the data you already have.

He mentions pulling in a group of native speakers as an initial training group.

They will rate the results that the system is initialized with (presumably pre-launch), and the system will learn from them, as will the engineers.

Once the system is satisfactory (often simply the point at which it is superior to the existing results), it would be deployed.

The training group would then be reduced to the number needed to keep the system valid and to improve it in specific areas of interest.

For lack of a better term, let’s just call them “Quality Raters.”
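To make the bootstrapping step a little more concrete, here is a minimal Python sketch of the “deploy once it beats what’s there” check. It assumes raters grade each query’s results on a hypothetical 0 to 4 scale. The function names, query IDs, and grades are illustrative only, not anything Bing or Fagella described.

```python
from statistics import mean

# Hypothetical rater grades (0 = useless, 4 = perfect), several raters per query.
# Keys are placeholder query IDs; none of this data comes from the interview.
RaterGrades = dict[str, list[int]]


def average_grade(grades: RaterGrades) -> float:
    """Mean grade across every rating of every query."""
    return mean(g for per_query in grades.values() for g in per_query)


def ready_to_deploy(candidate: RaterGrades, existing: RaterGrades,
                    margin: float = 0.0) -> bool:
    """True once raters score the candidate system above the existing results."""
    return average_grade(candidate) > average_grade(existing) + margin


existing = {"q1": [2, 3, 2], "q2": [3, 2, 2]}
first_try = {"q1": [2, 2, 1], "q2": [2, 2, 2]}
after_tuning = {"q1": [3, 3, 3], "q2": [3, 2, 3]}

print(ready_to_deploy(first_try, existing))     # False: keep training
print(ready_to_deploy(after_tuning, existing))  # True: good enough to launch
```

The `margin` parameter is there in case you want the new system to win by more than a hair before launch. Picking it is a judgment call, not something from the interview.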

E-A-T in Machine Learning

Barnard brings up an important point in the interview that I want to draw readers’ attention to.

He says:

“A great example is E-A-T or expertise, authority and trust.

Google says, ‘Is this site authoritative? Is the person or company expert, and can we trust them?’

And that’s a big part of the Quality Raters Guidelines.

So there’s no real way for us to say what the specific factors are.

But we can say that the algorithm is being trained to respect the feedback, both from users and from the Quality Raters, on what they perceive to be E-A-T.

So, we don’t know what the factors are, but we can say this is what people perceive to be E-A-T.

And that’s what we should be focusing on, because that’s where the machine will take the results.”

An Aside About Machine Learning & the ‘Living Breathing System’

One aspect of how machine learning works, or at least how supervised learning works (which is what we’re generally dealing with here), relates directly to what Jason and Dan talk about and deserves clarification.

In this scenario, the machine learning system would not simply be a static algorithm, trained and then deployed in a final form.

Rather, it would be pre-trained before deployment (e.g., during the bootstrapping stage mentioned above).

And then it would continuously check itself and adjust by comparing its results with the desired end goal and with its previous successes and failures.

When a search engine first introduces a machine learning system, there will be a starting set of “known good” queries and results (queries with a known set of results that satisfied users).

And the algorithm(s) will be trained on that.

It’ll then be given queries without the “known good” result to produce its own “guess.”

And then produce a success score based on the then revealed “known good.”

The system will continue to do this, getting closer and closer to the ideal.

Assigning a value to its accuracy and adjusting for the next attempt.

Always striving to get closer and closer to the “known good.”
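The loop described above (guess, compare against the revealed “known good,” score, adjust) is essentially how a supervised model trains. Here is a minimal Python sketch using plain logistic regression as a stand-in. The two features, the data, and the learning rate are all assumptions for illustration; a real search engine would use far richer models and far more data.

```python
import math

# Each example: (features for a query/result pair, label).
# label 1 = result is in the "known good" set for that query, 0 = it isn't.
# The two hypothetical features might be something like [text_match, link_score].
Example = tuple[list[float], int]


def predict(weights: list[float], bias: float, features: list[float]) -> float:
    """The model's guess: probability that this result is a known-good one."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def accuracy(weights: list[float], bias: float, data: list[Example]) -> float:
    """Success score: share of guesses that match the revealed known-good labels."""
    hits = sum((predict(weights, bias, x) >= 0.5) == bool(y) for x, y in data)
    return hits / len(data)


def train(data: list[Example], epochs: int = 200, lr: float = 0.5):
    """Guess, compare with the known-good label, adjust the weights, repeat."""
    weights, bias = [0.0] * len(data[0][0]), 0.0
    history = []
    for _ in range(epochs):
        for features, label in data:
            error = predict(weights, bias, features) - label  # how wrong the guess was
            weights = [w - lr * error * x for w, x in zip(weights, features)]
            bias -= lr * error
        history.append(accuracy(weights, bias, data))  # score after each pass
    return weights, bias, history


known_good = [([0.9, 0.8], 1), ([0.8, 0.6], 1), ([0.7, 0.9], 1),
              ([0.2, 0.3], 0), ([0.3, 0.1], 0), ([0.1, 0.4], 0)]
unseen = [([0.85, 0.7], 1), ([0.25, 0.2], 0)]  # labels revealed only for scoring

weights, bias, history = train(known_good)
print(history[-1], accuracy(weights, bias, unseen))
```

Before training, every guess sits at exactly 0.5, so the model only gets half the examples right; the adjustments are what close the gap.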

Eventually, the “known good” answer needs to be set aside, and the system needs to know how to recognize good from external signals and make that the goal.

For example, a Quality Rater’s grading or a large number of users’ interaction with a SERP result.

If Quality Raters or SERP signals indicate an imperfect result, that feedback is pulled into the system and the signal weights are fine-tuned, though presumably only at large scale.

A good signal would reinforce success.

Give the system a cookie, so to speak.
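Once the “known good” is set aside, the goal comes from outside signals instead. Here is a minimal Python sketch of that idea. It assumes a hypothetical satisfaction rate per signal, a hypothetical minimum sample size before anything changes (the “only at large scale” part), and a simple nudge toward or away from a target rate. None of these thresholds come from Google.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    """Aggregated outside feedback for one signal on one type of query (hypothetical).

    interactions: how many rater grades or SERP interactions were collected
    satisfied: how many of those looked like a satisfied searcher
    """
    interactions: int
    satisfied: int


def adjust_weight(weight: float, fb: Feedback, target_rate: float = 0.5,
                  min_interactions: int = 1000, step: float = 0.1) -> float:
    """Nudge a signal weight up for good feedback and down for poor feedback."""
    if fb.interactions < min_interactions:
        return weight  # too little evidence: leave the weight alone
    rate = fb.satisfied / fb.interactions
    return weight + step * (rate - target_rate)  # above target = the cookie


print(adjust_weight(1.0, Feedback(interactions=50, satisfied=50)))      # unchanged
print(adjust_weight(1.0, Feedback(interactions=2000, satisfied=1600)))  # nudged up
print(adjust_weight(1.0, Feedback(interactions=2000, satisfied=400)))   # nudged down
```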

Sample Signals

When we think of signals, we tend to think of links, anchors, HTTPS, speed, titles, etc., etc.

In their interview, Barnard and Fagella bring up some additional signal examples that most certainly are used in some queries.

Things we need to be aware of, and use to inspire other ideas (just like Stevie is).

Environmental signals like:

- Day of the week.

- Weekday versus weekend.

- Holiday or not.

- Seasons.

- Geo and how it combines with other signals.

- Weather.
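Environmental signals like these are easy to derive at query time. Here is a minimal Python sketch that turns a date, a location, and a weather reading into context features. The holiday calendar, the Northern Hemisphere season mapping, and the feature names are assumptions for illustration; how (or whether) a search engine encodes them isn’t something the interview covers.

```python
from datetime import date

# Hypothetical holiday calendar; a real system would need one per country or region.
HOLIDAYS = {date(2020, 12, 25), date(2021, 1, 1)}

# Meteorological seasons for the Northern Hemisphere (an assumption; flip them for the south).
SEASONS = {12: "winter", 1: "winter", 2: "winter", 3: "spring", 4: "spring", 5: "spring",
           6: "summer", 7: "summer", 8: "summer", 9: "autumn", 10: "autumn", 11: "autumn"}


def context_features(d: date, geo: str, weather: str) -> dict[str, object]:
    """Environmental features for a query made on date d, in geo, during this weather."""
    return {
        "day_of_week": d.strftime("%A"),
        "is_weekend": d.weekday() >= 5,  # weekday(): Monday is 0, so 5 and 6 are the weekend
        "is_holiday": d in HOLIDAYS,
        "season": SEASONS[d.month],
        "geo": geo,
        "weather": weather,
        "geo_x_weather": f"{geo}:{weather}",  # geo combined with another signal
    }


print(context_features(date(2020, 12, 25), "boston", "rain"))
```

The combined `geo_x_weather` feature is one simple way to let a model treat “rain in Boston” differently from rain elsewhere.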

Dan also makes the important point that a spike in searches for “chest pain” on a Monday could trigger increased visibility for related information, such as tips on recognizing a heart attack, on that day.
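Here is a minimal Python sketch of the chest-pain example. It assumes a simple z-score test against the volume seen on previous Mondays and a hypothetical hand-made mapping from a query to related information worth surfacing. How Google actually detects spikes or picks the extra content isn’t known; this just shows the shape of the idea.

```python
from statistics import mean, pstdev

# Hypothetical mapping from a query to related information worth surfacing on a spike.
RELATED = {"chest pain": "how to recognize a heart attack"}


def is_spike(history: list[int], today: int, z_threshold: float = 3.0) -> bool:
    """True if today's volume sits at least z_threshold standard deviations above normal."""
    mu, sigma = mean(history), pstdev(history)
    if sigma == 0:
        return today > mu
    return (today - mu) / sigma >= z_threshold


def extra_results(query: str, history: list[int], today: int) -> list[str]:
    """Related information to boost today, if this query is spiking."""
    if query in RELATED and is_spike(history, today):
        return [RELATED[query]]
    return []


previous_mondays = [100, 110, 95, 105, 100, 98, 102]  # illustrative volumes only
print(extra_results("chest pain", previous_mondays, 160))
print(extra_results("chest pain", previous_mondays, 104))
```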

Google’s Goal

Dan also brings up something interesting for us all to consider when he says:

“The fact of the matter is the weights of those factors are always tilting and shifting based on what Google wants to do for better relevance. Google also wants to reduce our ability to game the system.

They might want to change their rules just to make sure you don’t know what they are.

Now, if they can do both at the same time and it’s a straight line, that’s what they’re going to do.

But it’s almost certain that they’re making adjustments for both those purposes.

For preventing it from being gamed, and also for improving relevance. They’d love to do both all the time.”

I was surprised; I’d never thought of that specifically.

Changes to the algorithm made just for the sake of throwing us pesky SEO pros off.

I’m not sure that’s done, but it certainly could be and is worth considering.

Human Input

Above, I mentioned a person named Stevie.

It’s critical to remember that while there is no one at Google specifically deciding that something like rain in Boston should result in an augmentation of ranking factors that favor X, there is a person who decides whether weather should be tested as a possible ranking signal, and who works out how to test it.

As Dan puts it:

“[Google is] not God. It’s simply human beings saying, ‘This corpus of data we think could be used to inform this category of searches in this geo region, and in this language.

Let’s go ahead and permit that.

And then let’s garner some feedback from it and see if we can expand that use of weather data to other categories of searches, and then let’s scratch our chin again, and let’s look at user behavior.’”

Basically, a human (Stevie) decides what to test and how to test it.

He trains a machine, refines it, and monitors the results.

Once complete and if successful, they may consider expanding on the initial use if applicable, as Google did with RankBrain – taking it from previously unseen queries to all queries.
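The “start narrow, then expand if it works” pattern Dan describes can be sketched in a few lines of Python. The scopes, the `measure_lift` callback standing in for a live experiment, and the zero-lift threshold are all hypothetical. The point is only that a human defines the ladder and the stopping rule, and the data decides how far up it goes.

```python
from typing import Callable

# Hypothetical rollout ladder for a new signal such as weather data, narrowest first.
SCOPES = [
    "one category of searches, one geo region, one language",
    "more categories of searches, same geo and language",
    "all queries",
]


def rollout(measure_lift: Callable[[str], float], min_lift: float = 0.0) -> list[str]:
    """Expand one scope at a time; stop at the first scope where the signal doesn't help.

    measure_lift(scope) stands in for an experiment that returns the change
    in the chosen success metric when the signal is switched on for that scope.
    """
    approved = []
    for scope in SCOPES:
        if measure_lift(scope) <= min_lift:
            break  # back to scratching our chin: no further expansion
        approved.append(scope)
    return approved


print(rollout(lambda scope: 0.02))  # helps everywhere: all three scopes
print(rollout(lambda scope: 0.01 if scope == SCOPES[0] else -0.005))  # first scope only
```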

Our Input

And speaking of humans, there’s the role of searchers in the process.

I’m not going to say CTR or bounce rate. Rather, I’ll simply list “user satisfaction,” not as a signal, but as the goal of the machine.

As discussed, a machine learning system needs to be given a goal: something against which to rate its results.

And what goal would you give a machine learning system designed to adjust how websites are ranked?

Why, some signal or combination of signals that indicates user satisfaction, of course!

So, IMO, yes – user satisfaction is a signal insofar as it’s used to grade the success of a SERP result.

And if the signal is good, sites with features like those producing it will be impacted positively (for that query and likely for similar ones).

If users respond unfavorably to a SERP, sites with features like those on it will not be penalized, but they will be deemed a poor result for those types of queries.

So, user behavior isn’t a signal per se, it just looks and acts like one because of the way machine learning systems work.
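Here is a minimal Python sketch of that distinction. It assumes two hypothetical pages, two hypothetical ranking features, and a made-up satisfaction rate observed when each page was shown first. Satisfaction never appears as a ranking feature; it is only used to grade which weighting of the real features did better, and pages whose features win get lifted as a side effect.

```python
# Hypothetical ranking features for two pages. Note: no "satisfaction" feature here.
PAGES = {
    "page_a": {"text_match": 0.9, "speed": 0.2},
    "page_b": {"text_match": 0.6, "speed": 0.9},
}

# Hypothetical share of searchers who seemed satisfied when each page ranked first.
# This is the goal the system is graded against, not an input to the ranking.
SATISFACTION = {"page_a": 0.55, "page_b": 0.80}


def top_result(weights: dict[str, float]) -> str:
    """Rank pages by a weighted sum of their features and return the winner."""
    return max(PAGES, key=lambda p: sum(weights[f] * v for f, v in PAGES[p].items()))


def best_weights(candidates: list[dict[str, float]]) -> dict[str, float]:
    """Keep whichever weighting put the most satisfying page on top."""
    return max(candidates, key=lambda w: SATISFACTION[top_result(w)])


candidates = [{"text_match": 1.0, "speed": 0.0}, {"text_match": 0.5, "speed": 0.5}]
print(best_weights(candidates))
```

Page B doesn’t rise because searchers “voted” for it directly; the weighting that counts speed simply graded better, so any page strong on speed would benefit from it.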

And Stevie’s Input

And, of course, there’s Stevie.

The human over at Google who, as Jason puts it:

“… that Stevie person who shows up in the morning saying, ‘Right, we’re measuring this today.

And this is a measure of success.

That’s a measure of failure.

What are the metrics we should be focusing on for the right quality?’”

Stevie defines the tests and the signals involved.

Stevie defines the success metrics.

And Stevie defines failure.

The machine does the rest.

It is possible, and even probable, that the machine learning system is also looking for other signals that correlate with positive success metrics.

And either reporting on those or being allowed to simply adjust their weights accordingly.

Hopefully, that doesn’t go too far or Stevie is out of a job.
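That “looking for other signals” step could be as simple as checking which candidate signals move with Stevie’s success metric. Here is a minimal Python sketch, assuming hypothetical candidate signals, illustrative numbers, Pearson correlation as the measure, and an arbitrary 0.7 cutoff. It reports rather than re-weights, which leaves the final call with Stevie.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0


def report_correlated(candidates: dict[str, list[float]], success: list[float],
                      threshold: float = 0.7) -> dict[str, float]:
    """Candidate signals whose correlation with the success metric clears the threshold."""
    scores = {name: pearson(values, success) for name, values in candidates.items()}
    return {name: r for name, r in scores.items() if abs(r) >= threshold}


# Illustrative numbers: one success score per test bucket, plus two hypothetical signals.
success = [0.2, 0.4, 0.6, 0.8]
candidates = {"image_count": [1, 2, 3, 4], "url_length": [3, 1, 4, 1]}
print(report_correlated(candidates, success))  # only image_count makes the report
```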

But Just Watch the Video

If you didn’t watch it above, I can’t stress enough how much I recommend watching the video and getting the info from the horse’s mouth.

Hopefully you’ve found this article informative.

But I obviously couldn’t fit all the info into it, though I hope I’ve added some relevant explanations where appropriate.

If you haven’t already, enjoy the engaging and entertaining education…

More Resources:

- Why SEO & Machine Learning Are Joining Forces

- A Practical Introduction to Machine Learning for SEO Professionals

- How Search Engines Work

Image Credits

Featured Image: Adobe Stock, edited by author

Stevie Image: Adobe Stock, edited by author

ML versus AI Image: Author

Cookie Image: Adobe Stock