This is a sponsored post written by DeepCrawl. The opinions expressed in this article are the sponsor’s own.

Links aren’t dead. Yet.

Google has been giving mixed messages about the importance of backlinks, but we all know links are still a key signal search engines use to determine the importance of webpages.

While it is up for debate whether you should proactively engage in link building activities (as opposed to more general brand building activities that lead to links, social shares, etc.), monitoring and optimizing the existing backlinks pointing to your site is a must.

Let’s look at a few practical ways that you can combine crawl data and backlink data to understand which pages on your site are receiving backlinks and the actions you can take to fully exploit their value.

Adding Crawl Data Into the Mix

While backlink tools are highly valuable for keeping on top of your site’s link profile, you can take your link monitoring and optimization to the next level by combining their data with the crawl data you get from a platform like DeepCrawl.

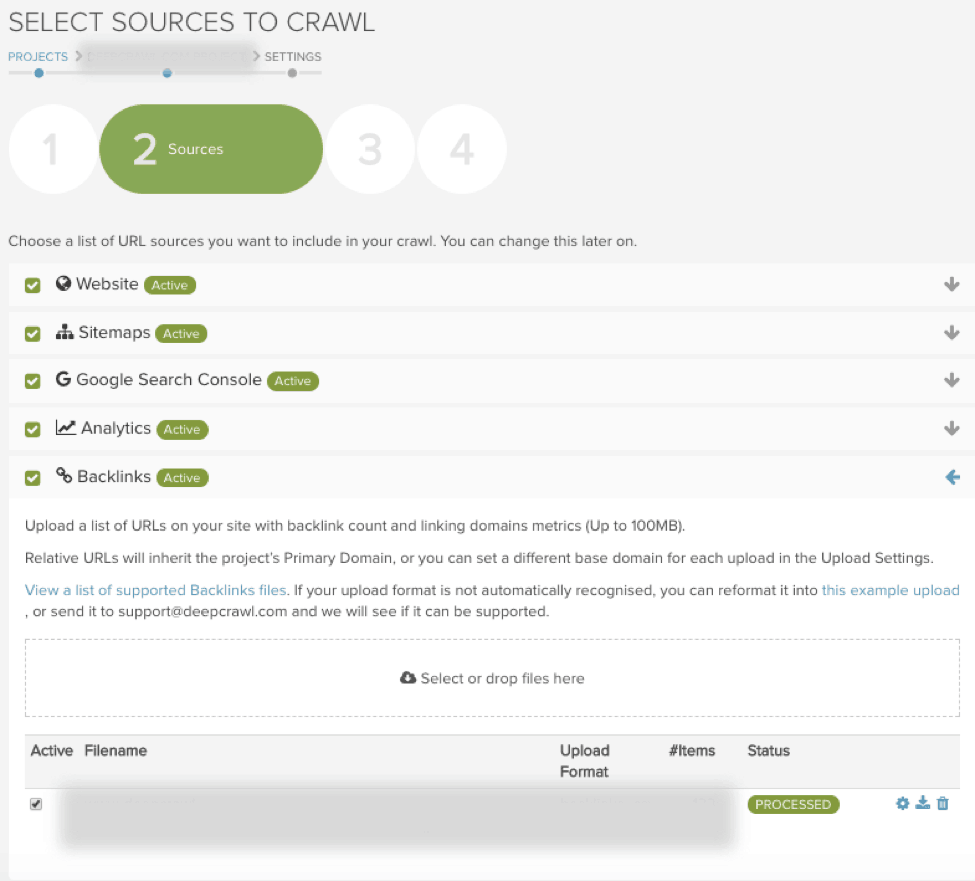

To take advantage of this killer combination, simply export the data on your pages with backlinks from your link monitoring tools as a CSV, then start a trial with DeepCrawl and upload the exported link data in the second stage of the crawl setup. If you are exporting link data from Majestic, Ahrefs or Moz, you won’t even need to reformat the CSV as part of the upload process.
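
To make the idea of combining the two data sets concrete, here is a minimal Python sketch of joining a backlink export with crawl results by URL. It is an illustration only, not how DeepCrawl processes uploads: the column names (`url`, `backlinks`, `status_code`) and the sample rows are hypothetical, and real exports from your link tool will use their own headers.

```python
import csv
import io

def join_backlinks_with_crawl(backlink_csv, crawl_csv):
    """Return one merged row per backlinked URL, matched on the URL column."""
    # Index the crawl rows by URL so each lookup is a dictionary access.
    crawl_by_url = {row["url"]: row for row in csv.DictReader(crawl_csv)}
    merged = []
    for row in csv.DictReader(backlink_csv):
        crawl_row = crawl_by_url.get(row["url"], {})
        merged.append({
            "url": row["url"],
            "backlinks": int(row["backlinks"]),
            # None means the crawl never reached this URL.
            "status_code": int(crawl_row["status_code"]) if crawl_row else None,
        })
    return merged

# Hypothetical exports, inlined so the sketch runs without any files.
backlink_export = io.StringIO(
    "url,backlinks\nhttps://example.com/a,12\nhttps://example.com/old,3\n"
)
crawl_export = io.StringIO("url,status_code\nhttps://example.com/a,200\n")
merged = join_backlinks_with_crawl(backlink_export, crawl_export)
```

A URL that appears in the backlink export but not in the crawl keeps a `status_code` of `None`, which is itself a useful signal that the page could not be reached through the site.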

After running a crawl, you can utilize a number of reports in DeepCrawl that will enhance your link data by providing insights about the target pages. You can then go one step further by adding Google Analytics and Search Console data into your crawls to assess the value of your pages with backlinks by seeing if the target pages are driving impressions in search and traffic to your site.

Let’s take a look through a few specific examples of how you can combine link and crawl data.

1. Broken Pages With Backlinks

What can you identify?

Using the Broken Pages With Backlinks Report you can find pages with backlinks that return 4xx or 5xx errors.

Why is it an issue?

You want to avoid having backlinks pointing to broken pages because this means any link equity gained from these links will be lost. Such instances will also result in poor user experience as visitors will land on a broken page rather than a relevant one.

What action can you take?

You can either restore the page to a 200 status or set up a 301 redirect to another relevant page. With the latter, you will need to make sure the redirect target makes sense in the context of a user clicking on a link and expecting to land on a relevant page.
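
If you want to reproduce this kind of check on your own merged data, a rough sketch might look like the following. The record layout (`url`, `status_code`, `backlinks`) is an assumption made for illustration, and sorting by backlink count is just one way to decide which broken pages to fix first.

```python
def broken_pages_with_backlinks(pages):
    """Keep pages that return a 4xx or 5xx status, most-linked first."""
    broken = [p for p in pages if 400 <= p["status_code"] <= 599]
    return sorted(broken, key=lambda p: p["backlinks"], reverse=True)

# Hypothetical merged crawl and backlink records.
pages = [
    {"url": "/pricing", "status_code": 200, "backlinks": 40},
    {"url": "/old-guide", "status_code": 404, "backlinks": 7},
    {"url": "/report", "status_code": 500, "backlinks": 15},
]
broken = broken_pages_with_backlinks(pages)
```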

2. Non-indexable Pages With Backlinks

What can you identify?

Non-indexable Pages with Backlinks is another key report, which will allow you to identify pages that return a 200 response but that aren’t indexable, which could be due to the page having a noindex tag or a canonical pointing to another page.

Why is it an issue?

A page with backlinks that isn’t indexable in search engines can pass link equity to other pages, but this may be less effective than allowing the page to rank in search results.

What action can you take?

With these pages you will need to decide if you want that page and its content to be discoverable by search engines. If you do want the page to be indexed, then you will need to find out why the page isn’t indexable and rectify this (e.g. removing the noindex tag or changing to a self-referencing canonical). If you don’t want the page indexed in search you can try reaching out to the linking domain and asking them to change the link to another relevant page on your site.
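
To find out why a page isn’t indexable, you can inspect its HTML for the two causes mentioned above. The sketch below uses Python’s standard `html.parser` module; the function and class names are hypothetical, and it deliberately ignores other signals such as the `X-Robots-Tag` HTTP header or relative canonical URLs.

```python
from html.parser import HTMLParser

class IndexabilityParser(HTMLParser):
    """Collects the robots noindex directive and the canonical URL, if present."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            directives = [d.strip().lower() for d in content.split(",")]
            # "none" is shorthand for "noindex, nofollow".
            if "noindex" in directives or "none" in directives:
                self.noindex = True
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

def non_indexable_reason(url, html):
    """Return 'noindex', 'canonicalized', or None if neither signal is found."""
    parser = IndexabilityParser()
    parser.feed(html)
    if parser.noindex:
        return "noindex"
    if parser.canonical and parser.canonical != url:
        return "canonicalized"
    return None
```

A self-referencing canonical returns `None` here, which matches the fix suggested above for pages you do want indexed.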

3. Redirecting URLs With Backlinks

What can you identify?

Sites change over time. It is possible you may change the URL architecture of your site and implement 301 redirects to send search engines and users from the old version of your URL to the new one. Redirecting URLs with Backlinks will flag externally linked pages that redirect to another page.

Why is it an issue?

This isn’t necessarily an issue because Google confirmed PageRank isn’t lost from 301 redirects.

What action can you take?

While backlinks to a redirecting page might not be an issue, you should review these redirecting pages to make sure the redirection target is relevant to the source page and the anchor text of the link, and that it makes sense in terms of the experience for the user.
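
Before you can review a redirection target, you need to know where a backlinked URL finally ends up. The sketch below follows a redirect map to its final destination; the map format, the function name and the `max_hops` limit are all assumptions for illustration, and judging relevance still has to be done by a person.

```python
def resolve_redirect(url, redirects, max_hops=10):
    """Follow a redirect map and return (final_url, hops).

    Returns (None, hops) if the chain loops or exceeds max_hops.
    """
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            return None, hops
        seen.add(url)
    return url, hops

# Hypothetical redirect map taken from crawl data: source URL -> target URL.
redirects = {
    "/old": "/interim",
    "/interim": "/new",
    "/loop-a": "/loop-b",
    "/loop-b": "/loop-a",
}
final_url, hops = resolve_redirect("/old", redirects)
```

Pairing each final URL with the anchor text of the backlinks pointing at the original URL gives you a simple list to review by hand.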

4. Orphaned Pages With Backlinks

What can you identify?

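At DeepCrawl, we define orphaned pages as pages that are not linked to internally from any page found in the web crawl. Orphaned pages with backlinks are therefore pages that external sites link to but that your own site does not.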

Why is it an issue?

Orphaned pages still work and may be driving traffic and link equity into the site, but they are often forgotten about and may be out of date or provide a poor user experience. Normally, you want backlinks to point towards important and unique pages that you want shown in search.

What action can you take?

Orphaned pages with backlinks should be reviewed on a page-by-page basis. If the page is providing value to your users then you should add internal links to this page. This will help Google understand how the previously orphaned page relates to others on your site.

Alternatively, you can redirect the orphaned page to a more relevant one that provides value to users and that you want to receive the link equity.
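
Conceptually, finding orphaned pages with backlinks is a set difference: the URLs in your backlink data minus the URLs that receive at least one internal link in the crawl. Here is a minimal sketch of that idea, assuming a hypothetical list of `(source, target)` internal link pairs rather than any real export format.

```python
def orphaned_pages_with_backlinks(backlinked_urls, internal_links):
    """Return backlinked URLs that no crawled page links to internally.

    internal_links is an iterable of (source_url, target_url) pairs.
    """
    internally_linked = {target for _source, target in internal_links}
    return sorted(set(backlinked_urls) - internally_linked)

# Hypothetical data: URLs from a backlink export and links found in a crawl.
backlinked_urls = ["/guide-2014", "/pricing", "/blog/launch"]
internal_links = [("/", "/pricing"), ("/blog", "/blog/launch")]
orphans = orphaned_pages_with_backlinks(backlinked_urls, internal_links)
```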

5. Disallowed URLs With Backlinks

What can you identify?

Disallowed URLs with Backlinks will highlight pages with backlinks that are disallowed as specified in your robots.txt file.

Why is it an issue?

Pages in this report are a problem because the link equity cannot be passed to the target page or to any of the pages it links to as Google has been instructed not to crawl the target URL.

What action can you take?

With disallowed pages that have backlinks, you will need to consider allowing the page to be crawled by removing its disallow rule from robots.txt, so that other pages can benefit from the link equity from the backlinks.
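
You can run a similar check yourself with Python’s standard `urllib.robotparser` module, as sketched below. The robots.txt content, URLs and function name are made up for illustration, and the module’s rule matching may differ from Google’s in edge cases, so treat the result as a first pass rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser

def disallowed_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt blocks for user_agent."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is needed.
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]

# Hypothetical robots.txt and backlinked URLs.
robots_txt = "User-agent: *\nDisallow: /private/\n"
urls = [
    "https://example.com/private/report",
    "https://example.com/blog/post",
]
blocked = disallowed_urls(robots_txt, urls)
```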

6. Meta Nofollow Pages With Backlinks

What can you identify?

In the Meta Nofollow with Backlinks report, DeepCrawl will identify pages with a meta nofollow tag that have backlinks pointing to them.

Why is it an issue?

Having a meta nofollow on a page has the effect of saying that there are no links on the page and will mean that the link equity from backlinks doesn’t spread to any of the pages linked from the target page.

What action can you take?

With any nofollowed pages with backlinks you should consider if a nofollow tag is really necessary and consider removing it so that the link equity can be passed through to the rest of your site.
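
To confirm whether a backlinked page carries a meta nofollow, you can look for the directive in its robots meta tag. The following sketch uses Python’s standard `html.parser` module; the class and function names are hypothetical, and it only covers the meta tag, not nofollow set through the `X-Robots-Tag` HTTP header or on individual links.

```python
from html.parser import HTMLParser

class MetaRobotsParser(HTMLParser):
    """Collects every directive found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.directives.update(d.strip().lower() for d in content.split(","))

def has_meta_nofollow(html):
    """Return True if the page's robots meta tag contains nofollow or none."""
    parser = MetaRobotsParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return bool(parser.directives & {"nofollow", "none"})
```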

Get Started With DeepCrawl

Hopefully, this post has given you some ideas about what you should be looking for when monitoring and maintaining your link profile to maximize link equity to your site. To start combining link and crawl data, get started with a free two-week trial account with DeepCrawl and get crawling!

About the Author

Sam Marsden is a Technical SEO Executive at DeepCrawl and a writer on all things SEO.

Image Credits

Featured Image: Image by DeepCrawl. Used with permission.

In-post Images: Images by DeepCrawl. Used with permission.