A recent tweet from Google’s Gary Illyes called attention to the problem of invalid HTML. Google is generally fine with invalid HTML, but it is less well known that invalid HTML can have negative consequences. Google’s support pages encourage publishers to code valid HTML.

Gary Illyes tweeted the following message:

“Dear JavaScript frameworks and plugins,

If you could stop putting invalid tags in the HTML head, like IMG and DIV, that would be great.

Yours truly,

Browsers”

JavaScript frameworks are code packages that serve as building blocks for apps and websites and speed up website development. “Plugins” refers to add-ons for commonly used content management systems.

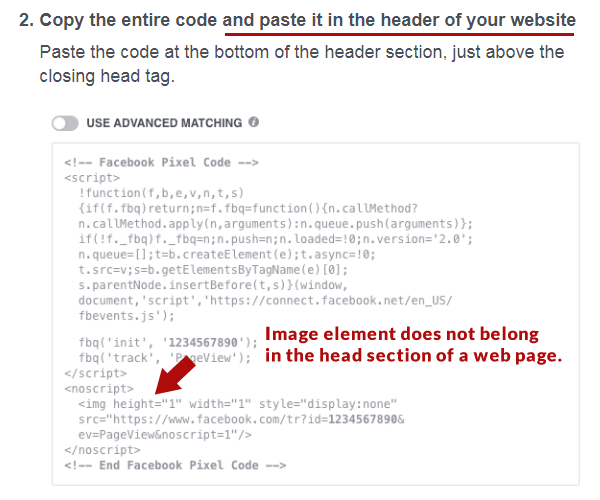

Facebook requires some advertisers to add tracking image code within the head section of a web page, where it does not belong. This can cause a cascading series of HTML errors and affect how efficiently Google crawls and indexes a web page, particularly with regard to hreflang tags.

Google Says Valid HTML Doesn’t Matter

Google’s own support page on the importance of browser compatibility states that invalid code is generally just fine.

“Although we do recommend using valid HTML, it’s not likely to be a factor in how Google crawls and indexes your site.”

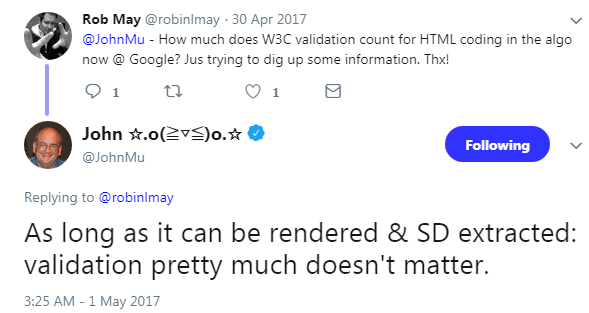

In 2017, John Mueller was asked whether valid HTML played a role in ranking, and his response made clear that validation is not important.

“As long as it can be rendered & SD extracted: validation pretty much doesn’t matter.”

Another publisher followed up to ask whether valid HTML helped with ranking.

John Mueller’s response was clear and unambiguous:

These are just a few of the statements from Google that encourage publishers not to worry about HTML validation. Most sites don’t validate, and the Internet hasn’t collapsed. However, there are valid reasons to consider valid HTML.


6 Reasons Google Advises to Validate HTML

1. Could affect crawl rate

2. Affects browser compatibility

3. Encourages a good user experience

4. Ensures that pages function everywhere

5. Useful for Google Shopping Ads

6. Invalid HTML in head section breaks Hreflang

1. Valid HTML and Crawl Rate

In a Google Search Console support page about drops in crawl rate, Google advises that invalid HTML could affect the crawl and indexing of a web page.

“Broken HTML or unsupported content on your pages: If Googlebot can’t parse the content of the page… it won’t be able to crawl them. Use Fetch as Google to see how Googlebot sees your page.”

2. Browser Compatibility

In another official webmaster support page, Google encourages the use of valid HTML in order to ensure proper rendering of web pages.

Googlebot renders your site with a browser, Chrome 41 to be specific, which dates to March 2015. Valid HTML helps ensure that your site renders well across all browsers, including the version Googlebot uses for rendering websites. For example, CSS Custom Properties are not supported by the version of Chrome that Googlebot uses for page rendering (Read: Google Engineer Issues Warning About Google Crawler).

“Clean, valid HTML is a good insurance policy, and using CSS separates presentation from content, and can help pages render and load faster.”

3. Encourages a Positive User Experience

It’s clear that Google uses the user experience as a signal in the ranking process. That is the point of the mobile-friendly requirements and of counting how many ads and popups are on a web page.

It is unlikely that Google directly uses valid HTML as a ranking signal. A speculative argument could be made that valid HTML indirectly improves the user experience, because the page renders correctly and quickly, and that this in turn could register as a positive user experience signal.

For example, valid HTML can help a web page function across all devices, browsers and operating systems. This user experience factor is so important that it’s in Google’s Webmaster Guidelines:

“Help visitors use your pages

Ensure that all links go to live web pages. Use valid HTML.”

4. Ensures that Pages Function Everywhere

Invalid HTML can push the browser into “quirks mode.” A missing or legacy DOCTYPE declaration, which an HTML validator flags, makes the browser emulate older, nonstandard rendering behavior. Usually the web page still renders fine. But sometimes the page does not function correctly.
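Browsers decide on quirks mode from the DOCTYPE at the very top of the document: no DOCTYPE, or one whose name isn’t “html,” means quirks mode, while a bare `<!DOCTYPE html>` means standards mode. The sketch below approximates that first check with Python’s standard-library `html.parser`. `DoctypeSniffer` and `triggers_quirks_mode` are hypothetical helpers written for this illustration. The full rules in the HTML standard also compare legacy PUBLIC and SYSTEM identifiers against lists of known values, which this sketch doesn’t attempt, so it returns `None` for those doctypes.

```python
from html.parser import HTMLParser


class DoctypeSniffer(HTMLParser):
    """Hypothetical helper: records the DOCTYPE only if it appears
    before any element or non-whitespace text."""

    def __init__(self):
        super().__init__()
        self.doctype = None
        self.seen_content = False

    def handle_decl(self, decl):
        # decl is the text between "<!" and ">", e.g. "DOCTYPE html"
        if self.doctype is None and not self.seen_content:
            self.doctype = decl

    def handle_starttag(self, tag, attrs):
        self.seen_content = True

    def handle_data(self, data):
        if data.strip():  # whitespace before the DOCTYPE is allowed
            self.seen_content = True


def triggers_quirks_mode(html):
    """Return True (quirks mode), False (standards mode), or None when the
    DOCTYPE carries PUBLIC/SYSTEM identifiers that need the full legacy check."""
    sniffer = DoctypeSniffer()
    sniffer.feed(html)
    sniffer.close()
    if sniffer.doctype is None:
        return True  # no leading DOCTYPE: quirks mode
    parts = sniffer.doctype.split()
    if len(parts) < 2 or parts[0].lower() != "doctype" or parts[1].lower() != "html":
        return True  # DOCTYPE name is missing or not "html": quirks mode
    if len(parts) == 2:
        return False  # plain <!DOCTYPE html>: standards mode
    return None  # legacy identifiers present: this sketch doesn't decide


print(triggers_quirks_mode("<!DOCTYPE html><html><body></body></html>"))  # False
print(triggers_quirks_mode("<html><body>Hi</body></html>"))               # True
```

A regular expression could do the same job, but using the parser means comments and whitespace before the DOCTYPE are handled the way the sketch’s comments describe.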

5. Valid HTML and Google Merchant Center

Google Merchant Center is a tool for creating Shopping Ads. Google’s Merchant Center support page recommends using valid HTML.

“Use valid HTML.

We also detect the price that you’re displaying based on the structure of your landing page. Using valid HTML helps ensure that we detect the correct price. …Use the W3C validation service to check your HTML.”

6. Invalid HTML in Head Section Breaks Hreflang

In a Webmaster Hangout from 2016, a web publisher asked why Google wasn’t picking up his hreflang tags. Mueller responded that invalid code in the head section can break how Google processes the head when it renders the page, so the hreflang tags are not picked up.

Here’s how Google’s John Mueller explains it:

“So it might just be that we don’t recognize the hreflang markup at all on those pages.

For example, what might happen is we can crawl and index those pages. But when we render those pages something in the head section of the pages is added early on and that kind of breaks everything within the head, which includes the hreflang markup.”
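To make the mechanism concrete: under HTML parsing rules, the first element that isn’t allowed in the head (such as the IMG or DIV tags Illyes mentions) implicitly closes the head, and any hreflang links that follow it end up in the body, where Google may not pick them up. The sketch below approximates that check with Python’s standard-library `html.parser`. It is a simplified illustration, not the full HTML tree-construction algorithm: it expects an explicit `<head>` tag and ignores stray text. `HeadChecker` is a hypothetical helper and the example.com URLs are placeholders.

```python
from html.parser import HTMLParser

# Elements the HTML standard allows as children of <head>.
HEAD_ELEMENTS = {"base", "link", "meta", "noscript", "script",
                 "style", "template", "title"}


class HeadChecker(HTMLParser):
    """Hypothetical helper: approximates (does not fully implement) the
    rule that the first element not allowed in <head> implicitly closes
    the head, pushing later tags into <body>."""

    def __init__(self):
        super().__init__()
        self.in_source_head = False   # between <head> and </head> in the markup
        self.in_parsed_head = False   # still in <head> as a parser would see it
        self.breaking_tag = None      # first disallowed tag found in <head>
        self.head_hreflang = []       # hreflang values that stay in <head>
        self.displaced_hreflang = []  # hreflang values pushed out of <head>

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_source_head = self.in_parsed_head = True
            return
        if tag == "body":
            self.in_source_head = self.in_parsed_head = False
            return
        if self.in_parsed_head and tag not in HEAD_ELEMENTS:
            # A parser would act as if </head> appeared right here.
            self.in_parsed_head = False
            self.breaking_tag = tag
        if tag == "link" and self.in_source_head:
            hreflang = dict(attrs).get("hreflang")
            if hreflang:
                if self.in_parsed_head:
                    self.head_hreflang.append(hreflang)
                else:
                    self.displaced_hreflang.append(hreflang)

    def handle_endtag(self, tag):
        if tag in ("head", "body"):
            self.in_source_head = self.in_parsed_head = False


page = """<!DOCTYPE html>
<html><head>
<title>Example</title>
<link rel="alternate" hreflang="en" href="https://example.com/en/">
<img src="https://example.com/pixel.gif" width="1" height="1">
<link rel="alternate" hreflang="de" href="https://example.com/de/">
</head><body><p>Hello</p></body></html>"""

checker = HeadChecker()
checker.feed(page)
checker.close()
print(checker.breaking_tag)        # img
print(checker.head_hreflang)       # ['en']
print(checker.displaced_hreflang)  # ['de']
```

In this example, the tracking-pixel IMG tag ends the head early, so the “de” hreflang link would no longer sit in the head even though it appears there in the source.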

Valid HTML Matters

Google’s support pages show that valid HTML matters. Gary Illyes’ recent tweet about invalid tags in the head section is a reminder of why it is worth validating HTML. Validation can protect a web page from unforeseen errors.

If you’re interested in a deep dive into what breaks HTML, I highly recommend Edward Lewis’ article on the Fatal Error HTML Validation bug that can affect how Google crawls your site.

Advantages of Valid HTML

Matthew Edward of SpringBoardSEO.com has extensive experience manually editing web pages to make them valid, so I asked whether he knew of any advantages to coding valid HTML.

“It can help you prevent rendering issues that some browsers will forgive, but others will take literally. Most of the errors that would prevent Google from properly crawling and indexing would be obvious looking at a page.”

Valid HTML Doesn’t Matter

One could dismiss these six reasons as edge cases. Most web pages appear to be unaffected by poor HTML coding practices. But then again, most things are not important until they become important.

A pragmatic approach is to check that there aren’t any inexplicable crawl errors and, if there are none, move on to more important issues.

How do you feel about the issue of valid HTML? What’s your opinion?

Images by Shutterstock, Modified by Author

Screenshots by Author