Monitoring ongoing website changes is critical.

If you make sweeping changes that have disastrous consequences later, you want a paper trail you can go back to.

Whether you use emails or an ongoing spreadsheet, track every change you make to the website, from new content to sweeping technical changes.

This way, you can later pinpoint exactly what changed and when.
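If a spreadsheet is your tracking method, a few lines of Python can keep it consistent. This is a minimal sketch, assuming a CSV change log with a hypothetical column layout (date, url, change_type, description, author); `log_change` and `read_changes` are illustrative helper names, not part of any tool.

```python
import csv
from datetime import date
from pathlib import Path

# Hypothetical column layout for the change log; adjust to match your own spreadsheet.
FIELDS = ["date", "url", "change_type", "description", "author"]

def log_change(log_path, url, change_type, description, author, when=None):
    """Append one website change to a CSV change log, writing a header row if the file is new."""
    path = Path(log_path)
    is_new = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()
        writer.writerow({
            "date": (when or date.today()).isoformat(),
            "url": url,
            "change_type": change_type,
            "description": description,
            "author": author,
        })

def read_changes(log_path):
    """Return every logged change as a list of dicts, in the order they were written."""
    with Path(log_path).open(newline="", encoding="utf-8") as fh:
        return list(csv.DictReader(fh))
```

For example, `log_change("site-changes.csv", "https://example.com/blog/", "content", "Published new pricing guide", "jane")` appends one row you can later filter by date or change type when something breaks.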

Plus, it’s always a good idea to monitor website updates on an ongoing basis to make sure that site mismanagement doesn’t cause issues later.

In this post, we will audit and identify major issues that can occur when it comes to ongoing website updates.

Site Uptime

In an SEO audit, identifying site uptime issues can help determine problems with the server.

If you own the site, it’s a good idea to have a tool like Uptime Robot that will email you every time it identifies the site as being down.
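A monitoring service like Uptime Robot runs checks continuously and handles the alerting for you. To see what a single check involves, here is a minimal sketch using only Python's standard library; `check_site` and `is_up` are hypothetical helper names, and treating any 2xx or 3xx status as "up" is an assumption you may want to tighten.

```python
import urllib.error
import urllib.request

def is_up(status_code):
    """Treat any 2xx or 3xx response as 'up'; errors or no response count as down."""
    return status_code is not None and 200 <= status_code < 400

def check_site(url, timeout=10.0):
    """Request the URL once and return (is_up, status_code).

    status_code is None when no HTTP response arrived at all.
    urlopen follows redirects, so the status is that of the final response.
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return is_up(resp.status), resp.status
    except urllib.error.HTTPError as err:
        # The server answered, but with an error status such as 500 or 503.
        return False, err.code
    except OSError:
        # DNS failure, refused connection, or timeout: no HTTP response.
        return False, None
```

Run `check_site("https://example.com/")` on a schedule (a cron job, for instance) and alert yourself whenever the first value is `False`; a dedicated service adds checks from multiple locations and history on top of this.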

Server Location

Identifying the server location is a useful check for location relevance and geolocation.

How to Check

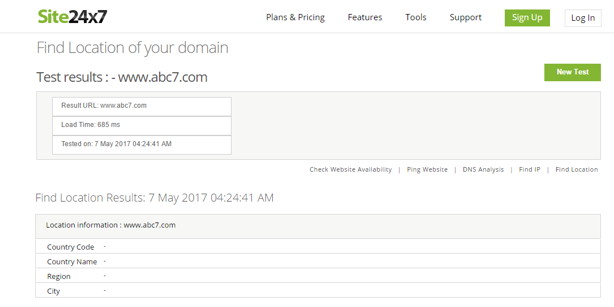

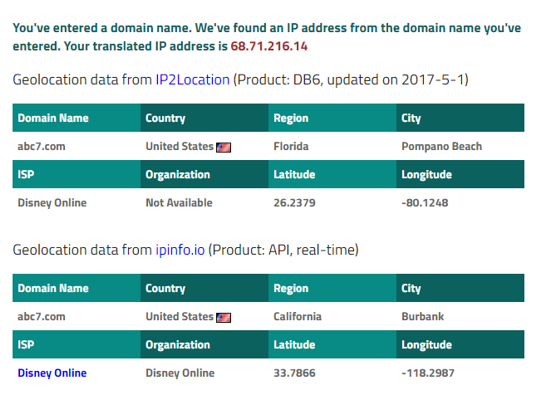

Using a tool such as site24x7.com or iplocation.net can help you identify the physical location of the server for a specific domain.

Site24x7

iplocation.net

iplocation.net
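If you want to look up a domain’s IP addresses yourself before pasting them into a geolocation tool, Python’s standard library can resolve them. This is a minimal sketch; `resolve_server_ips` and `is_public` are hypothetical helper names. Keep in mind that a site behind a CDN will resolve to the CDN’s edge servers rather than the origin host, and private or loopback addresses cannot be geolocated at all.

```python
import ipaddress
import socket

def resolve_server_ips(domain):
    """Return the sorted, de-duplicated IP addresses DNS currently returns for a domain."""
    infos = socket.getaddrinfo(domain, None)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the address.
    return sorted({info[4][0] for info in infos})

def is_public(ip):
    """True if the address is globally routable, i.e. worth looking up in a geolocation tool."""
    return ipaddress.ip_address(ip).is_global
```

For example, `[ip for ip in resolve_server_ips("example.com") if is_public(ip)]` gives the addresses to check on a site like iplocation.net.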

Terms of Service & Privacy Pages

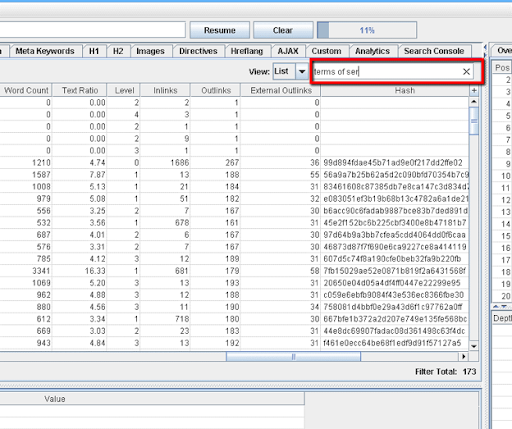

Quite simply, you can use Screaming Frog’s search function (on the right side) to identify terms of service and privacy pages showing up in your Screaming Frog crawl.

If they don’t show up in the crawl, check the site manually to make sure the pages actually exist and aren’t hosted on another domain (this happens sometimes).

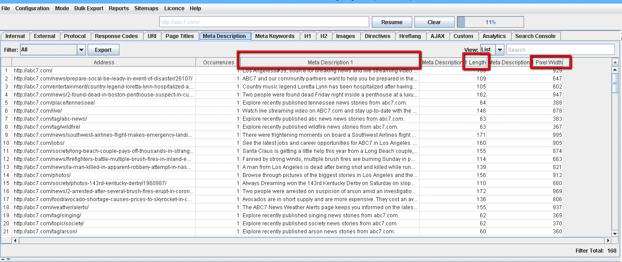
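If you export the crawled URLs, the same check can be scripted. This is a minimal sketch, assuming the legal pages use recognizable URL slugs; the keyword patterns and the helper names `find_legal_pages` and `missing_legal_pages` are hypothetical, so extend them to match the slugs your site actually uses.

```python
import re
from urllib.parse import urlsplit

# Hypothetical URL keywords; \btos\b avoids false matches such as "/photos/".
LEGAL_PATTERNS = {
    "terms": re.compile(r"terms|\btos\b|conditions", re.IGNORECASE),
    "privacy": re.compile(r"privacy", re.IGNORECASE),
}

def find_legal_pages(urls):
    """Group crawled URLs by which legal page their path appears to be."""
    found = {name: [] for name in LEGAL_PATTERNS}
    for url in urls:
        path = urlsplit(url).path  # match on the path only, not the domain
        for name, pattern in LEGAL_PATTERNS.items():
            if pattern.search(path):
                found[name].append(url)
    return found

def missing_legal_pages(urls):
    """Names of legal pages with no matching URL; these need a manual on-site check."""
    return [name for name, hits in find_legal_pages(urls).items() if not hits]
```

Anything `missing_legal_pages` reports is a prompt to look on-site, since the page may simply live on another domain or use an unexpected slug.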

Duplicate Meta Information On-Site

In Screaming Frog, it’s quite easy to find duplicate meta information on-site.

What to Check

After the crawl, click the Meta Description tab and use the Duplicate filter. You can also check for duplicate titles in the Page Titles tab.

In addition, this information is easily visible and can be filtered in the Excel export.
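The export can also be checked programmatically. This is a minimal sketch, assuming a CSV export with "Address", "Title 1", and "Meta Description 1" columns; treat those column names as assumptions and adjust them to whatever your export actually contains. `load_export` and `find_duplicates` are hypothetical helper names.

```python
import csv
from collections import defaultdict

def load_export(path):
    """Read a crawl export CSV into a list of dicts (utf-8-sig strips a leading BOM if present)."""
    with open(path, newline="", encoding="utf-8-sig") as fh:
        return list(csv.DictReader(fh))

def find_duplicates(rows, field, url_field="Address"):
    """Map each non-empty value of `field` that appears on more than one URL to those URLs."""
    groups = defaultdict(list)
    for row in rows:
        value = (row.get(field) or "").strip()
        if value:  # blank titles/descriptions are a separate "missing" issue
            groups[value].append(row[url_field])
    return {value: urls for value, urls in groups.items() if len(urls) > 1}
```

Calling `find_duplicates(load_export("internal_html.csv"), "Meta Description 1")` would list each duplicated description alongside the pages that share it.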

Breadcrumb Navigation

Identifying breadcrumb navigation can be simple or complex, depending on the site.

If a developer is doing their job correctly, breadcrumb navigation will be easily identifiable, usually with a comment indicating the navigation is a breadcrumb menu.

In these cases, it is easy to create a custom extraction that will help you crawl and extract all the breadcrumb navigation on the site.

How to Check

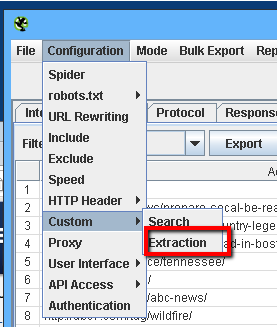

Click on Configuration > Custom > Extraction to bring up the extraction menu.

Set up the extraction with an XPath, CSSPath, or regex that targets the site’s breadcrumb markup, and the crawl will pull the breadcrumb navigation from every page on the site.

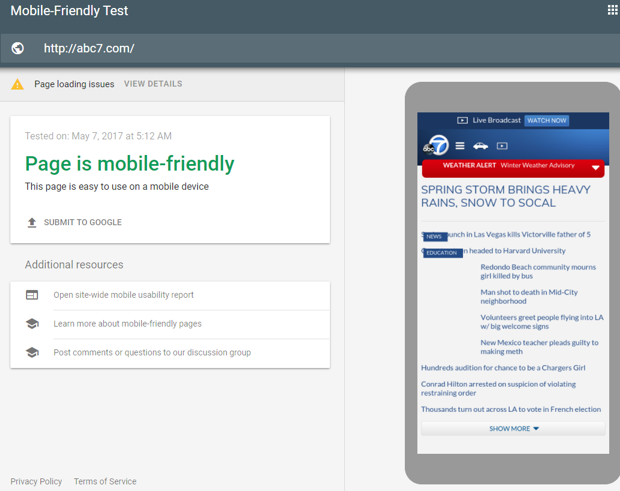
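To prototype an extraction before running a full crawl, you can test it against a single page’s HTML. This minimal sketch uses Python’s built-in html.parser and assumes the breadcrumb container carries "breadcrumb" in its class or aria-label attribute, a common convention but not a guarantee. In Screaming Frog, an XPath along the lines of //nav[@aria-label="Breadcrumb"]//a would target the same hypothetical markup. The class name `BreadcrumbParser` is illustrative.

```python
from html.parser import HTMLParser

class BreadcrumbParser(HTMLParser):
    """Collect link text inside the first element whose class or aria-label mentions 'breadcrumb'."""

    def __init__(self):
        super().__init__()
        self.container_tag = None  # tag name of the breadcrumb container, once found
        self.level = 0             # nesting depth of same-named tags; 0 means outside the container
        self.in_link = False
        self.text = []
        self.crumbs = []

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}
        if self.container_tag is None:
            marker = (a.get("class", "") + " " + a.get("aria-label", "")).lower()
            if "breadcrumb" in marker:
                self.container_tag, self.level = tag, 1
            return
        if self.level == 0:
            return  # already past the first breadcrumb container
        if tag == self.container_tag:
            self.level += 1
        elif tag == "a":
            self.in_link, self.text = True, []

    def handle_endtag(self, tag):
        if self.level == 0:
            return
        if tag == "a" and self.in_link:
            self.crumbs.append("".join(self.text).strip())
            self.in_link = False
        elif tag == self.container_tag:
            self.level -= 1

    def handle_data(self, data):
        if self.in_link:
            self.text.append(data)
```

After `parser.feed(html)`, `parser.crumbs` holds the linked breadcrumb items in order; an unlinked current-page item (common at the end of a trail) is intentionally skipped.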

Mobile-Optimized

In May 2019, Google announced that mobile-first indexing would become the default for brand-new websites. That makes it more important than ever to make sure your site is optimized for mobile.

How to Check

Use Google’s Mobile-Friendly Test tool to find out whether your site is mobile-friendly.
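Google’s tool renders the page the way a phone would, which no simple script can replicate. As a quick first-pass check across many crawled pages, you can at least confirm that each page declares a responsive viewport, one common prerequisite for responsive design. This is a heuristic sketch; `has_responsive_viewport` is a hypothetical helper name.

```python
from html.parser import HTMLParser

class ViewportFinder(HTMLParser):
    """Record the content of the first <meta name="viewport"> tag, if any."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        if tag == "meta" and self.content is None:
            a = {k: (v or "") for k, v in attrs}
            if a.get("name", "").lower() == "viewport":
                self.content = a.get("content", "")

def has_responsive_viewport(html):
    """True if the page sets width=device-width in its viewport meta tag."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return False
    return "width=device-width" in finder.content.replace(" ", "").lower()
```

A page that fails this check deserves a closer look in Google’s tool; a page that passes can still have mobile usability problems.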

Site Usability

Usability matters to your users: the easier a site is to use, the better.

User testing services can help you figure out how users really react when they use your site.

How to Check

First, see how users are actually using your site through a service like UserTesting.com.

This will give you invaluable information you can use to identify where your site’s weaknesses lie.

Heatmaps are also invaluable tools showing you where your users are clicking most.

When you test your site with heatmaps, the results may surprise you.

Users may be clicking in places you don’t expect. One of the best tools for heatmap testing is Crazy Egg.
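Conceptually, a click heatmap is an aggregation: each click’s page coordinates fall into a grid cell, and cells with more clicks render "hotter." The sketch below illustrates that idea with hypothetical (x, y) pixel coordinates and a 50-pixel grid; it is not how Crazy Egg or any specific tool implements its heatmaps.

```python
from collections import Counter

def bin_clicks(clicks, cell_size=50):
    """Count clicks per grid cell; pixel (x, y) maps to cell (x // cell_size, y // cell_size)."""
    return Counter((x // cell_size, y // cell_size) for x, y in clicks)

def hottest_cells(clicks, cell_size=50, top=3):
    """The `top` grid cells with the most clicks, as ((col, row), count) pairs."""
    return bin_clicks(clicks, cell_size).most_common(top)
```

A smaller `cell_size` gives a finer-grained map but needs more clicks before patterns become meaningful.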

Use of Google Analytics & Google Search Console

When using Google Analytics, there is a specific analytics ID (UA-#####…) that shows up when it’s installed.

In addition, Google Search Console verification can leave its own signature code on the page, such as a google-site-verification meta tag.

How to Check

Using Screaming Frog, you can create a custom extraction for these snippets of code to identify every page on your site with a proper Google Analytics install.

Click on Configuration > Custom > Extraction, and use the proper CSSPath, XPath, or Regex to figure out which pages have Google Analytics installed.

For Google Search Console, it can be as simple as logging into the domain owner’s GSC account and checking whether that account still has verified access to the site.
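Before building the extraction, it helps to test your patterns against a page’s source. This minimal sketch assumes two ID formats: Universal Analytics IDs like UA-12345678-1 and newer GA4 measurement IDs starting with G-. It also assumes the verification meta tag lists name= before content=. Regex patterns along these lines can likely be adapted for Screaming Frog’s Regex extraction. Note that a page without the meta tag may still be verified by DNS, an HTML file, or another method. `tracking_signals` is a hypothetical helper name.

```python
import re

# Assumed formats: Universal Analytics "UA-<account>-<property>" or GA4 "G-<alphanumeric>".
GA_ID = re.compile(r"\b(UA-\d{4,10}-\d{1,4}|G-[A-Z0-9]{6,12})\b")

# Assumes name= precedes content= in the verification tag.
GSC_META = re.compile(
    r"""<meta\s+name=["']google-site-verification["']\s+content=["']([^"']+)["']""",
    re.IGNORECASE,
)

def tracking_signals(html):
    """Return the analytics IDs and Search Console verification tokens found in a page's HTML."""
    return {
        "analytics_ids": sorted(set(GA_ID.findall(html))),
        "search_console_tokens": GSC_META.findall(html),
    }
```

Running this over each crawled page and flagging those with an empty `analytics_ids` list approximates the "which pages lack Analytics" check.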

Backlink Factors

Now, more than ever, it has become a necessity to create a natural link profile that is healthy and free of bad links.

Unfortunately, bad links are unavoidable in today’s internet economy. The key is to make sure that they don’t become a major part of your link profile.

Things become even more problematic when a competitor decides they want to hit you with a negative SEO brute-force bad link attack in an effort to cause Penguin to algorithmically devalue your site.

Penguin has become a critical part of Google’s algorithm, so it’s important to have an ongoing link examination and pruning schedule.

This helps you identify potentially harmful links before they cause significant issues for your site.

The factors that you can check using a link profile audit include:

- Number of linking root domains.

- Number of links from separate C-class IPs.

- Number of linking pages.

- Alt text (for image links).

- Links from .EDU or .GOV domains.

- Authority of linking domain.

- Links from competitors.

- Links from bad neighborhoods.

- Diversity of link types.

- Backlink anchor text.

- Nofollow links.

- Excessive 301 redirects to the page.

- Link location in content.

- Link location on page.

- Linking domain relevancy.
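Several of the countable factors above can be tallied from a raw backlink export. This is a minimal sketch over hypothetical records: the keys source_url, source_ip, anchor, and nofollow are assumptions, not any tool’s export format. It uses a naive "last two labels" rule for root domains, which miscounts suffixes like .co.uk, and it handles IPv4 addresses only.

```python
import ipaddress
from collections import Counter
from urllib.parse import urlsplit

def root_domain(url):
    """Naive root domain: the last two host labels (wrong for suffixes like .co.uk)."""
    host = (urlsplit(url).hostname or "").lower()
    return ".".join(host.split(".")[-2:])

def c_class(ip):
    """First three octets of an IPv4 address, e.g. 203.0.113.7 -> 203.0.113."""
    return ".".join(str(ipaddress.IPv4Address(ip)).split(".")[:3])

def link_profile_summary(backlinks):
    """Summarize hypothetical backlink records into a few link profile counts."""
    links = list(backlinks)
    return {
        "linking_pages": len({b["source_url"] for b in links}),
        "linking_root_domains": len({root_domain(b["source_url"]) for b in links}),
        "c_class_ips": len({c_class(b["source_ip"]) for b in links}),
        "nofollow_share": sum(b["nofollow"] for b in links) / len(links) if links else 0.0,
        # Normalize case and whitespace so "Widgets" and "widgets " count together.
        "top_anchors": Counter(b["anchor"].strip().lower() for b in links).most_common(5),
    }
```

A large gap between linking pages and linking root domains, or between root domains and C-class IPs, is the kind of pattern worth digging into during the audit.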

How to Check

Majestic SEO gives you an at-a-glance view of the issues in your link profile almost instantly.

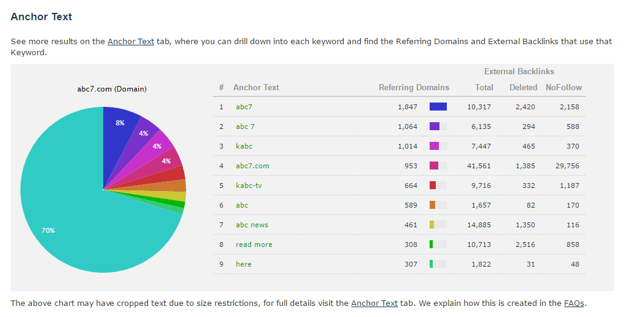

For example, let’s examine ABC7.com’s link profile.

The link profile looks healthy, with more than 1 million backlinks coming from more than 20,000 referring domains. Referring IPs number more than 14,000, and referring subnets come to around 9,700.

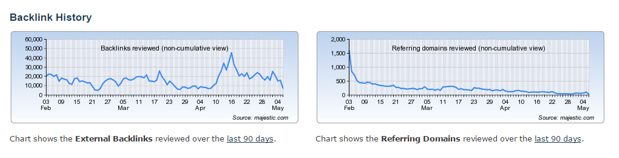

The backlink history charts give you a good idea of link velocity and acquisition factors over the past 90 days.

Here is where we can begin to see more in-depth data points about ABC7’s link profile.
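Majestic draws these charts for you, but the underlying idea is simple: link velocity is a count of newly discovered links per period. If you have a list of first-seen dates for new backlinks, this minimal sketch buckets them into 7-day windows over the last 90 days. The input format (a list of `datetime.date` objects) is an assumption, not Majestic’s export format, and `weekly_link_velocity` is a hypothetical helper name.

```python
from collections import Counter
from datetime import date, timedelta

def weekly_link_velocity(first_seen_dates, today, days=90):
    """Count new backlinks per 7-day bucket over the last `days` days.

    Bucket 0 is the most recent week; the last bucket may cover fewer than 7 days.
    """
    start = today - timedelta(days=days)
    buckets = Counter()
    for seen in first_seen_dates:
        if start < seen <= today:
            buckets[(today - seen).days // 7] += 1
    return [buckets[i] for i in range((days + 6) // 7)]
```

A sudden spike in one bucket, far above the surrounding weeks, is the kind of acquisition pattern worth investigating, whether it comes from a successful campaign or a negative SEO attack.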

Using Majestic’s in-depth reports, you can pull a comprehensive list of backlinks. Let’s get started and identify what we can do to perform a link profile audit.

Image Credits

Featured Image: Paulo Bobita

All screenshots taken by author