On May 3, 2015, it happened again.

Google quietly launched an update – now popularly known as the “Quality Update,” though in truth it has no official name.

It struck fear into the hearts of many webmasters, digital marketers, and SEO professionals.

You can go to bed one night, cozy in the knowledge that you have supreme visibility in the search results…

…Only to wake up the next morning and discover your rankings have been obliterated.

Traffic plummets.

Leads and sales decrease. It’s a slippery slope to losing revenue.

On the other hand, many wake up pleasantly surprised to see their position on Google search results boosted.

In general, for any Google algorithm update, the numbers get shuffled. Some win, some lose.

Search is a zero-sum game.

In fact, it took Google a while to actually acknowledge the update in the first place.

It was even known as the “Phantom Update,” or “Phantom 2,” because marketers definitely could see the results, but it wasn’t officially confirmed at the outset.

The History of Google’s Quality Update

Search marketers started calling the May 2015 update the “Quality Update” because – you guessed it – it directly impacted the part of the algorithm that helps Google measure site quality.

Specifically, in a statement, the search giant admitted the update affected the “core ranking algorithm” and “how it assesses quality.”

Google has a history of this type of update. It all started with Panda in 2011.

Following in Panda’s Pawprints

In the years leading up to the big Panda algorithm, Google made changes to its algorithm infrequently and predictably. An update would roll out, time would pass, and SEOs could adjust before the next update came along.

Then, in 2010, Google introduced Caffeine. Caffeine wasn’t an update, but rather a reboot. They overhauled the search engine for faster, more relevant results and better indexing. This also meant the update pace changed dramatically.

Instead of a slow and steady stream of changes, Google slid into its current modus operandi. Today, Google makes thousands of changes to its algorithm per year, and lots of bigger changes roll out without warning. These haunt marketers like ghosts, which is perhaps why the unofficial term “Phantom Update” is so fitting.

Only the biggest updates garner any announcements.

One of these huge updates was Panda, an algorithm change that came in February 2011. Panda essentially set the bar for site quality. It aimed to weed out those sites with lower-quality content, including content farms that scraped the internet for information and created as many pages as possible for higher rankings.

With this update, Google made it abundantly clear that quality matters for ranking. You can’t manufacture or fake quality. Quality is authentic, trustworthy, thorough, factually supported, and accurate.

Since Panda, Google has continued to make updates that affect how the algorithm sifts through sites and separates the wheat from the chaff, the high-quality from the low-quality.
 
The first of these was the Quality Update of May 2015, the biggest change in terms of the quality factor since Panda’s early days as a cub.

So, What Did the Quality Update Do?

The Quality Update was all about quality (duh). But, how does Google define “quality” for websites?

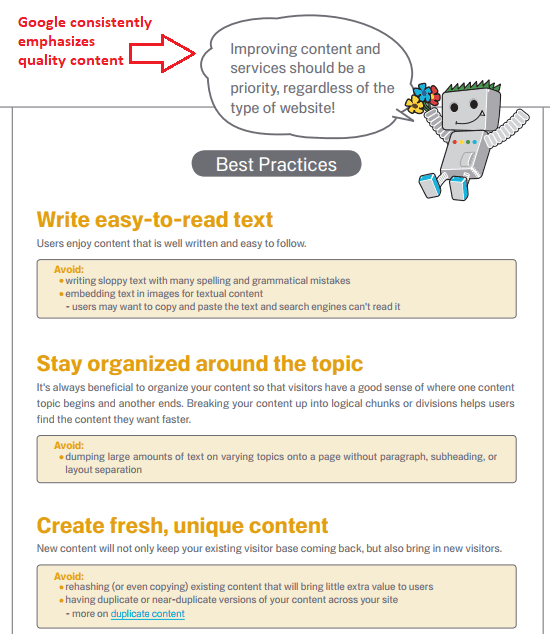

In its Search Engine Optimization Starter Guide, Google dedicates an entire chapter to the topic. In fact, read any Google-authored documentation, guide, or course, and you’ll find the same tenet repeated over and over:

Quality content matters if you want to rank.

That brings us to the reason the Quality Update was launched: to demote the rankings of low-quality content in organic search so users could get better, more trustworthy, and authoritative results.

Who Was Impacted by the Update & Why It Matters

The reason this update was launched in the first place raises the question: What sites felt the biggest impacts?
 
This change was not targeted, but lots of sites that had similar quality problems saw drastic organic traffic decreases after it rolled out. Here are a few major types of content and sites that Search Engine Land identified as affected.

1. Thin Content & Clickbait Articles

Thin content is just what it sounds like. It’s skimpy. The information is slim. It doesn’t really provide any answers. Plus, it’s short (and not sweet).

Clickbait articles are similar in nature, but they lure you to the page with a headline that tricks you into clicking on it. The page you land on has thin content that doesn’t have much to do with the headline.

These types of content are frustrating for readers, so it’s easy to see why they got demoted in search results.

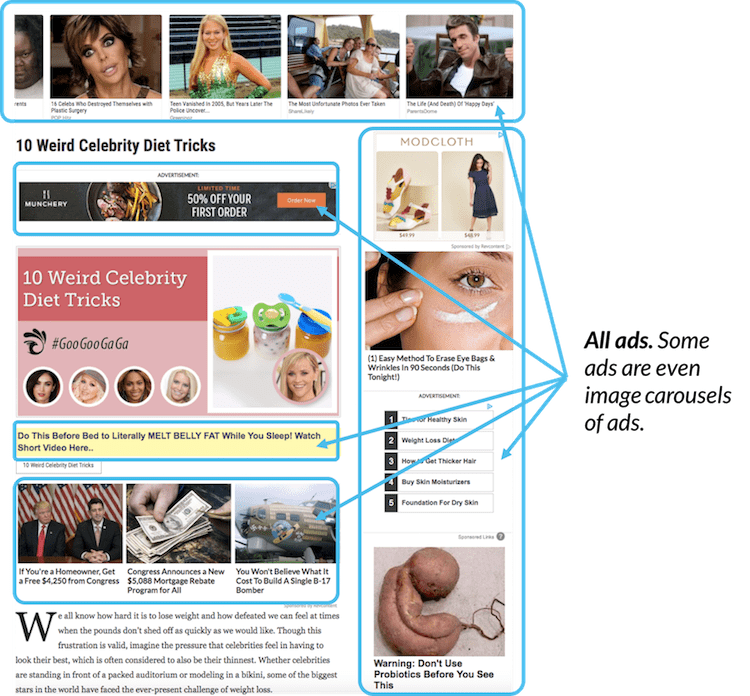

2. Pages with Heavy Advertising

In-your-face ads are annoying – nobody will disagree about that. When you’re trying to find information that’s important to you, getting slammed with a page full of ads is also confusing. It gets in the way of your ability to get the information you need.

These types of pages were also affected by the Quality Update. Any site that prioritized ads over its content was hit.

Here’s a great example from Moz of annoying, heavy advertising:

3. Content Farm Articles & Mass-Produced ‘How-To’ Pages

Great examples of sites the Google update impacted negatively are the aforementioned content farms. They’re definitely not as prevalent in organic search results today, and Google’s updates most likely have plenty to do with that.

Here’s what content farms do wrong:

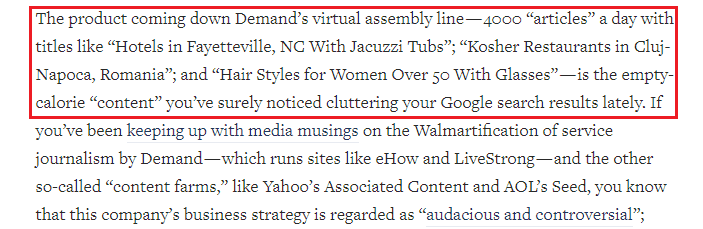

- Their goal is to maximize pageviews and earn revenue from on-page advertising. The content is just how they get the pageviews. It’s written for search engines, not users.

- As such, the content quality isn’t of much importance. Hordes of freelancers are paid next to nil to produce content at record speed.

- Since the writers aren’t paid for quality work, the content produced is rehashed, poorly written, disorganized, or full of inaccuracies.

Here’s a great article from an editor who actually worked for a content farm, Demand Media. She was only paid $3.50 per article, which amounted to $7 an hour. Meanwhile, thousands of articles piled up per day from overworked writers.

HubPages, a site full of user-generated, farmed content, reported a drop in traffic (down 22 percent) across their entire domain shortly after the Quality Update.

In addition, CNBC reported that other sites like Answers.com, WikiHow, and eHow saw hits to organic traffic. (It’s worth noting that eHow is owned by Demand Media; HubPages is a separate company.)

Among all the farmed content sites, HubPages took the most umbrage at its dramatic drop in Google traffic. In particular, the company called it “pretty brutal” in its blog post and “a giant whack across the board” in the CNBC article.

How Do Sites Bounce Back from the Quality Update?

In a case like the May 2015 Quality Update, webmasters and marketers need to regroup and work on website improvements – which is what most of them did.

The process of bouncing back from algorithmic hits on a site or domain’s organic search rankings can take months. However, if these sites want any hope of clinching that coveted Google search visibility, they have to play ball.

Here’s a basic recovery process that many authority sites recommend, no matter the update scenario.

The Basic Recovery Process for an Algorithm Hit

Sites like Moz, Search Engine Land, Content Marketing Institute, and more recommended the following steps.

- Don’t wait to see if your numbers go back up. Start working on site-wide improvements immediately.

- Do an audit of your entire site. Look at pages critically and put yourself in the shoes of an average user.

- Pay attention to potential annoyances for users. Think insistent pop-ups, in-your-face/content-integrated advertising, deceptive links, or thin, unhelpful content.

- Create a plan to address the issues you see, and ones you discover from a deep audit.

- Continue forward with putting out high-quality content for every page.
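As a rough starting point for the audit step above, the sketch below flags pages whose body text is very short. Word count is only a crude proxy for "thin" content, and the `MIN_WORDS` cutoff, the `flag_thin_pages` helper, and the sample pages are all hypothetical; Google publishes no word-count threshold, so treat the output as a list of pages to review by hand, not a verdict.

```python
import re

# Hypothetical cutoff chosen only for illustration.
# Google does not publish a word-count threshold for "thin" content.
MIN_WORDS = 300


def word_count(text: str) -> int:
    """Count word-like tokens in a page's body text."""
    return len(re.findall(r"\b\w+\b", text))


def flag_thin_pages(pages: dict[str, str], min_words: int = MIN_WORDS) -> list[str]:
    """Return the URLs whose body text has fewer than min_words words, shortest first."""
    counts = {url: word_count(body) for url, body in pages.items()}
    thin = [url for url, n in counts.items() if n < min_words]
    return sorted(thin, key=counts.get)


# Made-up sample data: URL path -> extracted body text.
SAMPLE_PAGES = {
    "/guide": "word " * 500,
    "/stub": "Short answer here.",
}

if __name__ == "__main__":
    for url in flag_thin_pages(SAMPLE_PAGES):
        print(f"Review for thin content: {url}")
```

A short page is not automatically a bad one, so a flagged URL still needs the "average user" read-through described in the list.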

How Did HubPages Respond?

HubPages was one of the most vocal sites after the May 2015 Quality Update rolled out. They also revealed a plan of attack to “get back in Google’s good graces.”

This included “aggressively editing” the pages with the highest traffic. They also said they were noindexing a huge quantity of pages.

Noindexing involves putting a snippet of code on pages that you don’t want Google – or any other search engine – to index. If search engines don’t index these pages, they become invisible to searchers.
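The snippet in question is usually a robots meta tag in the page’s head, such as `<meta name="robots" content="noindex">`. The source doesn’t show the exact markup HubPages used, so the sample pages below are assumptions. This minimal Python sketch, using only the standard library, checks whether a page’s HTML carries that directive; `RobotsMetaParser` and `is_noindexed` are illustrative names, not part of any SEO tool.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() != "robots":
            return
        # The content attribute is a comma-separated list, e.g. "noindex, follow".
        for part in attr_map.get("content", "").split(","):
            directive = part.strip().lower()
            if directive:
                self.directives.add(directive)


def is_noindexed(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Made-up sample pages for illustration.
NOINDEX_PAGE = (
    '<html><head><meta name="robots" content="noindex, follow"></head>'
    "<body>Thin page</body></html>"
)
INDEXABLE_PAGE = "<html><head><title>Guide</title></head><body>In-depth guide</body></html>"

if __name__ == "__main__":
    print(is_noindexed(NOINDEX_PAGE))
    print(is_noindexed(INDEXABLE_PAGE))
```

This only inspects the HTML. The same directive can also be sent as an `X-Robots-Tag` HTTP response header, which this sketch does not check.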

In general, sites like HubPages with almost exclusively user-generated content will run into problems with quality if there aren’t good vetting processes in place.

More Google Quality Updates?

Just over a year later, in June 2016, reports began to surface that another Quality Update had occurred. Unlike the 2015 update, which Google eventually acknowledged, this one was never confirmed.

Sites that benefited most had unique, fresh, quality content. Those that suffered didn’t.

You can read more about this in: News Sites Benefitting From June’s Google Quality Update [STUDY].

Was that the final Quality Update? Maybe, maybe not.

Although Google’s still not talking, Glenn Gabe, president of GSQi, wrote in a post in August 2017 that “Google seems to be pushing quality updates almost monthly now (refreshing its quality algorithms).”

First, in March, there was the Fred update. It got a name (by accident), but many more unnamed quality updates followed.

Gabe noted that his data indicated that Google updates happened on May 17, June 25, July 10, and August 19, with results that appeared to be connected to past Quality Updates. In a follow-up post in October, he shared data documenting an incredibly volatile fall 2017.

The Far-Reaching Effects of Google’s Quality Updates

Like it or not, Google has a gigantic say in how websites are discovered.

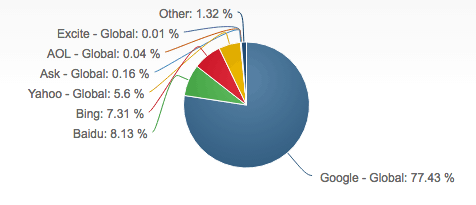

If you need a point of reference, look at Google’s utter dominance in the market, via Smart Insights:

Long story short: If you don’t abide by Google’s rules, you’re putting yourself at a major disadvantage.

Thankfully, Google’s rules are all about serving the user – the consumer, the customer. This is in line with what businesses should be trying to do, anyway. If serving users is your number one goal, you should be OK in the face of the many inevitable future updates.

Today, marketers and SEO professionals are a little more prepared for algorithm changes both big and small. They’re seasoned, if you will.

This is what Google does. You have to stay on your toes to keep your site rankings healthy.

No cheating, no shortcuts.

Image Credits

Featured Image: Shutterstock, modified by Danny Goodwin

Screenshots taken by Julia McCoy