Last June 21, Search Engine Journal’s Philippine team was privileged to attend SEO Summit 2014. In relation to this, we had the opportunity to interview one of the speakers, Internet Marketing Inc.’s VP for SEO, Mr. Benj Arriola.

You started your career as a chemist before becoming a member of the SEO industry. Will you share with our readers what made you decide to make this career move?

The path is a long one, and it was not straight from being a chemist to an SEO guy. As a chemist I did work in the industry as a quality analyst and chemist at SGS and also in the academe as a college instructor at De La Salle University while finishing my master’s degree.

During that time, anything computer related was more of a hobby. I was building computers for friends and started selling them, and that eventually turned into a computer shop business in 1997. I ran that as my first business, and it matured into a web design, web development, hosting and domain name registration company by 1999.

Competition in Metro Manila was getting tougher year after year, and new web designers and developers with extremely good talent kept coming out, so price points and delivery times were constantly decreasing to stay competitive, to the point that it no longer seemed practical. As a solution, many companies focused on gaining outsourced jobs from the US, which gave Philippine companies a higher price tag that was still low by US standards.

That direction brought me to the US in 2004. As I started to work for web design and development companies in the US, I found that all of them were doing SEO, and this is where I learned SEO myself, beyond what the companies I worked for taught me. I tested my SEO skills on my own sites, joined competitions, and accepted some freelance clients. When I saw I could be successful in this industry and was enjoying it at the same time, the transition came naturally.

I started learning SEO in 2004 as part of my web design and development responsibilities at work, but it was in 2006 that I decided to make the full transition and do SEO full-time. Even after I went into SEO full-time in 2006, the career continued to grow: you start as a specialist, gain more responsibilities, train people, become a manager, a director, etc.

Currently I am the VP for SEO at Internet Marketing Inc. and it all started as a chemist back in 1994. 🙂

During the SEO Summit, you shared that one way for startups to succeed in SEO is to become a thought leader of their products or services. Can you elaborate on this and share some tips on how companies can do it?

We all know that in SEO, links help in ranking since links are like votes of trust, authority, and credibility. This becomes a reflection of site quality, and it just makes sense that a quality site should rank well in search engines if Google intends to show the best results online.

Over time Google learned all the manipulative ways some abusive SEOs have used in the past, which essentially involve building their own links to their own websites in various ways. Such links are not like votes of trust, authority, and credibility from others and are more like voting for yourself. Google wants real, natural links rather than artificial ones: links that are true testaments from a fan, a follower, an advocate, someone who likes, loves, or adores your page. That is exactly what you have to gain for it to be effective in SEO.

Being a thought leader builds the audience of people who will view you as the main authority in your industry. Talking about your industry with useful or entertaining information for your target audience gives reasons for other websites within your industry to link to you. To increase the effectiveness of your thought leadership efforts, do not concentrate on gaining links at all.

Instead just be a thought leader, try your best to be an authority and if people start talking about you, mentioning you, quoting you, then you know what you are doing is effective and links will just come in naturally.

Sean Si dubbed you as “The Godfather of Philippine SEO Scene” at the SEO Summit. That said, what would you consider as your biggest accomplishment?

Thanks to Sean Si. That does sound flattering, and I understand why he would say it. I believe it is driven by my long history of interacting with the Filipino SEO community, always being willing to teach others who want to learn, and answering numerous questions from Filipino SEOs online since 2005. As for my biggest accomplishment, I feel it may not be related to the title, but since that is the question, that is what I will answer.

There are many contests I have won, there are several big brand names I have worked with, and there are also many conferences I have spoken at, but I don’t consider any of those as the biggest accomplishments.

In SEO, normally you have to be strong in one of these skill sets or knowledge bases: (1) Web development, server administration and all related technical knowledge, (2) content writing, (3) marketing knowledge, (4) web design and usability and (5) web analytics. Whatever you are unfamiliar with, you will have to learn to do better SEO.

So what I consider as my biggest accomplishment is to simply be able to be in this industry at a certain proficiency level that people recognize, considering that my initial career was a chemist, which is not really a career related to any of these. I do consider this as the biggest accomplishment, although it may still be no big deal to many other SEO professionals since most come from a variety of other careers that are totally unrelated to SEO.

With the number of algorithmic updates Google has rolled out, what do you think lies ahead for the SEO industry, especially in the Philippines?

I personally know people working in the Internet marketing industry that solely believe algorithm updates exist to give SEO people a hard time so they resort to Google Adwords and make a profit out of it. I lean more on the reasoning of Google’s desire to give users better search results. Because as long as the users of Google are happy, then it keeps their audience alive. And if their audience is there, then their advertising platform is more attractive to advertisers.

In the past, updates were made to improve the quality of search results because they had to be refined as the algorithm got better at understanding what results are more valuable to the user. But Google is a mathematical machine with a very complex way of quantifying quality into measurable metrics so it can rank websites in a certain way. Unfortunately some SEOs, instead of focusing on creating great quality websites valuable to users that should naturally rank well, focus on the metrics Google uses to quantify quality, which are referred to as ranking factors. SEOs were able to achieve great ranking back then regardless of the quality of the website, whether users liked it or not.

So what’s the result when this happens? Naturally, if a business like Google is dependent on keeping users happy so they continue to use Google, it will definitely have to improve the search results. The more it maintains its user base, the easier it is to sell its Adwords PPC program. So Google algorithm updates come out all the time. Almost every month there is something new.

What lies ahead for the SEO industry would be even more updates. As long as there are SEOs that are focused on creating great quality content on awesome websites, there is probably an equal number of SEOs focused on the ranking factors that they can use to game the system. This often leads to bad quality search results, which Google will observe and then find ways to clean up automatically in the form of an algorithm update.

As for the Philippines, everyone seems to be catching up well, but there are still a few people I get to talk with that are stuck in the old ways. Some are very reliant on spammy tools, and we cannot blame them all since they got their knowledge from another person or were instructed at their job because that’s how the whole company does it. Eventually, companies and individuals stuck in the old ways of SEO will see the results of their work, and should naturally change if they want to stay in the industry for a long time.

It’s been two decades since the Philippines made its first connection to the Internet. In your opinion, how much has local SEO changed over the past years?

I’ve been on the Internet since 1991 through school and got my first dial-up ISP connection for home in 1994, when I signed up for Industrial Research Foundation (IRF), which is a division of Ph Net, a part of the Philippines’ Department of Science and Technology (DOST), before many of the commercial ISPs came out. I have done SEO since 2004, when I moved over to the US. So I have been a part of these two decades you are talking about. Now you are making me feel old. LOL!

I have been interacting with a group of SEOs in the Philippines since 2005 and what I have observed that has changed over the past years in the Philippines local SEO scene are:

Increase of Group Organizations and Networking Events

The group SEO Philippines, led by Marc Macalua, grew and disappeared. The SEO Organization Philippines group, which started with some of the core members of SEO Philippines, continues to grow. Regional hubs in Iloilo and Pampanga also exist. Growing populations of SEOs are also found in Cebu, Cagayan de Oro, Bataan, Bicol and Bacolod.

Emergence of New Thought Leaders

Some of you might know the older members of SEO Organization Philippines like Ed Pudol, Zaldy Dalisay, Kim Tyrone Agapito, Noel Bautista, and Cell Jacela. Then suddenly Jason Acidre and Sean Si were creating their own buzz online with some international recognition. Then came the next wave of newer thought leaders like Jayson Bagio, Mark Acsay, and Gary Viray, and there are more out there. Sorry if I left someone out who may be worth the mention!

Increase of Foreign SEOs Investing in the Filipino Talent

Back in 2005, it was just a few main players. Probably the most visible people to me were Hans Koch and the late Michael Turner. These days there are so many people from the US and Australia that put up their own offices in the Philippines, building their own SEO teams. Outsourcing with just plain online communication is challenging. Sometimes the only way to make it better is to set up an office in the Philippines and move over there to manage it.

Decrease of Spammy Methods

Unfortunately I cannot say the disappearance of spammy methods. There are still some out there who think of SEO as software: just use things like SENuke, Scrapebox, XRumer, Digixmas. (I know some people may debate that some of the tools I mentioned are not always used for spammy methods, like Scrapebox. True, and I am aware of that, but I am referring to people who use them in a spammy way.) There are some who are reliant on low quality guest blog posts that end up on low quality blogs. But even if these people still exist, they have definitely decreased.

Bonus Question: I noticed on your Instagram account that you travel often. What is the best place you’ve ever been to?

I’m a bad person to be asked this question. I like going to new places I have never been to just to get them off the bucket list, but I am not the travelling type that must go to certain places, so I do not necessarily put ranking factors on the places I have visited.

Secondly, I am bad at giving answers to questions that have the words “what is my best…” or “what is my favorite…” because I often do not know what my best or favorite is. I just know what I like and don’t like, and there will be several answers to that. The words best and favorite are superlatives where I have to narrow it down to one choice. But I will still try to answer this question.

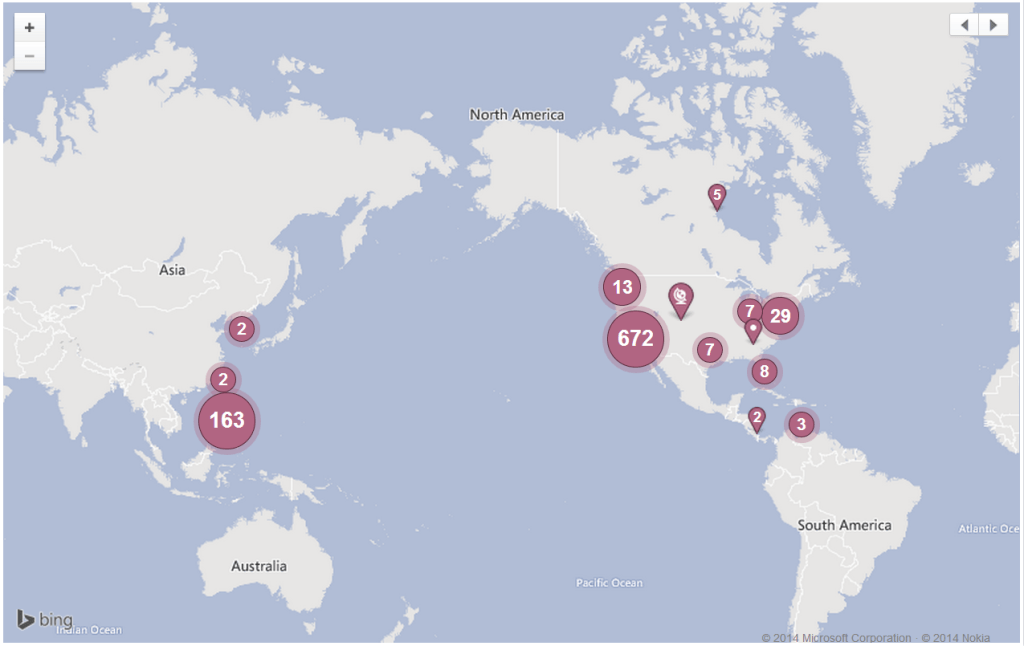

Since I do check in often on Foursquare, Yelp, Facebook, etc. I decided to look at my Facebook map of places I visited to remind me where I’ve been:

Ok, the best is probably Aruba. It is a 20-mile-long, 5-mile-wide island. The weather is similar to the Philippines, but almost every area is near the sea so you get the nice cool breeze, and the water is not too rough. Sunsets and sunrises are awesome. There is a good amount of activities to do. It is more relaxing, with less hustle and bustle, and it is just a few hours to fly into from Florida. Of course that may change once I get to Europe, which I have never been to, as well as anywhere in the Middle East, Australia, South America, and Africa.

Featured Image: Microstock Man via Shutterstock