On August 13, 2019, Rand Fishkin, CEO of SparkToro and founder of Moz, published a blog post titled “Less than Half of Google Searches Now Result in a Click.”

Fishkin summed up the findings as proof that:

“Google’s ongoing attempts to answer more searches without a click to any results or a click to Google’s own properties are both proving successful. As a result, zero-click searches, and clicks that bring searchers to a Google-owned site keep rising.”

While the article also quotes an earlier study that showed that almost 6% of Google clicks went to a Google-owned property (which Fishkin labeled a “huge portion”), most people got caught up in the “50% of queries resulted in zero clicks” part.

According to a phrase match search on Google, the “less than half” statistic went on to get quoted over 400,000 times.

Heck, I even mentioned it once in my article about the legality of featured snippets.

On March 22, 2021, Fishkin updated his study, now claiming that:

“From January to December 2020, 64.82% of searches on Google (desktop and mobile combined) ended in the search results without clicking to another web property.”

While some SEO professionals questioned the new data, thousands more search engine industry publications and blogs quoted the study for weeks.

The only problem?

The math doesn’t add up.

This Metric Has More Blind Spots Than A Buick

In July 2020, I published an article with the assistance of a statistician about the abysmal state of “studies” in the SEO business.

The statistician highlighted the 2019 SparkToro zero-click study in detail for an issue known as “availability bias.”

Roger Montti covered this issue shortly after the 2021 SparkToro study was released, so I won’t go into too much detail here.

However, the problem is that because the data for both studies was collected for other purposes (e.g., from users of Avast Antivirus or the SimilarWeb dataset), it’s not a representative sample of Google users.

Additionally, the 2019 data from JumpShot was composed of “millions of Android-powered devices and millions of PCs,” but excluded all iOS-based devices. Once again, this shows that the data is not a fair and random sampling of Google users.

This issue alone should kick these studies to the proverbial curb.
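To see why leaving out an entire device class matters, here is a minimal sketch of the problem. Every number in it is hypothetical and chosen only for illustration; none of it comes from JumpShot, SimilarWeb, or SparkToro.

```python
# Hypothetical illustration of a non-representative panel. None of these numbers are real.
# population_share: assumed share of all Google searches made on each platform.
# zero_click_rate:  assumed share of that platform's searches that end without a click.
platforms = {
    "android": {"population_share": 0.40, "zero_click_rate": 0.60},
    "desktop": {"population_share": 0.35, "zero_click_rate": 0.35},
    "ios":     {"population_share": 0.25, "zero_click_rate": 0.30},
}


def weighted_zero_click_rate(platforms, included):
    """Zero-click rate across only the platforms in `included`, weighted by share."""
    total_share = sum(platforms[name]["population_share"] for name in included)
    weighted = sum(
        platforms[name]["population_share"] * platforms[name]["zero_click_rate"]
        for name in included
    )
    return weighted / total_share


# What the full population would show versus a panel that cannot see iOS.
true_rate = weighted_zero_click_rate(platforms, ["android", "desktop", "ios"])
panel_rate = weighted_zero_click_rate(platforms, ["android", "desktop"])

print(f"All platforms:     {true_rate:.1%}")
print(f"Panel without iOS: {panel_rate:.1%}")
```

With these made-up inputs, the panel overstates the zero-click rate. Give iOS users a higher zero-click rate and the panel would understate it instead. The direction and size of the error depend entirely on how the excluded group behaves, which is exactly what the panel cannot tell us.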

However, the more significant problem is the number of “blind spots” (i.e., details of the data we can’t see) in the slice of the pie that represents the so-called “zero-clicks” occurring — or not — on Google.

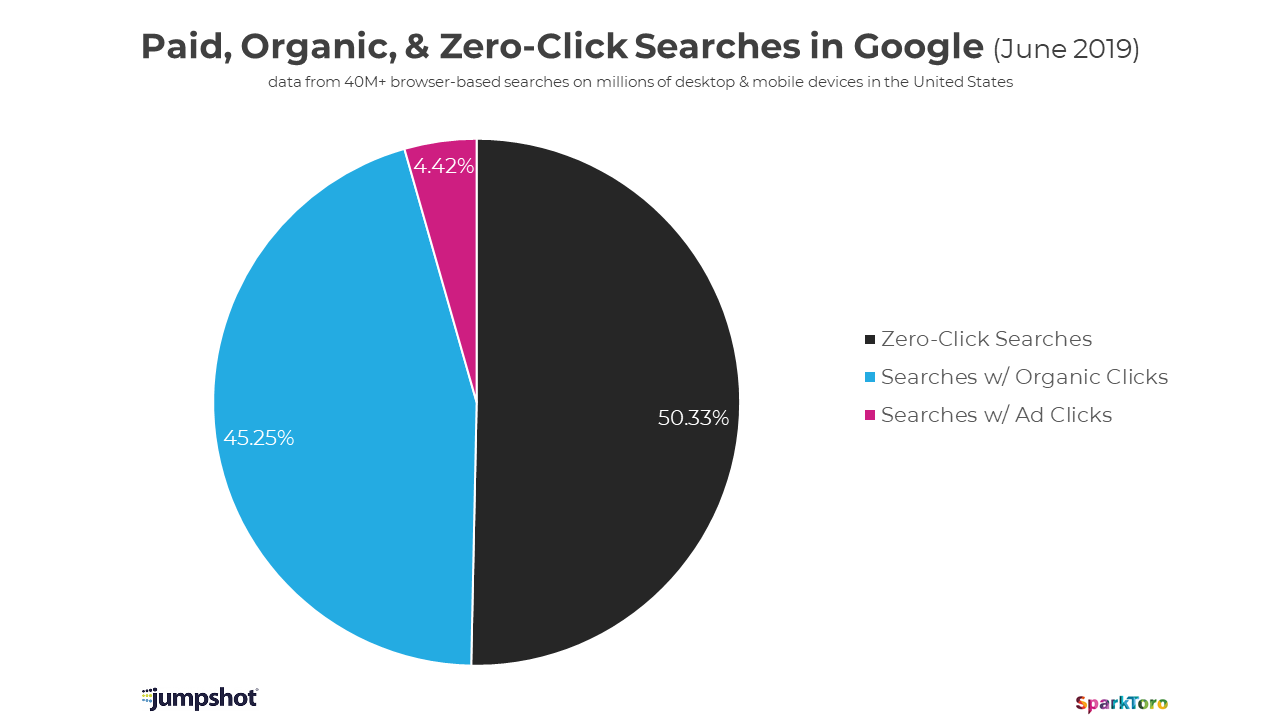

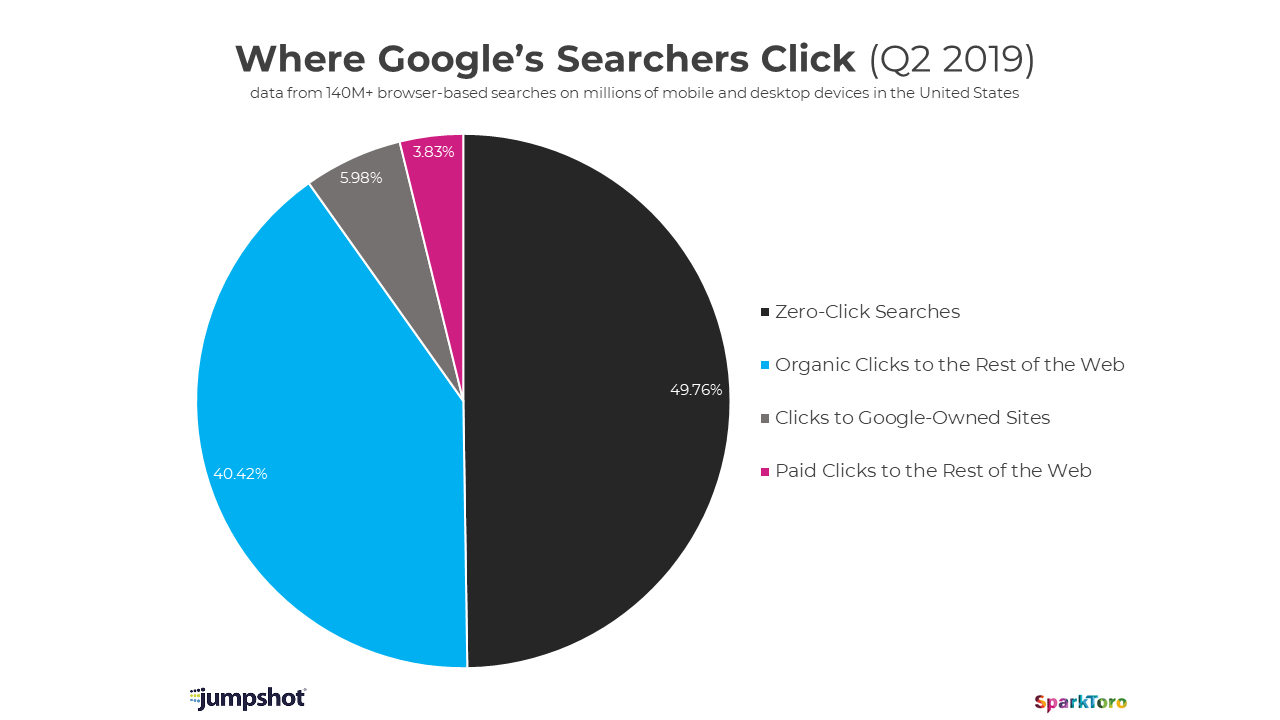

Before we dig into the data, let’s look at the original graphs presented in the 2019 SparkToro study:

I believe the point the SparkToro study was trying to make is that by the end of Q2 (June) 2019, the “zero-click” number had crossed 50%.

However, the “Clicks to Google-Owned Sites” slice is missing from this graph, so it’s a little hard to compare.

Assuming that “Organic Clicks to Rest of the Web” and “Searches w/ Organic Clicks” represent the same information and looking at the difference in the numbers, it appears the “Clicks to Google-Owned Sites” slice was absorbed into the “Searches w/ Organic Clicks” slice (we’ll come back to this later).

More importantly, what’s in that “Zero-Click Searches” slice?

There were some caveats added to the original 2019 study some time after the original post that can help with this question.

According to the study, “zero-click searches” include:

- “Searches that end because a user was frustrated and couldn’t find an answer.”

- “Searches that are answered by the results” (i.e., Featured Snippets/Knowledge Graph).

- “Searches that end because something interrupted the searcher, or any other reason for a cessation of activity after the query.”

- “A click/action that takes a searcher out of the browser (for example, opening the phone app for a click-to-call, or the Google Maps app for driving directions).”

Additionally, it should be noted that the zero-click definition:

- Does not include “clicks to Google-owned sites,” which is listed separately as 5.98%.

- Does not include “searches that are answered by a device’s audio (like Alexa, Siri, Google Assistant, etc. speaking an answer),” although “voice searches that show a screen of results are part of this analysis.”

Let’s look at each of these caveats a little closer using data available in other studies.

Before someone on Twitter points it out — yes, I’m quite aware of the irony of referencing other studies using the same biased data sources, but that’s the point.

This data was available in prior studies but was omitted from the SparkToro study for reasons not provided.

- “Frustrated User” Searches: An earlier study published in March 2017 (written by Fishkin while he was still at Moz and also using JumpShot data) stated that the “percent of queries on Google result in the searcher changing their search terms without clicking any results” was “a full 18% of searches.”

- Answered by the results (i.e., Featured Snippets or Knowledge Graph): “According to Ahrefs’ data, ~12.29% of search queries have featured snippets in their search results.”

This stat is from a different data set, but I present it to illustrate a possibility of how much of that “over 50%” could be Featured Snippet/Knowledge Graph search results.

According to the March 2017 Moz study, links in the Knowledge Graph get “~0.5% of clicks” but of course, those go on the “organic clicks” side of the graph.

- Click-To-Call Searches: An older survey of 3,000 mobile searchers by Google in 2013 revealed that 70% of mobile searchers click-to-call a business directly from Google’s search results.

This data doesn’t align with how the JumpShot data was collected. However, again, I present it to illustrate that a substantial percentage of the so-called “zero-click” searches are getting a click.

- Google Maps Searches: According to the March 2017 Moz study, “0.9% of Google search clicks” go to Google Maps.

- Other Apps and “Interrupted Searches”: Unfortunately, I was not able to find any specific data on these types of searches in my research for this article.

What Does This Clarification of the Data Tell Us?

Think about it this way: the “Frustrated User” and the “Answered by the Results” users would most likely never click on a result anyway.

This is because the answer just wasn’t there, or perhaps because the response was so simple that a visit to a webpage to obtain that answer was unnecessary.

One could make an argument that the “Interrupted Searches” category belongs here, as well, since they never even had the opportunity for a click.

So, what we have here are really “Never Clickers” rather than “Zero-Clickers,” and those searchers could easily account for more than 20 percentage points of that 50.33% slice.

Then, you have an unknown percentage of users in the remaining 20 to 30 percentage points of that slice who are clicking on a result. However, they weren’t counted because of the technology used to collect the data, specifically the Click-To-Call, Google Maps, and Other Apps searches.

These searches don’t belong in that slice at all because clicks are actually occurring.

In either case, Google is not “keeping the click for themselves” since that number is represented elsewhere. The clicks simply never existed in the first place.
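The back-of-the-envelope arithmetic above can be sketched as follows. It treats the 18% and 12.29% figures as if they applied to the same pool of searches as the 50.33% slice. As noted earlier, they do not: they come from different data sets and the two groups may overlap. The result is a rough illustration, not a measurement.

```python
# Rough sketch of the "Never Clickers" arithmetic. The figures are the ones quoted
# in this article; adding them together is an assumption, because they come from
# different data sets and the two groups may overlap.
ZERO_CLICK_SLICE = 50.33    # % of searches labeled "zero-click" (2019 SparkToro study)
FRUSTRATED = 18.0           # % of queries refined without a click (March 2017 Moz study)
FEATURED_SNIPPETS = 12.29   # % of queries showing a featured snippet (Ahrefs)

# Rough ceiling, assuming no overlap between the groups and assuming every
# featured-snippet query ends without a click.
never_clickers_upper = FRUSTRATED + FEATURED_SNIPPETS

# Whatever is left holds interrupted searches plus clicks the panel could not see
# (click-to-call, Google Maps, other apps).
remainder = ZERO_CLICK_SLICE - never_clickers_upper

print(f"Possible 'Never Clickers': up to {never_clickers_upper:.2f} points")
print(f"Left for everything else:  {remainder:.2f} points")
```

Under these assumptions, the remainder lands at the low end of the 20 to 30 point range mentioned above. If fewer featured-snippet searches end without a click, the “Never Clickers” share shrinks and the remainder grows toward the high end.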

“Some folks have pointed out that ‘zero-click’ is slightly misleading terminology, as a search ending with a click within the Google SERP itself falls into this grouping,” Fishkin states early in the 2021 study. “The terminology seems to have stuck, so instead I’m making the distinction clear.”

However, as we can see from above, it’s much more than “slightly misleading.”

It’s just incorrect.

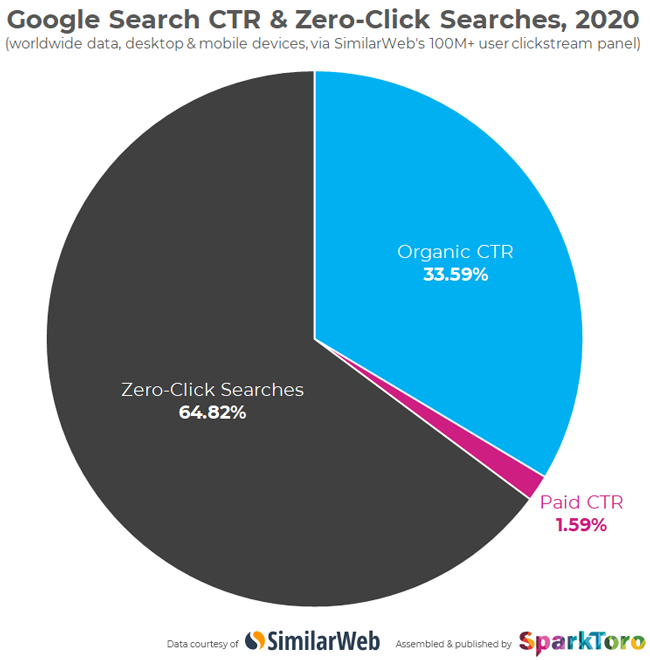

For the 2021 study, SparkToro switched to SimilarWeb data and, for some reason, switched to click-through rate (maybe the graph lists both):

Here, the graph states that less than two-thirds of searches are “zero-click.”

However, Fishkin writes that the “number is likely undercounting some mobile and nearly all voice searches” and therefore is most likely over two-thirds of all searches worldwide.

This is how Fishkin was able to use that figure in his headline.

However, compared with the 2019 study, there is even less information about what is included in the “zero-click” slice. I think we can safely assume that it has the same problems as the JumpShot data.

In conversations about this new study with a longtime actual working SEO professional, Bill Slawski shared some concerns over the quality of the data. Bill has written about Google patents for over 15 years with nothing but positive feedback, including from Google’s Matt Cutts and Paul Haahr.

Based on his looking through the company’s web page on its data sources, Slawski said:

“[SimilarWeb] provides some information about where [the data] is coming from, but nothing that actually indicates how they collect data about Google searches. They say it comes in part from 4.7 million web apps, but never name what those apps are specifically.”

Elsewhere on SimilarWeb’s data sources page, it claims that it collects data from various Google Analytics accounts. However, I can guarantee that Google’s own Google Analytics account isn’t one of them.

Slawski added:

“Digital ink is cheap. It costs next to nothing to list the steps they may have taken to collect this data. The failure to do so indicates to me that something is being hidden.”

What Is the Actual Point of the SparkToro Studies?

In both the 2019 and 2021 studies, Fishkin makes a case about Google’s monopolistic practices.

However, if that was the actual point of the two studies, the number that would support it (clicks that go to Google properties) is not part of the zero-click figure in the 2019 study.

It is largely ignored in the 2019 post and completely omitted from the 2021 post.

That “clicks to Google properties” number is either a separate slice equaling 5.98% for Q2 of 2019 or lumped in with the rest of the organic searches in the June 2019 graph.

The clicks to Google properties number was not broken out at all in the 2021 study. If that study’s definitions follow the same rules as before, it still wouldn’t be part of the zero-click total — but we actually don’t know from the SparkToro post.

(Note: A follow-up presentation by SimilarWeb with more data was scheduled for March 31; however, the link to that event from the SparkToro post is not working as of this writing, and the SimilarWeb website does not have any listings for the event.)

Now, if 5.98% is a massive number for you, that’s a whole different story. But it isn’t part of the zero-click story at all.

Given that this is Google’s website and Google provides the organic search listings to millions of websites for free, the fact that they might be keeping less than 6% of those searches for their properties doesn’t bother me so much.

Why on Earth Do We Keep Quoting These Studies?

The 2021 study caused Google to issue a response that stated that more clicks than ever are going to organic search results.

While this should be tempered by the fact that the use of search engines increased during the 2020-2021 pandemic, it supports the case that the data presented is simply untrue.

Yet, despite all of these blind spots and sloppy data, hundreds of thousands of blog posts have repeated that “over 50%” and “over two-thirds” of searches get zero clicks.

The lone holdout, Montti, presented his case shortly after Google’s official statement was released.

While other publications covered Google’s response, barely any of them used the opportunity to point out any issues with the original study itself. In fact, some took the opportunity to pile on Google.

I don’t totally blame Fishkin, Moz, or SparkToro for this one. My fellow industry writers should take on some of the blame.

It’s easy to just go with something that sounds like it’s right because you feel like Google’s search results aren’t what they used to be.

But seriously, take a minute to think about this stuff before starting another post with, “We all know that fewer than 50% of searches get clicks.”

We don’t know that at all because no one has actually proven that statement so far.

Conclusion

With data like this, I always think back to a monologue from the first “Jurassic Park” movie.

Jeff Goldblum’s character – Ian Malcolm – confronts John Hammond about how “your scientists were so preoccupied with whether or not they could [bring back dinosaurs] that they didn’t stop to think if they should,” but is completely ignored.

In this instance, I think Fishkin was so excited about presenting what he thought was compelling evidence of wrongdoing on Google’s part that he didn’t stop to think if he should.

Year after year, I keep waiting for companies like SparkToro and others to have their Alec Guinness in “The Bridge on the River Kwai” moment (“What have I done?”), where they realize the damage they are doing.

But it never seems to arrive.

Image Credits

All screenshots taken by author, April 2021