At Google I/O 2021, Google announced a new technology called MUM (Multitask Unified Model) that it will use internally to help its ranking systems better understand language.

Since the announcement, there has been much discussion about whether or when MUM would become a ranking factor.

What Is MUM?

Dubbed “a new AI milestone for understanding information,” MUM is designed to make it easier for Google to answer complex needs in search.

Google promises MUM will be 1,000 times more powerful than its NLP transfer learning predecessor, BERT.

MUM uses a model called T5, the Text-To-Text Transfer Transformer, to reframe NLP tasks into a unified text-to-text format and develop a more comprehensive understanding of knowledge and information.

According to Google, MUM could be applied to document summarization, question answering, and classification tasks such as sentiment analysis.
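
To make the "unified text-to-text format" less abstract, here is a minimal Python sketch of how different tasks can be cast as plain input strings. The task prefixes follow the conventions used in the publicly released T5 work; the function name `to_text_to_text` and the task labels are hypothetical, and nothing here reflects how MUM itself is built.

```python
# Illustrative sketch only: T5-style task prefixes, not MUM's internals.
# The function name and task labels are made up for this example.

def to_text_to_text(task: str, **fields: str) -> str:
    """Turn an NLP task into a single input string for a text-to-text model."""
    if task == "summarization":
        return f"summarize: {fields['document']}"
    if task == "question_answering":
        return f"question: {fields['question']} context: {fields['context']}"
    if task == "sentiment":
        # T5 frames sentiment classification (SST-2) as text in, text out;
        # the expected answer is a word such as "positive" or "negative".
        return f"sst2 sentence: {fields['sentence']}"
    raise ValueError(f"unknown task: {task}")


if __name__ == "__main__":
    print(to_text_to_text("summarization", document="Google announced MUM at I/O 2021."))
    print(to_text_to_text(
        "question_answering",
        question="When was MUM announced?",
        context="Google announced MUM at I/O 2021.",
    ))
    print(to_text_to_text("sentiment", sentence="This update is a big improvement."))
```

The point of the sketch is that every task, whether summarization, question answering, or classification, becomes the same kind of problem: text goes in and text comes out, so one model can be trained on all of them.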

MUM is a significant priority inside the Googleplex, so it should be on your radar.

The Claim: MUM As A Ranking Factor

When Google first revealed the news about MUM, many who read it naturally wondered how it might impact search rankings (especially their own).

Google makes thousands of updates to its ranking algorithms each year, and while the vast majority go unnoticed, some are impactful.

BERT is one such example. It rolled out worldwide in 2019, and Google itself hailed it as the most significant update in five years.

And sure enough, BERT impacted about 10% of search queries.

RankBrain, which rolled out in the spring of 2015, is another example of an algorithmic update that substantially impacted the SERPs.

Now that Google is talking about MUM, it’s clear that SEO professionals and the clients they serve should take note.

Roger Montti recently wrote about a patent he believes could provide more insight into MUM’s inner workings. That makes for an interesting read if you want to peek at what may be under the hood.

For now, let’s consider whether MUM is a ranking factor.

The Evidence Against MUM As A Ranking Factor

In his May 2021 introduction to MUM, Pandu Nayak, Google fellow and vice president of Search, made it clear that MUM technology isn’t yet in play:

“Today’s search engines aren’t quite sophisticated enough to answer the way an expert would. But with a new technology called Multitask Unified Model, or MUM, we’re getting closer to helping you with these types of complex needs. So in the future, you’ll need fewer searches to get things done.”

At the time, the only timeline Google gave for when MUM-powered features and updates would go live was “in the coming months and years.”

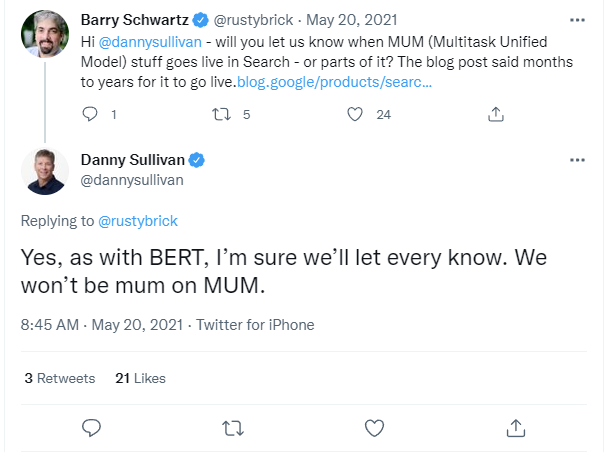

When asked whether the industry would get a heads up when MUM goes live in search, Google Search Liaison Danny Sullivan said yes.

The Evidence For MUM As A Ranking Factor

When RankBrain rolled out, it wasn’t announced until six months afterward. And most updates aren’t announced or confirmed at all.

However, Google has become better at sharing impactful updates before they happen.

For example, BERT was first announced in November 2018, rolled out for English-language queries in October 2019, and rolled out worldwide later that year in December.

We had even more time to prepare for the Page Experience signal and Core Web Vitals. Google announced them over a year before the eventual rollout in June 2021.

Google has already said MUM is coming and will be a big deal.

But could MUM be responsible for the rankings drop that many sites experienced in the spring and summer of 2021?

Implementing MUM To Improve Search Results

As promised, Google announced new and potential MUM applications publicly.

In June 2021, Google described the first application of MUM and how it improved search results for vaccine information.

“With MUM, we were able to identify over 800 variations of vaccine names in more than 50 languages in a matter of seconds. After validating MUM’s findings, we applied them to Google Search so that people could find timely, high-quality information about COVID-19 vaccines worldwide.”

In September 2021, Google shared ways that it might use MUM in the future, including new ways to search with visuals and text – as well as a redesigned search page to make it more natural and intuitive.

In February 2022, Google offered insight into how RankBrain, neural matching, BERT, and MUM help Search understand information. The post noted:

“While we’re still in the early days of tapping into MUM’s potential, we’ve already used it to improve searches for COVID-19 vaccine information, and we’ll offer more intuitive ways to search using a combination of both text and images in Google Lens in the coming months. These are very specialized applications — so MUM is not currently used to help rank and improve the quality of search results like RankBrain, neural matching and BERT systems do.”

In March 2022, Google posted an update about how it would apply MUM to searches related to a personal crisis.

“Now, using our latest AI model, MUM, we can automatically and more accurately detect a wider range of personal crisis searches. MUM can better understand the intent behind people’s questions to detect when a person is in need, which helps us more reliably show trustworthy and actionable information at the right time. We’ll start using MUM to make these improvements in the coming weeks.”

Later in the post, Google continued describing how MUM could improve search results.

“MUM can transfer knowledge across the 75 languages it’s trained on, which can help us scale safety protections worldwide much more efficiently. When we train one MUM model to perform a task — like classifying the nature of a query — it learns to do it in all the languages it knows.

For example, we use AI to reduce unhelpful and sometimes dangerous spam pages in your search results. In the coming months, we’ll use MUM to improve the quality of our spam protections and expand to languages where we have very little training data. We’ll also be able to better detect personal crisis queries all over the world, working with trusted local partners to show actionable information in several more countries.”

Our Verdict: MUM Could Be A Ranking Factor

While Google doesn’t use MUM as a search ranking signal yet, it could well do so in the future.

In multiple posts about MUM on The Keyword blog, Nayak promises that MUM will undergo the same rigorous testing as BERT before Google applies it in Search.

Featured Image: Paulo Bobita/Search Engine Journal