AI-powered search engines have made significant strides in the past year, with two notable contenders shaking up the AI search engine race. The following seven AI search engines offer unique user experiences and the potential to send significant traffic to websites.

1. ChatGPT Search

https://chatgpt.com/?hints=search

OpenAI launched ChatGPT Search on October 31, 2024, after three months of testing a prototype known as SearchGPT. ChatGPT Search is not primarily a standalone search engine, although users can use it that way.

ChatGPT is currently available as a desktop app for macOS and Windows and as mobile apps for Android and iOS. ChatGPT Search is available through all of these interfaces, including advanced voice mode.

ChatGPT Search is accessible in the following six ways:

- ChatGPT Search Standalone Page

- Within ChatGPT

- ChatGPT for Desktop

- ChatGPT for Apple iOS

- ChatGPT for Android

- ChatGPT Search Chrome Browser Extension

The focus of ChatGPT Search is conversational search with a strong emphasis on contextual understanding of search phrases, enabling it to respond to queries related to geographic location and recent events.

During an OpenAI AMA on Reddit, the company revealed that it uses a variety of services to produce search results, including Bing.

They said:

“We use a set of services and Bing is an important one.”

ChatGPT Search was upgraded in December 2024 with faster performance, a better mobile experience, and new features, including map integrations.

There is also a new voice mode feature that lets users access real-time web information conversationally. Users can now access information on the go when they need it, such as finding local events or translating phrases.

ChatGPT Search And SEO

A positive feature of ChatGPT Search is that it shows prominent links to websites. There is no advertising clutter, and the summaries are generally not excessive. A search for “What is the best reviewed television set that’s around 60 inches?” returns a summary of reasonable length, followed by attractive excerpts and links to five websites. The results accurately reflect the question, which asks for the best-reviewed television set “around 60 inches.” That is an excellent interpretation of the query’s context.

Screenshot Of ChatGPT Search Results

These highly relevant search results should send engaged visitors to websites, and those are the visitors that matter most. Some may grumble that ChatGPT Search links to only five websites. Even so, those results are arguably better than organic search results have ever been, because of their relevance and the attractive presentation of website data.

Showing only five results makes ranking in ChatGPT Search more competitive. Realistically, though, the top five search results have always been the only ones that mattered.

ChatGPT Voice Search

ChatGPT Search is available as a voice search, which makes it more useful and in time may contribute to making ChatGPT a daily part of how users search for information.

“You can search while having a voice conversation with ChatGPT.

During a voice chat, you can ask ChatGPT to search for something and ChatGPT will provide you fast, timely answers by searching the web.

Please note that your searching with Voice is also subject to ChatGPT usage limits.”

Unlike most of the other AI search engines on this list, ChatGPT Search is embedded within a workflow (ChatGPT), which makes it easier for users to adopt. That same workflow, plus search, is also available as a desktop app and on mobile devices. ChatGPT can even be reached through a toll-free 1-800 number from any telephone, analog or digital, as well as through WhatsApp. These multiple forms of access differentiate it from traditional search engines. They also show OpenAI’s strategy of making ChatGPT Search available anywhere and at any time, even when a user doesn’t have access to a computing device.

ChatGPT Search is available globally to all logged-in users, including those on the free plan, which puts it within reach of even more people. The generous search results should (arguably) make publishers and SEOs happy. For that reason, ChatGPT Search ranks as the number one AI search engine for 2025.

2. Grok

Grok is an AI chatbot created by xAI that can search the web and answer questions about current events, citing a combination of posts on X and web pages. It also offers image generation through its recently released Aurora model. Grok’s web citation features make it a standout for publishers and SEOs because they offer the chance to drive traffic through a clean user interface.

Grok is accessible through X and on a standalone web page. The main search page is clean and free of advertising.

Screenshot Of Grok Search Interface

An example search for the current weather in San Francisco shows search results that cite 15 posts on X and 25 web pages.

Screenshot Of Grok Answer About Weather

For this weather-related query, the X post citations weren’t particularly relevant, but the web citations were perfect. Grok cites Weather Underground, AccuWeather, and Weather.com. It then gets granular, citing local television stations KRON4 and ABC7News, as well as other websites.

SEO And Grok

A search query about television set reviews returns a summary along with a graphical user interface (GUI) element that links to up to 25 web pages containing the answer. This provides a clean user experience. A user who wants to visit a website just has to click the Web Pages GUI, an extra step that other AI search engines don’t require.

But the tradeoff is that the links to websites are clear and elegantly simple without any advertising or clutter, exactly the way SEOs and publishers love search results to be.

Screenshot Of Grok Link GUI

Above is the summary answering the question. Below is a screenshot of the Grok search engine results page (SERP), which shows title links and meta descriptions for up to 25 web pages, with no advertising or anything else in the way.

Screenshot Of Grok SERPs

The above screenshot shows five traditional organic search results. Scrolling reveals an additional 20 links, for a total of 25 links to websites. A Grok user must be motivated to read a web page about the answer. Once they click to show the search results, Grok displays uncluttered, traditional search results. These kinds of results are good for users and (arguably) better for publishers and SEOs than anything you’ll get from traditional search engines, earning Grok the number two ranking among AI search engines for 2025.

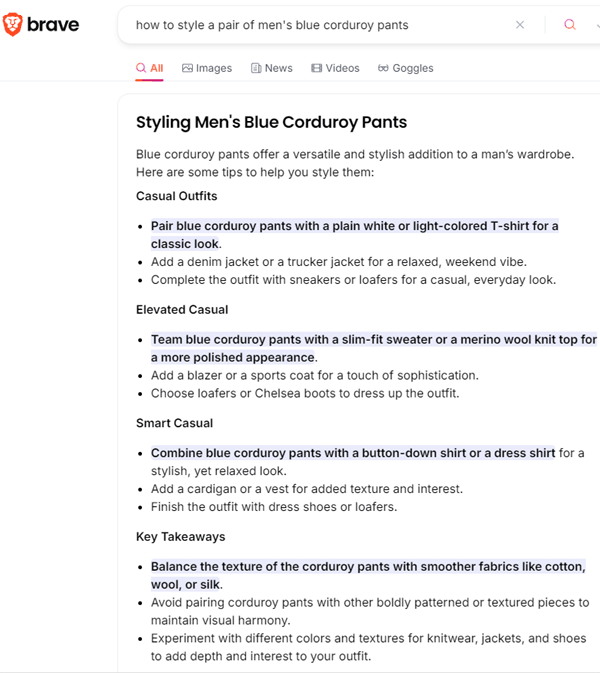

3. Brave AI Search

Brave is a privacy-first AI search engine that offers a clean and uncluttered user interface. Brave is a company committed to user privacy, and it also offers a privacy-first browser. Using the AI search engine is easy: go to the search engine and type the query. A drop-down navigation prompt appears that lets the user choose an AI search result, which offers a useful and comprehensive summary of the answer.

Screenshot Of Brave AI Search Results

Brave AI Technology

Brave uses two open source large language models (LLMs): Meta’s Llama 3 and Mistral AI’s Mixtral. The Brave Browser also offers the ability to toggle to Claude Instant from Anthropic. The open source LLMs are self-hosted. All user IP information is blocked from the LLM infrastructure, and all search query information is erased immediately after a chat session ends.

When using the Brave Browser’s built-in AI search feature, a user can choose an external LLM from a partner like Anthropic. In that case, Brave automatically shields personally identifiable information, such as the user’s IP address, from the third party. Search query data is removed from the partner’s servers after 30 days. Brave currently offers Claude 3 models by Anthropic (both Haiku and Sonnet).

Brave AI search is perfect for users who value their privacy and want a top-level AI search experience. Brave AI is easy to use and offers fantastic search results. It also offers the peace of mind that comes from knowing you’re not being tracked.

4. Andi Search

Andi Search is a privacy-first AI search engine that offers an ad-free search experience. A recent benchmark called Talc AI SearchBench ranked Andi Search over You.com, Google Gemini, ChatGPT, and Perplexity.

The rankings and correctness scores:

- Andi Search 87%

- You.com 80%

- Google Gemini 71%

- OpenAI ChatGPT 62%

- Perplexity 59%

There are three things that make Andi stand out:

- Andi is a true AI search engine throughout the entire search results page, not just at the top of the page as with Bing and Google’s AI Overviews.

- Images, summaries, and options are offered in a way that makes sense contextually.

- All on-page elements work together to communicate the information users are seeking.

Andi Is More Than A Text-Based Search Engine

Humans are highly visual, and Andi does a good job of presenting information with both text and images, which makes answers easy to comprehend.

Screenshot Of Andi Search Results

Andi Search And Privacy

Andi is a privacy-first AI company. It doesn’t store cookies or share data, and it makes no user information available to any of its employees.

It even blocks Google’s FLoC tracking technology so that Google can’t follow you onto Andi.

What Is Andi AI Search?

Andi is a factually grounded AI search engine that was purposely created to offer trustworthy answers while avoiding the hallucinations common to GPT-based apps. It uses large language models to understand the question being asked, then fetches the web sources that contain the correct answers. This approach is friendly to websites because it doesn’t try to hold on to users. Instead, it shows them where to find answers.

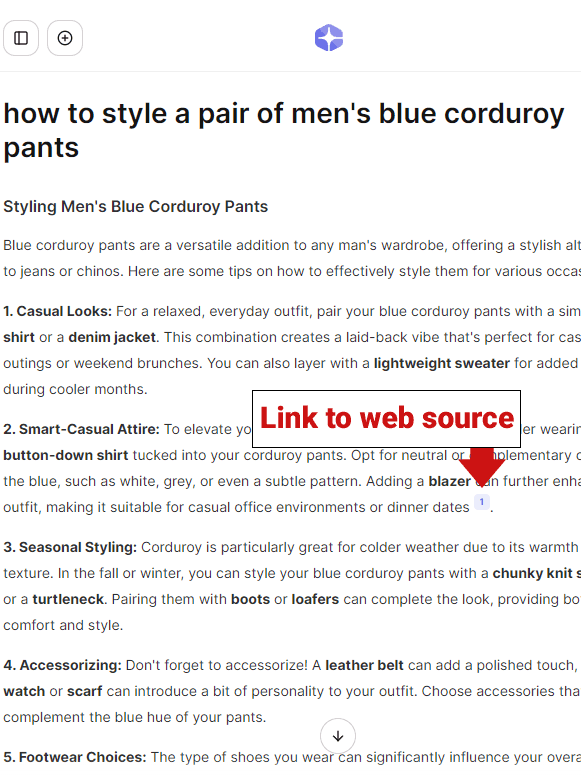

A search query of “how to style a pair of men’s blue corduroy pants” returns answers from the web, with images. A useful feature at the top of the page lets users toggle between web results, images, and videos.

The business plan is to charge for premium versions in the future, but for now it’s completely free to use.

Summary Of Andi Search

Andi truly rethinks how today’s search engines should function, and it encourages users to rediscover the best that the web has to offer. The user interface is a bit cluttered, and the search box sits at the bottom of the page rather than at the top where users expect it. This causes a little confusion on a first visit. Although it’s clearly still in a testing phase, Andi Search nonetheless offers a superior AI search experience with a high level of accuracy and usefulness.

5. Perplexity AI

Perplexity is a natural language, conversational search engine that combines traditional search with LLMs. It is built on Azure infrastructure and relies on GPT-3.

There are free and Pro versions. The free version offers unlimited quick searches but is limited to five “Pro Searches” per day. Free users can create a user profile so the search engine can personalize their results.

The Pro version ($20/month) includes:

- 300 Pro Searches per day

- A choice of AI models, including GPT-4o, Claude 3, and Llama 3.1

- Unlimited file analysis

- Access to Playground AI, DALL-E, and other image generation models

An interesting feature of Perplexity is the ability to set a focus for the type of search you’re doing.

Focuses offered are:

- Web

- Writing (text generation)

- Academic (searches for academic papers)

- Video

- Math

- Social (searches for discussions and opinions)

Summary

Perplexity offers a clean user interface that is in many ways better than Andi’s. Both respect user privacy, but Perplexity does not describe itself as a privacy-first search engine. It stores user data for as long as an account is active and removes personal data 30 days after the account is deleted.

Nevertheless, Perplexity offers useful search results, including a summary. Many may find it far more useful than a standard search engine, even one that bolts AI onto the top of its search results the way Google’s AI Overviews does.

6. Phind

https://www.phind.com/search?home=true

Phind is a self-described “answer engine for developers,” but it’s also useful as an AI search engine. Its user interface is attractive, and its search results are likewise a pleasure to read. It accepts natural language search queries and provides lightning-fast, comprehensive answers in the form of a summary, with links to the web sources behind the information. A drop-down menu lets paid Pro users select more advanced LLMs such as GPT-4o, Claude Sonnet, and Claude Opus.

The Phind search results themselves are useful, and the websites it links to are authoritative. The bottom of the page also offers the opportunity to ask a follow-up question. But it still hallucinated when I asked it: “What is a Google-friendly way to build links to a website?”

It cited a 2010 Google web page as supporting documentation for advice (like commenting on blogs), but that advice doesn’t appear on the cited page.

Overall, Phind is a useful AI search engine, although it’s more heavily text-based than most of the other search engines on this list. That makes scrolling through large amounts of text more of a chore.

7. YOU AI Search Engine

YOU is an AI search engine that combines a large language model with up-to-date citations to websites, which makes it more than just a search engine.

You.com calls its AI assistant YouChat, a search assistant built into the search engine.

Another outstanding feature is that YouChat can respond to the latest news and recent events.

YouChat can write code, summarize complex topics, generate images, and create content (in any language).

You.com now offers four AI Modes which enable better responses to different kinds of search queries.

These are the new AI Modes:

- Smart Mode: Default version of AI search

- Genius Mode: Multistep reasoning, data visualization in charts, and file uploads

- Research Mode: Offers more citations and links

- Create Mode: AI image generation

You is available at You.com, as apps for Android and iPhone, and as a Chrome extension.

You.com SERPs With Links To Websites

You.com responds to a search query with a summary that contains small links to its sources of information.

Screenshot Of You Search Result

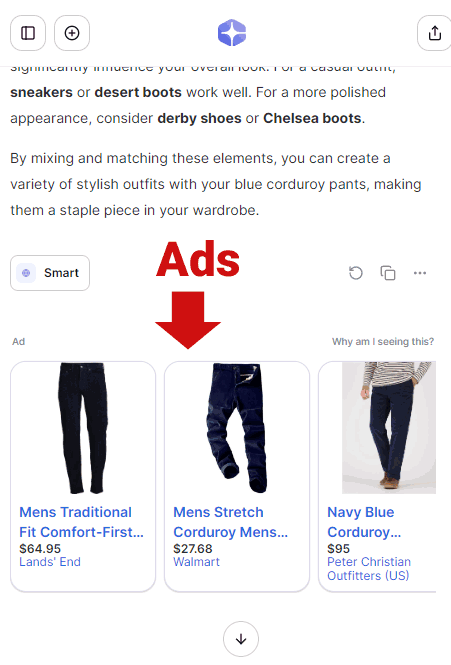

You.com Supported By Ads

You.com distinguishes itself from the other AI search engines on this list by showing advertising.

Screenshot Of You.com Ads

YOU is available in free and paid versions.

The free version of You offers a limited number of searches. The Pro and Team plans offer more searches and cost $15 and $25, respectively.

You.com Summary

Like Phind, You.com offers an AI search experience that’s heavily textual and lacks images. Unlike the other search engines on this list, You.com shows advertising. The search results are useful, but the ads and other limitations are drawbacks that the competition is free of.

AI Search Engine Future Is Now

AI search is increasingly competitive, with startups like Andi Search offering remarkably useful and accurate search results. Open source LLMs play a growing role, giving smaller search engines an opportunity to compete head-to-head with bigger rivals like Google and Bing.

Give these search engines a try: they offer tomorrow’s search experience today.

More resources:

- Great Search Engines You Can Use Instead Of Google

- How Search Engines Use Machine Learning: 9 Things We Know For Sure

- How Search Engines Work

Featured Image by Shutterstock/A9 STUDIO