The person asking the question said they have a large number of press releases that have accumulated inbound links from high-authority sites, presumably news sites and other sites that are important to the niche.

The question revolves around how to reorganize the site so that the entire press release section is blocked off from Google, but in a way that still lets the site benefit from the inbound links from the “high authority” sites.

“…We have a site with a large number of press release pages.

These are quite old…they have accumulated a large amount of backlinks but they don’t get any traffic.

But they are quite high authoritative domains pointing to them.”

The question continued:

“I was thinking… we can move it to an archive. but I still would like to benefit from the SEO power these pages have built up over time.

So is there a way to do this cleverly… moving them… to an archive… but then still… benefit from the SEO power these pages have built up over time?”

There’s a lot to unpack in that question, especially the part about the accumulation of links to a section of the site and the “SEO power” those pages have to spread around.

There are many SEO theories about links because it’s unclear how Google uses them.

Google is opaque (that is, not transparent) about how it uses links, and the way it uses them keeps evolving, just like the rest of its algorithms. That further complicates forming ideas about how links actually work.

Googlers have made statements about some of these ideas, like the concept of links conferring so-called authority to entire domains (Google’s John Mueller has reaffirmed that Google does not use a domain authority metric or signal).

So sometimes it’s best to keep an open mind about links in order to be receptive to information that might counter what is commonly accepted as true, especially if there are multiple statements from Googlers that contradict those ideas.

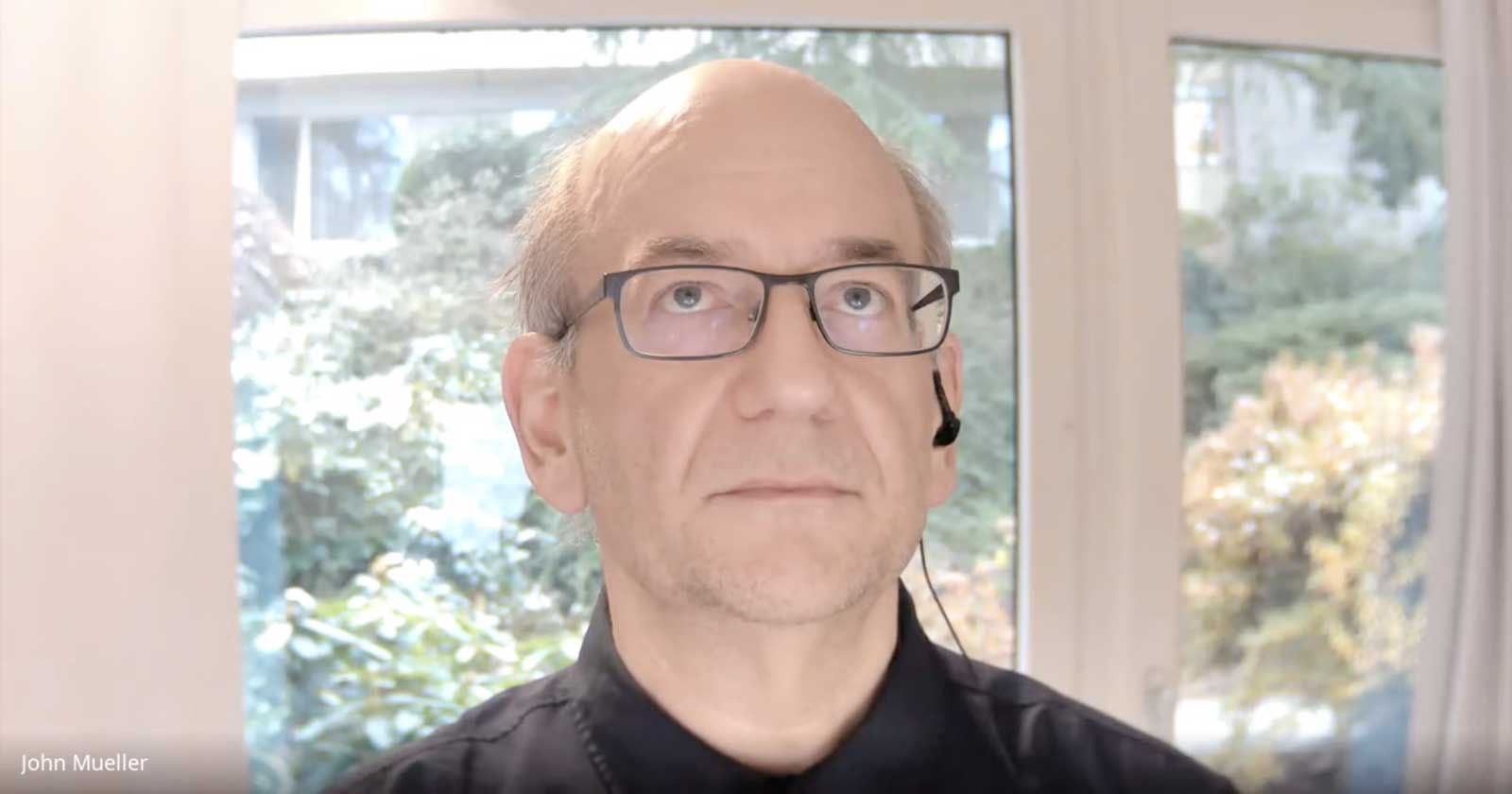

John Mueller paused to think before answering the question about the best way to benefit from the “SEO power” of old links.

Then he said:

“I mean, you can just redirect them to a different part of your site.

If you have an archive section for this kind of older content, which is very common, then… moving the content there and redirecting the URLs there, that essentially tells us to forward the links there.”
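The redirect Mueller describes can be sketched as a simple URL mapping. This is a minimal illustration, not something shown in the hangout: the `/press-releases/` and `/archive/press-releases/` paths and the `archive_redirect` helper are hypothetical, and the 301 status is an assumption based on the common practice of using a permanent redirect for a permanent move.

```python
from typing import Optional, Tuple

# Hypothetical paths, chosen only for illustration.
OLD_PREFIX = "/press-releases/"
NEW_PREFIX = "/archive/press-releases/"


def archive_redirect(path: str) -> Optional[Tuple[int, str]]:
    """Return (status, location) for an old press release URL, else None."""
    if path.startswith(OLD_PREFIX):
        # Keep the rest of the path so each old URL maps to its own archived page.
        slug = path[len(OLD_PREFIX):]
        return 301, NEW_PREFIX + slug
    # Any other URL is served normally, with no redirect.
    return None
```

Mapping each old URL to its own archived counterpart, rather than sending everything to a single archive landing page, keeps every inbound link pointing at the content it originally referenced.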

The person asking the question said that some of the content needed to be there for legal reasons and that Google doesn’t have to access those web pages all the time.

He said that he was considering disallowing the folder that contained the web pages.

Disallowing means asking search engine crawlers not to crawl certain pages by using the Robots Exclusion Protocol (robots.txt). Compliance is voluntary, but major search engines such as Google honor it.

Robots.txt is, among other things, a way to tell search engines which pages not to crawl.

His follow-up question was:
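To make the disallow rule concrete, here is a small sketch that uses Python’s standard `urllib.robotparser` module to check what such a rule would block. The folder name and the `www.example.com` URLs are hypothetical, and the standard-library parser only approximates how a real crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that disallows the whole press release folder.
ROBOTS_TXT = """\
User-agent: *
Disallow: /press-releases/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# A compliant crawler would skip anything under the disallowed folder...
can_crawl_press = parser.can_fetch(
    "Googlebot", "https://www.example.com/press-releases/2015-launch"
)
# ...but could still crawl the rest of the site.
can_crawl_products = parser.can_fetch(
    "Googlebot", "https://www.example.com/products/"
)

print(can_crawl_press, can_crawl_products)
```

Note that a rule like this only controls crawling. It does not by itself remove a URL from search results.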

“Will this also mean that the built-up SEO Power will be ignored from that point onward?”

Google’s Mueller closed his eyes and tilted his head up, pausing a moment before answering that question.

John Mueller Paused Before Answering Question about SEO Power of Links

John said:

“So probably we would already automatically crawl less if we recognize that they’re less relevant for your site.

Not the case that you need to block it completely.

If you were to block it completely with robots.txt, we would not know what was on those pages.

So essentially, when there are lots of external links pointing to a page that is blocked by robots.txt then sometimes we can still show that page in the search results but we’ll show it with a title based on the links and a text that says, oh, we don’t know what is actually here.

And if that page that is being linked to is something that is just referring to more content within your website, we wouldn’t know.

So we can’t, kind of, indirectly forward those links to your primary content.

So that’s something where if you see that these pages are important enough that people are linking to them, then I would try to avoid blocking them by robots.txt.

The other thing kind of to keep in mind, also is that these kind of press release pages, things that collect over time, usually the type of links that they attract are a very time-limited kind of thing, where a new site will link to it once. And then when you look at the number of links, it might look like there are lots of links here. But these are really old news articles which are in the archives of those news sites, essentially.

It’s also kind of a sign, well, they have links but those links are not extremely useful because it’s so irrelevant in the meantime.”

SEO Power of Links?

It’s notable that Mueller refrained from discussing the “SEO power” of links. Instead, he focused on the time-related quality of the links and the (lack of) usefulness, in terms of relevance, of old news-related links.

The SEO community tends to think of news-related links as being useful. But Mueller referred to “really old news articles” as being in archives, which he also described as a sign that those links are not useful because of relevance issues.

In general, news has time-based relevance. What was relevant five years ago may not be as useful or relevant in the present.

So in a way, Mueller seemed to be indirectly downplaying the notion of the “SEO power” of links, for two reasons. The first is where those links come from (archived news). The second is the passage of time, which makes the links less useful because they refer to a topic that may not be evergreen but was of the moment, a moment that has already passed.

Citation

Watch the Google office hours hangout: