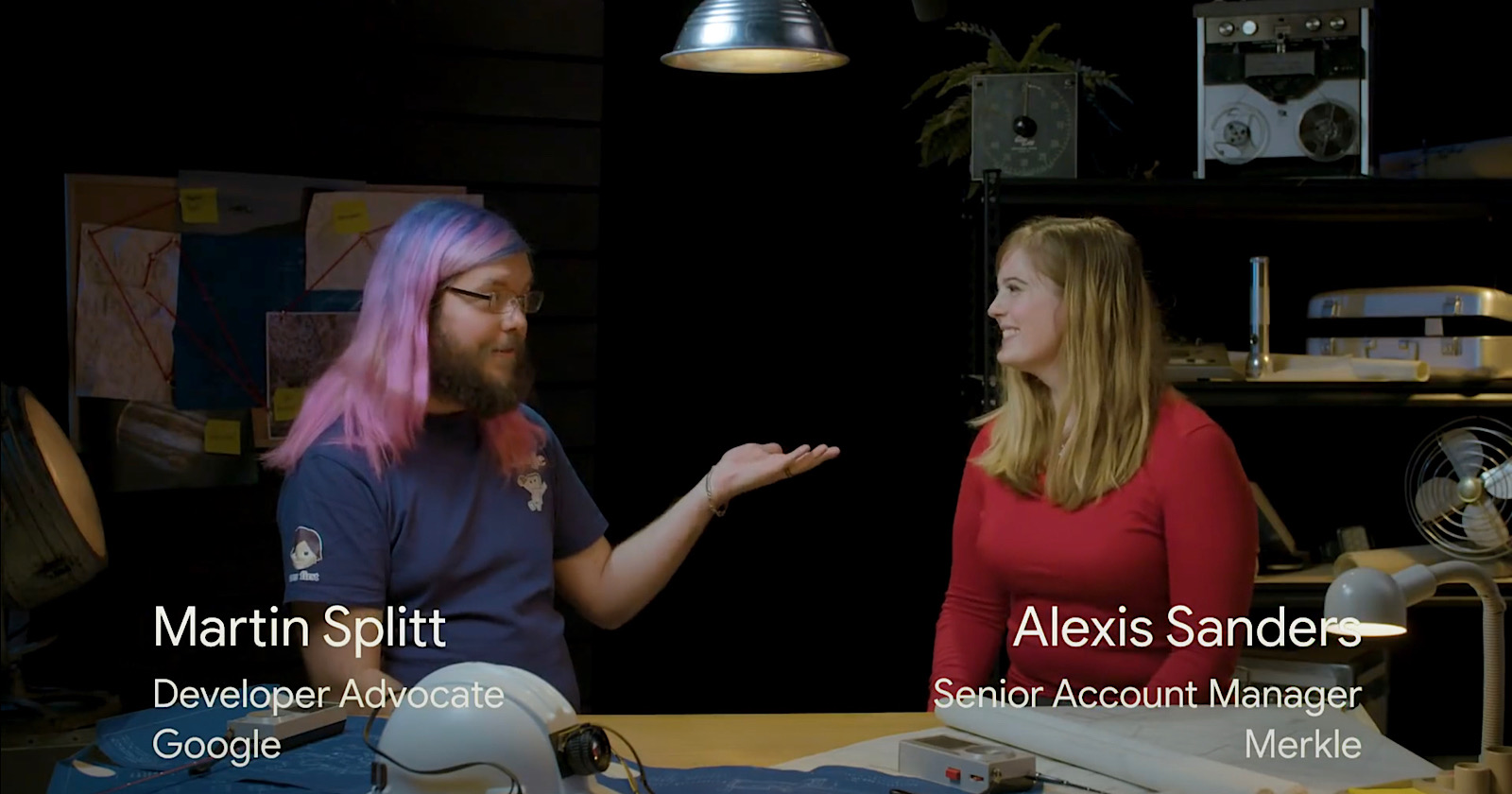

Google goes in depth on crawl budget, answering some of the most common SEO-related questions in the latest episode of SEO Mythbusting.

Joined by Merkle’s Alexis Sanders, Google’s Martin Splitt answers over a dozen questions that SEOs frequently ask about crawl budget.

Here’s a quick recap of each question and answer, along with its corresponding time stamp in the video.

What is crawl budget? (1:15)

Crawl budget is the balance between crawling as much of a site’s content as quickly as possible and not overwhelming the site’s servers.

The budget is the number of requests Googlebot can make at the same time without overwhelming the server.

What is crawl demand? (1:47)

This refers to how often Google wants to crawl a site based on its subject matter.

For example, a breaking news site will likely have a higher crawl demand compared to a recipe site.

How does Googlebot make its crawl rate and crawl demand decisions? (2:44)

Google determines how often to crawl a page based on how frequently its content changes. If the content rarely changes, the site will not be crawled as often as others.

ETags, HTTP headers, last modified dates, and similar (3:43)

Google uses signals such as ETags, other HTTP headers, and last-modified dates to determine how often content should be crawled.

An ETag is an HTTP caching header that contains a fingerprint of the content, which makes it possible to detect changes over time.
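
To make the fingerprint idea concrete, here is a minimal Python sketch of how a server might derive an ETag from a page body and answer a repeat request. The function names `make_etag` and `respond` are hypothetical, the choice of SHA-256 is an assumption, and real `If-None-Match` handling (lists of tags, weak validators) is more involved than this single comparison.

```python
import hashlib
from typing import Optional, Tuple


def make_etag(body: bytes) -> str:
    """Return a quoted hex digest of the body to use as an ETag value."""
    return '"' + hashlib.sha256(body).hexdigest() + '"'


def respond(body: bytes, if_none_match: Optional[str]) -> Tuple[int, bytes]:
    """Return (status, payload) for a request carrying an optional If-None-Match value."""
    if if_none_match == make_etag(body):
        # The client's cached copy has the same fingerprint: nothing to resend.
        return 304, b""
    # No cached copy, or the content changed since it was cached.
    return 200, body
```

If the body is unchanged, the fingerprint matches and the server can reply with 304 Not Modified instead of sending the page again.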

What size of sites should worry about crawl budget? (4:35)

This is primarily a concern for large sites with millions of pages. If your site has under a million pages then you do not have to be concerned about crawl budget.

Server setup vs crawl budget (5:00)

Crawl budget is frequently cited as a problem for site owners when the underlying issue is usually server setup or quality of content.

Crawl frequency vs quality of content (6:18)

Being crawled more frequently is not an indication that content is high quality. Likewise, being crawled less frequently is not an indication that content is low quality.

What to expect to see in one’s log files if Google is testing one’s server? (7:45)

Site owners might see an increase in crawl activity, followed by a decrease, followed by another increase. In other words, a wave pattern.
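
One rough way to look for such a pattern is to count Googlebot requests per day in the access log. The Python sketch below assumes an Apache/Nginx-style log line with a timestamp such as `[10/Jul/2020:06:25:13 +0000]` and matches on the user-agent text alone, which can be spoofed. The function name `googlebot_hits_per_day` is hypothetical.

```python
import re
from collections import Counter

# Captures the date part of a timestamp such as [10/Jul/2020:06:25:13 +0000].
DATE_RE = re.compile(r"\[(\d{2}/\w{3}/\d{4}):")


def googlebot_hits_per_day(lines):
    """Count log lines that mention Googlebot, grouped by the date in the timestamp."""
    hits = Counter()
    for line in lines:
        if "Googlebot" not in line:
            continue  # skip requests from other clients
        match = DATE_RE.search(line)
        if match:
            hits[match.group(1)] += 1
    return hits
```

Plotting or simply printing the daily counts makes rises and dips in crawl activity easier to spot than reading raw log lines.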

Tips on how to get your site crawled accurately during a site migration (8:18)

Martin Splitt recommends progressively updating your sitemap, noting what has changed and when. Beyond that, try to ensure both servers are running as smoothly as possible.
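
Noting what has changed and when maps naturally onto the `lastmod` element of the sitemap protocol. The Python sketch below shows one way to generate such a sitemap, under stated assumptions: the function name `build_sitemap` is hypothetical, and the URLs and dates used with it would come from your own migration records.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs, dates as YYYY-MM-DD strings."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod records when this URL last changed, e.g. when it was migrated.
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")
```

Regenerating the file as each batch of pages moves keeps the change dates current throughout the migration.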

Crawl budget and the different levels of one’s site’s infrastructure (9:40)

The ways in which crawl budget affects different levels of site infrastructure depend on the site itself.

This is usually not something site owners should worry about, Splitt says.

Does crawl budget affect rendering as well? (10:37)

Yes, crawl budget does affect rendering. When Googlebot renders content, it fetches additional resources, which come out of the site’s crawl budget.

Caching of resources and crawl budget (11:46)

Google caches resources as aggressively as possible to avoid having to recrawl them every time.

Crawl budget and specific industries such as publishing (13:34)

E-commerce sites and large publishers should be most concerned about crawl budget.

What can be – generally speaking – recommended to help Googlebot out when crawling one’s site? (15:03)

Splitt recommends blocking the crawling of resources that do not absolutely need to be crawled. This will help Googlebot crawl more efficiently.

What are the usual pitfalls people get into with crawl budget? (16:52)

A frequent issue site owners run into is blocking resources in robots.txt that Googlebot really needs.

For example, some sites block their CSS files from being crawled, even though Googlebot needs them in order to render the content as visitors see it in a web browser.
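
A quick sanity check for this pitfall is to test a few important resource URLs against your robots.txt rules. The Python sketch below uses the standard library's `urllib.robotparser`. The robots.txt content, the URLs, and the helper name `is_blocked` are all hypothetical, and Python's parser is not guaranteed to match Googlebot's own rule handling (it does simple prefix matching), so treat the result as an approximation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks a stylesheet directory by mistake.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /assets/css/
"""


def is_blocked(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given rules disallow user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# The stylesheet is blocked, so a renderer could not load the page's styling.
print(is_blocked(ROBOTS_TXT, "Googlebot", "https://example.com/assets/css/site.css"))
```

Running the same check over the CSS and JavaScript files your templates load can catch a rule that blocks more than intended.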

Can one tell Googlebot to crawl one’s site more? (17:40)

No, this cannot be done. Site owners can limit how often Googlebot crawls, but there’s no way to trigger Googlebot to crawl more frequently.

See the full video below:

https://youtu.be/am4g0hXAA8Q