A robots.txt file is a key component of your website’s SEO, but sometimes these files do more harm than good. For example, your robots.txt file may be blocking search engine spiders from crawling important pages on your site, or it may be preventing your site from being indexed altogether.

Google wants to make it easier for you to detect these kinds of errors, which is why it announced today that it is launching an updated robots.txt testing tool in Webmaster Tools. You can find the updated testing tool in the Crawl section of Webmaster Tools.

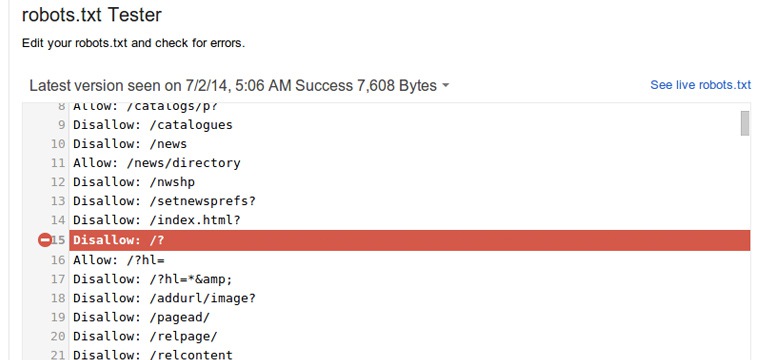

With the new tool you will see your current robots.txt file, and have the ability to test specific URLs to see whether or not they’re disallowed for crawling. The tool will guide users through complicated directives in the robots.txt file and highlight the specific one that led to Googlebot’s final decision.
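For a rough local check of the same idea, here is a minimal sketch using Python's standard-library `urllib.robotparser`. The robots.txt contents and the `example.com` URLs are hypothetical, and `check_urls` is a helper name invented for this illustration. Python's parser does not necessarily resolve conflicting rules the same way Googlebot does, so treat the output as a first pass rather than a substitute for Google's tool.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def check_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return a dict mapping each URL to True (crawlable) or False (disallowed)."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


results = check_urls(
    ROBOTS_TXT,
    [
        "https://example.com/",
        "https://example.com/private/report.html",
    ],
)

for url, allowed in results.items():
    print(("allowed  " if allowed else "blocked  ") + url)
```

To run it against a live file instead, `RobotFileParser` also offers `set_url()` and `read()`, which fetch the robots.txt from your server before you call `can_fetch()`.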

You can make changes in the file with the new tool, and test the changes as you go. Once everything is working as it should, just upload the new version of the file to your server to make the changes take effect.

If you know of errors on some of your existing sites, Google recommends double-checking the robots.txt files of those sites. A common problem comes from old robots.txt files that block CSS, JavaScript, or mobile content. Once such a problem is identified, the fix is usually simple.
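As an illustration of that kind of problem, the sketch below checks a list of CSS and JavaScript URLs against an old-style robots.txt. The file contents, the asset URLs, and the `blocked_assets` helper are all assumptions made up for this example. It uses only plain path prefixes because Python's `urllib.robotparser` does not handle the wildcard patterns that Googlebot supports.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical legacy robots.txt that blocks stylesheet and script folders.
OLD_ROBOTS_TXT = """\
User-agent: *
Disallow: /css/
Disallow: /js/
"""

# Hypothetical assets that the site's pages depend on.
ASSET_URLS = [
    "https://example.com/css/site.css",
    "https://example.com/js/app.js",
    "https://example.com/images/logo.png",
]


def blocked_assets(robots_txt, asset_urls, user_agent="Googlebot"):
    """Return the asset URLs that the given robots.txt disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in asset_urls if not parser.can_fetch(user_agent, url)]


blocked = blocked_assets(OLD_ROBOTS_TXT, ASSET_URLS)
for url in blocked:
    print("blocked asset:", url)
```

In this made-up case, removing the two `Disallow` lines for `/css/` and `/js/` would be the whole fix.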

Google’s John Mueller chimed in on the discussion and said he recommends you try this new tool, even if you’re sure that your robots.txt file is fine.

Some of these issues can be subtle and easy to miss. While you’re at it, also double-check how the important pages of your site render with Googlebot, and whether you’re accidentally blocking any JS or CSS files from crawling.

For more information about robots.txt, here’s a Webmaster Help page on how the files are processed.