The alternative health site Mercola published an article stating that it lost 99% of its traffic from the June 2019 Google Broad Core update. The article cites the Quality Raters Guidelines and asserts that Google’s algorithm is targeting sites that are described with negative sentiment in Wikipedia.

Could Google be using Wikipedia to lower rankings of websites?

Mercola Claims Wikipedia Responsible for Ranking Drops

Dr. Mercola cites several articles he’s read online in order to build his case that negative statements published on Wikipedia about Mercola.com are the reason why Google has stopped ranking Mercola for health related queries.

According to Dr. Mercola:

“Google is now manually lowering the ranking of undesirable content, largely based on Wikipedia’s assessment of the author or site.”

Selective Quotes Can Be Misleading

That statement is based on what’s written in the Quality Raters Guidelines. The quoted part is an instruction telling the quality raters to use Wikipedia to check on the reputation of a website.

But that’s a selective quote. A selective quote is where someone quotes a portion of a statement to prove a point. But the point falls apart when you read it in the entire context.

For example, it’s like someone quoting another person as having said, “I beat my son…” when in fact, the person had said, “I beat my son playing Monopoly.”

The full context of what’s in the Quality Raters Guidelines includes instructions to use advanced search parameters in Google, to check Yelp and other review sites, and to check what people on social media say about those sites.

The instructions for researching a website’s reputation go far beyond checking Wikipedia.

What the Quality Raters Guidelines Says

“Use reputation research to find out what real users, as well as experts, think about a website. Look for reviews, references, recommendations by experts, news articles, and other credible information created/written by individuals about the website.

News articles, Wikipedia articles, blog posts, magazine articles, forum discussions, and ratings from independent organizations can all be sources of reputation information.”

Google even provides guidance on how to use advanced search operators:

“Using ibm.com as an example, try one or more of the following searches on Google:

● [ibm -site:ibm.com]: A search for IBM that excludes pages on ibm.com.

● [“ibm.com” -site:ibm.com]: A search for “ibm.com” that excludes pages on ibm.com.

● [ibm reviews -site:ibm.com] A search for reviews of IBM that excludes pages on ibm.com.

● [“ibm.com” reviews -site:ibm.com]: A search for reviews of “ibm.com” that excludes pages on ibm.com.

● For content creators, try searching for their name or alias”
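The four search patterns in that passage can be generated for any site. The following is a minimal sketch in Python; the function name `reputation_queries` is an assumption made for illustration and is not part of the guidelines. It only builds the query strings a rater would type into Google.

```python
def reputation_queries(name: str, domain: str) -> list[str]:
    """Build the four reputation-research searches from the quoted passage.

    `name` is the brand or site name (e.g. "ibm"), `domain` is the site
    itself (e.g. "ibm.com"). Each query excludes pages on the site so that
    only what other people say about it is returned.
    """
    exclude = f"-site:{domain}"
    return [
        f"{name} {exclude}",                # mentions of the name elsewhere
        f'"{domain}" {exclude}',            # mentions of the exact domain elsewhere
        f"{name} reviews {exclude}",        # reviews of the name
        f'"{domain}" reviews {exclude}',    # reviews of the exact domain
    ]


for query in reputation_queries("ibm", "ibm.com"):
    print(query)
```

Nothing in these queries is specific to Wikipedia. They surface whatever other sites say about the one being researched.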

It is clear that the mention of Wikipedia is within the context of teaching quality raters how to research reputation information for the purpose of providing feedback on the quality of search results.

There is nothing in those instructions, including the use of advanced search operators, that indicates Wikipedia is being used by Google’s algorithm.

To use this section to guess that Google is using Wikipedia for reputation ranking is an extreme leap.

This is not evidence of the use of Wikipedia by Google’s algorithm.

Quality Raters Guidelines and Google’s Algorithm

Many SEOs today assume that what’s in the QRG reflects what is in Google’s algorithm. That’s a mistake.

The Quality Raters Guidelines is a manual for quality raters that teaches them how to rate websites for the purpose of evaluating experimental changes to Google’s algorithm.

For example, John Mueller recently described the raters doing a side by side examination of search results with and without a change to the algorithm (watch video here).

“Essentially our quality raters, what they do is when teams at Google make improvements to the algorithm, we’ll try to test those improvements.

So what will happen is we’ll send the quality raters a list of search results pages with a version with that change and without that change, and they’ll go through and see like which of these results are better and why are they better.

And to help them evaluate those two results we have the Quality Raters Guidelines.”
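To make the side-by-side process concrete, here is a toy sketch in Python of how rater preferences between two versions of a results page could be tallied. The data layout, the labels "control", "experiment" and "same", and the function name `summarize` are all assumptions for illustration. The source does not describe Google’s actual tooling.

```python
from collections import Counter

# Hypothetical rater judgments: for each query, which results page was better.
# "control" = without the algorithm change, "experiment" = with the change.
judgments = [
    {"query": "query 1", "preferred": "experiment"},
    {"query": "query 2", "preferred": "experiment"},
    {"query": "query 3", "preferred": "control"},
    {"query": "query 4", "preferred": "same"},
    {"query": "query 5", "preferred": "experiment"},
]


def summarize(judgments):
    """Return the share of judgments that preferred each side."""
    counts = Counter(j["preferred"] for j in judgments)
    total = sum(counts.values())
    sides = ("control", "experiment", "same")
    if total == 0:
        return {side: 0.0 for side in sides}
    return {side: counts[side] / total for side in sides}


print(summarize(judgments))
```

In this sketch the ratings only describe which page the raters preferred. They are feedback about a change and do not rank any individual site.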

The Quality Raters Guidelines instructs raters to use Wikipedia to check the reputation of a site. But it also instructs raters to use blogs, newspapers, review sites and advanced search operators to research the reputation of a site.

It’s reasonable to take those instructions at face value: Google is instructing quality raters how to check if Google’s returning high quality sites.

It’s a huge leap to take the instruction to raters to check Wikipedia as meaning that Wikipedia is also used by Google to judge a site’s reputation.

Does Google Use Wikipedia for Reputation Analysis?

I’ve never come across any research or patents that describe using Wikipedia for analyzing the reputation of a website. The research I have come across deals with solutions like using Wikipedia to classify YouTube channels and for identifying entities that share the same name.

Bill Slawski is an expert on search related patents. I did a quick search at Bill Slawski’s SEOByTheSea website for anything with Wikipedia and he has not published anything to indicate that Google uses Wikipedia for reputation analysis.

The Problem with SEO Hypotheses

A hypothesis is an explanation for something. A theory is based on proofs, like experiments.

In SEO, there are many hypotheses and theories. A hypothesis is when someone proposes that Google is using something, but lacks proof such as research or patents by Google (or any other research body like a university or Microsoft).

Hypotheses are built on zero to thin evidence, such as sketchy correlation studies. In my experience, most hypotheses have consistently proven to be false.

The fact at this moment is that Google’s Quality Raters Guidelines instruct raters to check Wikipedia for the purpose of judging changes to Google’s algorithm. Period.

To read between the lines of those instructions to conclude that it’s directly related to Google’s algorithm would be a mistake.

Bill Slawski on Wikipedia for Reputation Ranking

I asked Bill Slawski, of GoFishDigital, if he knew of any patents related to the use of Wikipedia for reputation analysis and ranking.

“Ben Gomes made a statement on the quality raters guidelines: ‘They (the Quality Rater Guidelines) don’t tell you how the algorithm is ranking results, but they fundamentally show what the algorithm should do.’

I have seen mentions of Wikipedia in Google Patents, but none that say that Google might use information from there to help rank the quality of pages based upon a reputation of a company or a content creator.”

I then asked Bill about using the Quality Raters Guidelines to find hints about how Google ranks websites:

“Those human evaluations are only an attempt by humans to let search engineers have some feedback about the quality of pages in search results. They are providing tools to help them provide feedback, and not to actually rank those pages in the same way that Google might be.”

Bill Slawski also referred me to Google research from 2018 that uses Wikipedia for understanding relationships between words and their context within sentences. This research is about understanding words within their context. It is not about using Wikipedia to judge and rank websites.

It is simply an example of Google research that has a reliance on Wikipedia.

The research is called, Open Sourcing BERT: State-of-the-Art Pre-training for Natural Language Processing.

Does Google Judge the Reputation of a Site?

In 2010, Google officially announced they were doing sentiment analysis in order to judge websites. The blog post authored by the former head of Google Search was called, Being Bad to Your Customers is Bad for Business.

The announcement referenced an article in the New York Times that left the impression that links to a bad merchant from sites saying negative things about the merchant had caused it to rank well.

This is part of the announcement:

“…in the last few days we developed an algorithmic solution which detects the merchant from the Times article along with hundreds of other merchants that, in our opinion, provide an extremely poor user experience.

The algorithm we incorporated into our search rankings represents an initial solution to this issue, and Google users are now getting a better experience as a result.”

The article then linked to a 2007 research paper titled, Large-Scale Sentiment Analysis for News and Blogs (PDF).

The research paper states:

“We determine the public sentiment on each of the hundreds of thousands of entities that we track, and how this sentiment varies with time.”
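As a rough illustration of what tracking sentiment per entity over time means, here is a toy sketch in Python. It uses a tiny hand-made word list, which is an assumption made for this example and not the method of the research paper. The entity name, the sample texts and the function names are hypothetical.

```python
from collections import defaultdict

# Toy sentiment lexicons, invented for this example.
POSITIVE = {"good", "great", "helpful", "trusted"}
NEGATIVE = {"bad", "scam", "rude", "misleading"}


def polarity(text):
    """Score a text from -1.0 (all negative words) to 1.0 (all positive)."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    if pos + neg == 0:
        return 0.0  # no sentiment-bearing words found
    return (pos - neg) / (pos + neg)


def sentiment_by_day(mentions):
    """Average polarity per entity per day.

    `mentions` is an iterable of (date, entity, text) tuples.
    Returns {entity: {date: mean polarity}}.
    """
    buckets = defaultdict(lambda: defaultdict(list))
    for day, entity, text in mentions:
        buckets[entity][day].append(polarity(text))
    return {
        entity: {day: sum(s) / len(s) for day, s in sorted(days.items())}
        for entity, days in buckets.items()
    }


mentions = [
    ("2019-06-01", "ExampleShop", "Great service and helpful staff."),
    ("2019-06-01", "ExampleShop", "Rude support, total scam!"),
    ("2019-06-02", "ExampleShop", "Misleading prices, bad experience."),
]
print(sentiment_by_day(mentions))
```

A production system would need far more than word counting, but the shape of the output, a sentiment score per entity per time period, matches what the quoted sentence describes.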

There is another version of that same research paper that is longer and more complete (Download PDF here).

The longer version concludes:

“There are many interesting directions that can be explored. We are interested in how sentiment can vary by demographic group, news source or geographic location. By expanding our spatial analysis of news entities to sentiment maps, we can identify geographical regions of favorable or adverse opinions for given entities.

We are also studying in analyzing the degree to which our sentiment indices predict future changes in popularity or market behavior.”

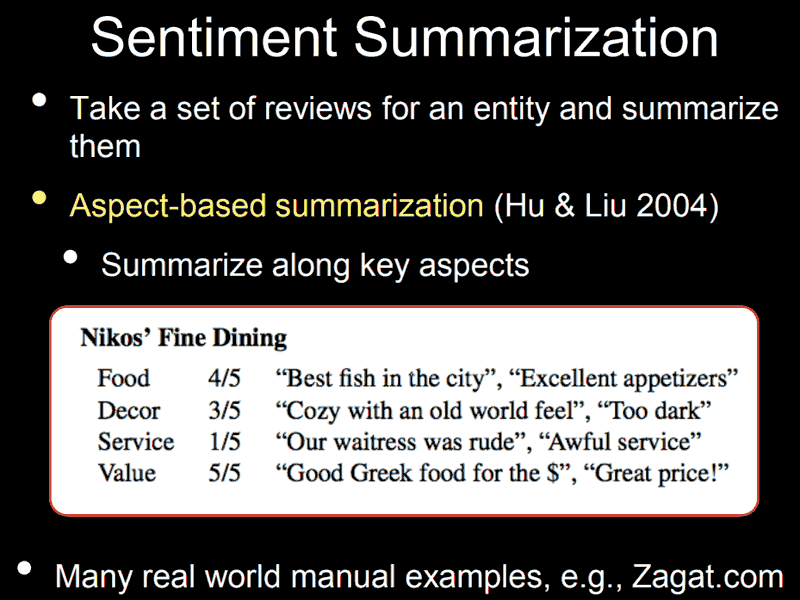

There is also a Google Research PDF from 2008 called, Leveraging User Annotations in Sentiment Summarization. It provides an overview of extracting the positive or negative sentiment in user reviews.

This is a screenshot of a presentation by a Googler about sentiment summarization.

The 57 page PDF of Leveraging User Annotations in Sentiment Summarization.

The 28 page PDF of Leveraging User Annotations in Sentiment Summarization.

Does Google’s Algorithm Use Sentiment Analysis?

So far, I have written about Reputation Analysis. However, this is generally called Sentiment Analysis. There was a lot of research into Sentiment Analysis in the mid-2000s. Google is still publishing research on it.

One of the most recent publications is called, Multilingual Multi-class Sentiment Classification Using Convolutional Neural Networks.

The paper proposes a language independent way to gauge how people feel about things like products and businesses (sentiment analysis).

The research paper states:

“This paper describes a language-independent model for multi-class sentiment analysis using a simple neural network architecture… The advantage of the proposed model is that it does not rely on language-specific features such as ontologies, dictionaries, or morphological or syntactic pre-processing.

The social media has revolutionized the web by transforming users from being passive recipients of information into contributors and influencers. This has a direct impact on businesses, products and governance.

Many of the users’ posts are opinions about products and brands that impact other consumers’ buying decisions and affect brand trustworthiness. Negative reviews circulated online may cause critical problems for the reputation, competitive power, and survival chances of any business.”

There is no proof that Google uses such a system for sentiment analysis. However, the fact that this research paper exists makes it a good proof of concept that this kind of sentiment analysis has been researched and is theoretically possible. Most interestingly, it relies on social media like Twitter and there is no mention of Wikipedia at all.

Takeaway: No Proof Google Uses Wikipedia to Judge Websites

- There is no patent or research paper by Google that states a process for using Wikipedia to extract sentiment information for ranking purposes.

- It is incorrect to use guidance in the Quality Raters Guidelines for how to research a website as evidence that Google’s algorithm does the same thing.

Read the Mercola article here: Google Buries Mercola in Their Latest Search Engine Update.