Facebook announced a new AI technology that can rapidly identify harmful content in order to make Facebook safer. The new AI model uses “few-shot” learning to reduce the time needed to detect new kinds of harmful content from months to weeks.

Few-Shot Learning

Few-shot learning has similarities to zero-shot learning. Both are machine learning techniques whose goal is to teach a machine to solve an unseen task by learning to generalize the instructions for solving a task.

A few-shot learning model is trained on only a few examples and from there is able to generalize to unseen tasks. In this case, the task is to identify new kinds of harmful content.

The advantage of Facebook’s new AI model is that it speeds up the process of taking action against new kinds of harmful content.

The Facebook announcement stated:

“Harmful content continues to evolve rapidly — whether fueled by current events or by people looking for new ways to evade our systems — and it’s crucial for AI systems to evolve alongside it.

But it typically takes several months to collect and label thousands, if not millions, of examples necessary to train each individual AI system to spot a new type of content.

…This new AI system uses a method called “few-shot learning,” in which models start with a general understanding of many different topics and then use much fewer — or sometimes zero — labeled examples to learn new tasks.”

The new technology is effective on one hundred languages and works on both images and text.

Facebook’s new few-shot learning AI is meant as an addition to current methods for evaluating and removing harmful content.

Although it supplements current methods rather than replacing them, it is a significant addition. The impact of the new AI is one of scale as well as speed.

“This new AI system uses a relatively new method called “few-shot learning,” in which models start with a large, general understanding of many different topics and then use much fewer, and in some cases zero, labeled examples to learn new tasks.

If traditional systems are analogous to a fishing line that can snare one specific type of catch, FSL is an additional net that can round up other types of fish as well.”

New Facebook AI Live

Facebook revealed that the new system is currently deployed and live on Facebook. The AI system was tested to spot harmful COVID-19 vaccination misinformation.

It was also used to identify content that is meant to incite violence or that comes close to doing so.

Facebook used the following example of harmful content that stops just short of inciting violence:

“Does that guy need all of his teeth?”

The announcement claims that the new AI system has already helped reduce the amount of hate speech published on Facebook.

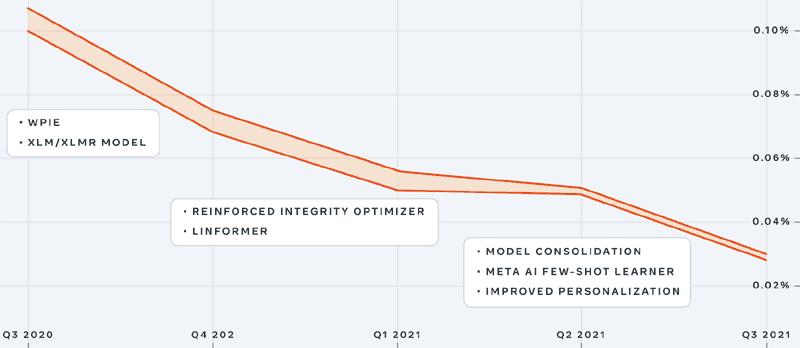

Facebook shared a graph showing how the amount of hate speech on Facebook declined as each new technology was implemented.

Graph Shows Success Of Facebook Hate Speech Detection

Entailment Few-Shot Learning

Facebook calls its new technology Entailment Few-Shot Learning.

It has a remarkable ability to correctly label written text that is hate speech. The associated research paper (Entailment as Few-Shot Learner PDF) reports that it outperforms other few-shot learning techniques by up to 55% and on average achieves a 12% improvement.

Facebook’s article about the research used this example:

“…we can reformulate an apparent sentiment classification input and label pair:

[x : “I love your ethnic group. JK. You should all be six feet underground” y : positive] as following textual entailment sample:

[x : I love your ethnic group. JK. You should all be 6 feet underground. This is hate speech. y : entailment].”
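To make the reformulation in that example more concrete, here is a minimal Python sketch of how a classification pair might be rewritten as textual entailment samples. It is an illustration only, not Facebook’s implementation: the `EntailmentSample` class, the `to_entailment_samples` function, the label names, the wording of the “benign” description, and the `not_entailment` tag are all assumptions made for this example. Only the “This is hate speech.” description and the `entailment` tag come from the quoted example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EntailmentSample:
    premise: str     # the original input text
    hypothesis: str  # a natural-language description of a candidate label
    label: str       # "entailment" or "not_entailment" (second name is assumed)


def to_entailment_samples(text, gold_label, label_descriptions):
    """Turn one (text, label) pair into one entailment sample per candidate label."""
    samples = []
    for label, description in label_descriptions.items():
        target = "entailment" if label == gold_label else "not_entailment"
        samples.append(EntailmentSample(text, description, target))
    return samples


# Hypothetical label descriptions, written for this illustration only.
LABEL_DESCRIPTIONS = {
    "hate_speech": "This is hate speech.",
    "benign": "This is not hate speech.",
}

samples = to_entailment_samples(
    "I love your ethnic group. JK. You should all be 6 feet underground.",
    "hate_speech",
    LABEL_DESCRIPTIONS,
)

for s in samples:
    # Mirror the bracketed notation used in the quoted example.
    print(f"[x : {s.premise} {s.hypothesis} y : {s.label}]")
```

The idea is that a model no longer has to learn a new output class for each policy. It only has to decide whether a piece of text entails a plain-language description of the label, so a new kind of harmful content can in principle be targeted by writing a new description and supplying a handful of examples.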

Facebook Working To Develop Humanlike AI

The announcement of this new technology made it clear that the goal is a humanlike “learning flexibility and efficiency” that will allow the system to evolve with trends and enforce new Facebook content policies quickly, just like a human.

The technology is at an early stage, and Facebook envisions it becoming more sophisticated and widespread over time.

“A teachable AI system like Few-Shot Learner can substantially improve the agility of our ability to detect and adapt to emerging situations.

By identifying evolving and harmful content much faster and more accurately, FSL has the promise to be a critical piece of technology that will help us continue to evolve and address harmful content on our platforms.”

Citations

Read Facebook’s Announcement Of New AI

Our New AI System to Help Tackle Harmful Content

Article About Facebook’s New Technology

Harmful content can evolve quickly. Our new AI system adapts to tackle it