Magento is often regarded as a challenging e-commerce platform on which to achieve technical excellence.

Despite its complex rewrite engine, codebase, and dynamic content and URLs, leading global brands such as Burger King, Coca-Cola, and Tom Dixon all use the platform.

Magento boasts a number of great achievements including increasing sales and user time on site, as well as streamlining order processes. From experience this is true. However, out of the box, Magento has a number of issues.

The majority of Magento’s known SEO issues are fairly simple to fix. However, a lot of them require assistance from developers.

Tom Dixon uses a Magento Enterprise implementation to sell products globally.

At the moment there is a lot of activity within the Magento community as webmasters are preparing to migrate away from Magento 1, which will no longer be supported after summer 2018.

I’ve been fortunate enough to work with a number of brands using Magento, ranging from consumer electrical goods to luxury designer jewelry, helping them optimize their websites during and after the build stage.

This article will explain many of the common Magento SEO issues, as well as how to overcome them. Whether or not you’re using Magento Community or Enterprise versions, the complexity of the platform means you may well be affected by a number of these issues.

General Magento SEO Issues

In a mobile world where site speed matters more and more, Magento is a slow platform. In some cases, this slow load speed can negatively impact organic search performance.

This isn’t just because a slow website is bad for users; a slow website is also harder for search engines to crawl.

To speed up your Magento installation, here are some common fixes that can be applied prior to, and after setup:

- Use a server with sufficient RAM and configure it correctly.

- Disable Magento logs (default) and enable log cleaning in the back-end.

- Utilize a content delivery network like Cloudflare, and if you have a high-traffic website it could be worthwhile to use their Argo product.

- Compress front-end assets and images using a compression service. This won’t compromise on quality but by reducing the weight of assets to load it can make a big difference, especially on mobile devices.

There is a lot more to site speed than just the above points, and I’d definitely recommend that you work toward making your store load as quickly as possible on all devices.

Product SEO Issues

Simple and Configurable Products: Canonical Fixes

Like most e-commerce vendors, you will want to use simple products alongside configurable products.

Because a lot of products are configurable – for instance, a sweater may come in a number of different colors – a lot of Magento users will create the same sweater as a simple product to show the different variations of the sweater on the product listing page.

If you’re taking this approach, it’s also unlikely that you’re rewriting the content on each of these pages.

To resolve this duplication issue, but keep your product listing pages looking full, you need to use the canonical tag – linking back to the core configurable product – to indicate to Google that they are duplicate variants of the one item.
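
As a rough illustration of that mapping (written in Python rather than Magento's PHP, purely to show the logic), each simple variant page emits a canonical tag pointing at its configurable parent. The URLs, the `PARENT_OF` lookup, and the function names are all hypothetical:

```python
from html import escape

# Hypothetical URLs: each simple (variant) product maps to its configurable parent.
PARENT_OF = {
    "https://example.com/sweater-navy": "https://example.com/sweater",
    "https://example.com/sweater-red": "https://example.com/sweater",
}


def canonical_tag(target_url: str) -> str:
    """Return the <link rel="canonical"> element for the given target URL."""
    return f'<link rel="canonical" href="{escape(target_url, quote=True)}" />'


def canonical_for(page_url: str) -> str:
    """Variants point at their parent; any other page is self-referencing."""
    return canonical_tag(PARENT_OF.get(page_url, page_url))


print(canonical_for("https://example.com/sweater-navy"))
```

In a real store the parent lookup would come from the catalog's product relations rather than a hard-coded dictionary.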

Product Title Tags and Headers

By default, Magento tends to create under-optimized title tags and misuses page header tags.

The default Magento title tag is just the product name, which can be both ambiguous and unhelpful.

My preference is to always manually assign a title tag. That way you can optimize it how you want it.

That being said, large e-commerce vendors may have hundreds or thousands of products and asking someone to write all those title tags won’t be an effective use of their time.

I’d recommend that for products you set a convention to include product variables, such as product type (sweater), color (navy), and gender and potentially even brand. Then for key products within the range manually optimize their title tags.
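
A convention like this can be sketched in a few lines. The example below is Python for illustration only; the attribute names (`brand`, `gender`, `color`, `product_type`, `manual_title`), the ordering, and the store name are assumptions, not Magento fields:

```python
def build_title(product: dict, store_name: str = "Example Store") -> str:
    """Build a title tag from product attributes, unless one was written by hand."""
    # A manually optimized title (for key products) always wins.
    manual = product.get("manual_title")
    if manual:
        return manual
    # Otherwise fall back to the convention: brand, gender, color, product type.
    parts = [product.get(key) for key in ("brand", "gender", "color", "product_type")]
    descriptor = " ".join(p.strip() for p in parts if p and p.strip())
    return f"{descriptor} | {store_name}"


print(build_title({"brand": "Acme", "gender": "Men's", "color": "Navy", "product_type": "Sweater"}))
```

Missing attributes are simply skipped, so a product without a brand still gets a sensible title.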

While header tag usage is only a minor consideration, for me it is one box that a lot of developers leave unticked when building a website or Magento template. I’ve seen product-listing pages with countless H1s, others with no header tags at all.

While there are more important things to fix and consider, to be 100 percent technically excellent this box needs to be ticked, and the page’s header tags need to follow the correct hierarchy.
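
One way to spot-check a template is a small script that lists the heading levels in the rendered HTML. This is a minimal sketch using Python's standard library; the two rules it applies (exactly one H1, no skipped levels) are my assumptions about what a "correct hierarchy" means, and the names are hypothetical:

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects the level of every h1-h6 tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def audit_headings(html: str) -> list[str]:
    parser = HeadingAudit()
    parser.feed(html)
    problems = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    # Flag jumps such as h1 -> h3, where a level is skipped on the way down.
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            problems.append(f"h{prev} is followed by h{cur} (skipped level)")
    return problems


print(audit_headings("<h1>Sweaters</h1><h1>Sale</h1><h3>Navy</h3>"))
```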

Product URLs

There is an option in your Magento settings to only use top-level product URLs, rather than including every category and subcategory hierarchy in the URL itself. I’d suggest you do this.

If you’re using the hierarchical URLs, you may face a duplication issue, as the same product will exist on multiple URLs. This isn’t an issue faced only by Magento, but by a number of e-commerce platforms (WooCommerce especially).

Using top-level product URLs also helps reduce indexation bloat and allows you to have a single version of a product that can be nested under different categories.

If you’re already utilizing category paths in your URL strings, make sure that you’re using canonicals to identify the main version of each product.

If you’re moving away from category paths to top-level product URLs, make sure that you update all internal links, and have your developer write the necessary redirect rules so that they are implemented when the switchover happens.
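
To give a sense of what those redirect rules need to cover, here is a minimal Python sketch that maps category-path URLs to their top-level equivalent. It assumes the product slug is always the last path segment; the paths and function names are hypothetical, and the real rules would live in your server configuration or in Magento itself:

```python
from urllib.parse import urlsplit


def top_level_path(category_url_path: str) -> str:
    """Keep only the final path segment, e.g. /men/knitwear/x.html -> /x.html."""
    path = urlsplit(category_url_path).path
    slug = path.rstrip("/").rsplit("/", 1)[-1]
    return "/" + slug


def redirect_map(old_paths):
    """Return {old_path: new_path} for every path that needs a 301 redirect."""
    redirects = {}
    for old in old_paths:
        new = top_level_path(old)
        if new != old:  # already top-level, nothing to redirect
            redirects[old] = new
    return redirects


print(redirect_map([
    "/men/knitwear/navy-sweater.html",
    "/sale/navy-sweater.html",
    "/navy-sweater.html",
]))
```

The same map is a handy checklist for updating internal links: any link still pointing at a key in the map needs changing.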

Faceted Navigations & Parameter URLs

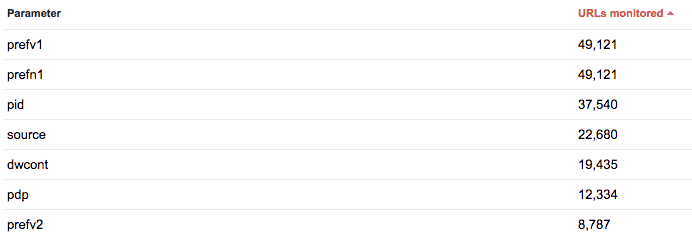

Faceted navigations are notorious for creating duplicate content issues and indexation bloat, regardless of platform. Magento is no different and the parameter URLs generated by the faceted navigation are often indexed.

The faceted navigation isn’t the only culprit when it comes to generating parameter URLs. A number of extensions also use them to create directories, which is something you need to review if you use Manadev.

Indexation bloat is an issue, as Google only crawls a portion of your website at a time, and you want it to focus on the core pages that provide user value (and have value to the business). If there are hundreds or thousands of URL parameters for it to contend with, you aren’t optimized for crawling.
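
To see which parameters are responsible for the most bloat, you can count them across a list of crawled or indexed URLs. A minimal Python sketch, assuming you already have such a list from a crawler export (the URLs and the function name are hypothetical):

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def parameter_counts(urls):
    """Count how many URLs each query parameter name appears in."""
    counts = Counter()
    for url in urls:
        query = urlsplit(url).query
        # Use a set so a parameter repeated within one URL is counted once.
        for name in {key for key, _ in parse_qsl(query, keep_blank_values=True)}:
            counts[name] += 1
    return counts


urls = [
    "https://example.com/sweaters?color=navy",
    "https://example.com/sweaters?color=red&size=m",
    "https://example.com/sweaters",
]
print(parameter_counts(urls).most_common())
```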

So what can be done to resolve parameters appearing in Google’s index?

Handling Parameters In Google Search Console

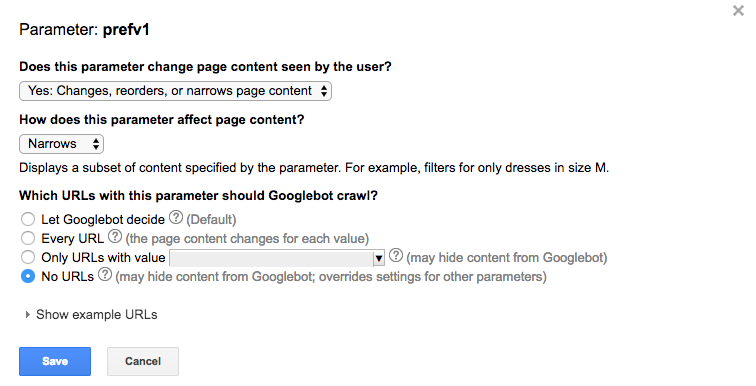

A quick win for resolving the indexation bloat caused by URL parameters is to amend how Google handles them within Google Search Console.

By declaring how the parameter changes the content for the user and by telling Googlebot not to crawl any of the content (bottom tick box), you can prevent the issue.

You could also block the parameter in the robots.txt file but this can cause issues with PPC and other programmatic campaigns, so I would check before doing so.

Nofollow Faceted Navigation Links

Another favorite implementation of mine is to nofollow the links behind the faceted navigation.

By adding a nofollow to these links, Google won’t crawl them. That being said, if they are linked to from the sitemap or from other areas of the website, the URLs can still be discovered and appear in Google’s index.
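
The template logic behind this is simple. Here is a minimal Python sketch, assuming that any link carrying a query string is a facet link; that rule, and the function name, are assumptions for illustration rather than how Magento renders its layered navigation:

```python
from html import escape
from urllib.parse import urlsplit


def facet_link(href: str, label: str) -> str:
    """Render an anchor, adding rel="nofollow" when the URL has parameters."""
    rel = ' rel="nofollow"' if urlsplit(href).query else ""
    return f'<a href="{escape(href, quote=True)}"{rel}>{escape(label)}</a>'


print(facet_link("/sweaters?color=navy", "Navy"))
print(facet_link("/sweaters", "All sweaters"))
```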

AJAX Navigation

This can be a nightmare solution to implement – especially if you don’t have an experienced Magento developer on call.

Using an AJAX navigation will allow users to alter the content on the category/product listing page without changing the URL. However, it can cause a number of other technical issues, such as excess JavaScript that slows the site down.

Magento URL Rewrites

URL rewrites, and how Magento creates URLs, are an issue I see probably 90 percent of the time when working with new Magento clients.

A common issue is that Magento can produce category or product URLs that revert back to the original /catalog/ path, and duplicate the URLs produced based on the title of the page.

These URLs should be blocked (via robots.txt) and monitored, especially when refreshing, as some can revert back without reason and no 3xx redirect will be applied.
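
Once the rule is in place, it is worth testing that it blocks what you expect and nothing more. A minimal sketch using Python's standard `urllib.robotparser`; the `Disallow: /catalog/` rule and the example URLs are assumptions for illustration, so check them against the paths your own store produces:

```python
from urllib.robotparser import RobotFileParser

# Assumed rule set: block the raw /catalog/ paths for all crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /catalog/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_blocked(url: str, user_agent: str = "Googlebot") -> bool:
    """True when the rules above forbid the given crawler from fetching the URL."""
    return not parser.can_fetch(user_agent, url)


print(is_blocked("https://example.com/catalog/product/view/id/42"))
print(is_blocked("https://example.com/navy-sweater.html"))
```

Note that this standard-library parser does simple prefix matching, so it is only suitable for checking plain path rules like the one above.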

Number Appendages

Another common issue with Magento’s rewrites is the platform’s habit of adding a number to the end of the URL.

This happens when the URL is already being used, and the rewrites are replacing the old URL without changing it. This can cause a number of duplicate URLs (all appended by a number) and can in some cases be a big issue if undetected and unresolved.

This can also be caused by updating products via a CSV. If this is the case, it’s a simple fix: all the developer has to do is remove the rewrites from the rewrite table.
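
Detecting these duplicates in a crawl export is straightforward. The Python sketch below flags any path that ends in a number when the same path without the number also exists. The `-1.html` style suffix and the function name are assumptions about how the duplicates look on your store:

```python
import re

# Matches e.g. /navy-sweater-2.html or /navy-sweater-2 (assumed suffix pattern).
SUFFIX = re.compile(r"^(?P<base>.+?)-(?P<n>\d+)(?P<ext>\.html)?$")


def numbered_duplicates(paths):
    """Return {numbered_path: original_path} for likely rewrite duplicates."""
    known = set(paths)
    flagged = {}
    for path in paths:
        match = SUFFIX.match(path)
        if match:
            original = match.group("base") + (match.group("ext") or "")
            # Only flag when the un-numbered URL exists too, so legitimate
            # slugs such as /iphone-7.html are left alone.
            if original in known:
                flagged[path] = original
    return flagged


print(numbered_duplicates([
    "/navy-sweater.html",
    "/navy-sweater-1.html",
    "/navy-sweater-2.html",
    "/iphone-7.html",
]))
```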

Pagination

Product listing pages are (correctly) broken up into pages, which is good for the user but can lead to duplicate blocks of text.

In 2011, Google introduced the rel="next" and rel="prev" link attributes to allow webmasters to indicate paginated pages.

To go one step further, I’d also add a meta robots tag with the value "noindex,follow" to the paginated pages.
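
Put together, the head tags for a paginated listing could be generated along these lines. This is a Python sketch for illustration only; it assumes a `?p=N` page parameter, that page 1 lives at the clean URL, and that only pages 2 and onward get the noindex tag:

```python
def pagination_head_tags(base_url: str, page: int, last_page: int) -> list[str]:
    """Return the prev/next link tags and robots meta tag for one listing page."""

    def page_url(n: int) -> str:
        # Assumption: page 1 is the clean category URL, later pages use ?p=N.
        return base_url if n == 1 else f"{base_url}?p={n}"

    tags = []
    if page > 1:
        tags.append(f'<link rel="prev" href="{page_url(page - 1)}" />')
        # Assumption: keep page 1 indexable, noindex the rest of the series.
        tags.append('<meta name="robots" content="noindex,follow" />')
    if page < last_page:
        tags.append(f'<link rel="next" href="{page_url(page + 1)}" />')
    return tags


for tag in pagination_head_tags("https://example.com/sweaters", 2, 3):
    print(tag)
```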

Conclusion

It’s important to remember that every Magento implementation is different. Custom templates may bring about new SEO challenges that can only be identified on a case by case basis.

A technical SEO should be included at the earliest stage of the site build process and remain involved throughout development and after launch to make sure every aspect of the Magento site is optimized.

Image Credits

Screenshots by author. Taken July 2017.