At the end of May, I wrote a round-up post of expert opinions on the latest big Google updates: Panda 4.0 and Payday Loan 2.0. Now that it’s been a month or so, I thought I’d ask another group of experts how the update has continued to affect their clients’ sites and their own SEO progress. I asked our experts the following:

“Now that Panda 4.0 has been out for about a month, have you seen any long-standing changes to website traffic or SERPs? If so, what is your recommendation for first action steps to make the best out of this Google update?”

From Marcus Tober, CTO and Founder of Searchmetrics:

Unlike with other recent updates, I didn’t see many big changes after the release of Panda 4.0. A couple of well-known brands that landed on the loser list recovered, like yellowpages.com, which returned to nearly the same visibility it had before. When there are quick recoveries like this, it usually means that Google is still tweaking the update, or these sites were never actually affected by Panda. Google did confirm they made several updates prior to Panda, which could have caused the drop in the sites that recovered quickly.

However, in general we only saw enhancements made by Google versus major changes, which makes sense. Matt Cutts told me the update to Panda 4 was created by a new team at Google, and that it was a re-work of Panda. So they have to see what the impact is on the user, and have to learn and adapt. Between major Panda iterations, changes can take a couple of months to understand. I expect the same here.

From Joost de Valk, Creator of Yoast:

We’ve gotten a series of new review clients, as well as people commenting on our blog posts, who suffered severely from the last update. To be fair, so far I’ve seen only a few false positives, and those were fixed by allowing Google to spider CSS & JS, so they were probably not “truly” Panda. Most of the other sites I’ve seen get hit actually surprised me; I would’ve thought earlier iterations of Panda would’ve already hit those.

From AJ Kohn, Owner of Blind Five Year Old:

I’ve seen a number of sites see material gains in traffic (50%-400%) and one that was put into Panda Jail. If you’re trying to lose weight, the best advice is boring: diet and exercise. So I have similar boring advice for Panda: quality content and good user experience.

Eliminate thin content pages (don’t be timid here) or those that are targeting fractional keywords. Don’t rely on aggregated or syndicated content and ensure that the user experience makes it easy to digest your content and complete tasks.

Do the hard work and deliver content and experiences that satisfy user intent.

From Mindy Weinstein, SEO Manager at Bruce Clay Inc.:

Just as expected, websites with thin, syndicated, or scraped content were hurt. However, other sites benefited from Panda 4.0 by gaining greater visibility, so the update was a welcome change for some.

It is important to remember what Google is trying to accomplish with Panda: they want to improve the quality of their product. They are not trying to punish websites; instead, they want to serve up better search results for users.

If a website has been affected by Panda 4.0, the first action step is to audit the content. This kind of audit is not easy, as you have to look at the website as objectively as possible. That might mean you need to bring in another person to help give an unbiased opinion. Ask questions such as: Is the website content thin, duplicate, or simply of little value? Is it hard to read? If so, you might need to start consolidating content or getting rid of it altogether. You will also need to create new content. Make sure you have a good strategy in place and focus on developing content that is engaging and interesting to users. You want your contribution to the web to be unique and valuable.
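
As a rough starting point for that kind of audit, the questions above can be turned into a first-pass script. The sketch below is only an illustration: the word-count and sentence-length thresholds are hypothetical choices, not numbers Google has published, and the `pages` mapping of URL to body text is an assumed input you would build from your own crawl. It cannot judge whether content is valuable; it only surfaces pages worth a human look.

```python
import hashlib
import re

THIN_WORD_LIMIT = 150      # hypothetical threshold, not a Google number
LONG_SENTENCE_WORDS = 30   # hypothetical cutoff for "hard to read"


def audit_pages(pages):
    """Return {url: [flags]} for pages that look thin, duplicated, or hard to read."""
    seen = {}    # content fingerprint -> first URL seen with that exact text
    report = {}
    for url, text in pages.items():
        flags = []
        words = re.findall(r"[A-Za-z0-9']+", text.lower())

        # Thin content: very few words on the page.
        if len(words) < THIN_WORD_LIMIT:
            flags.append("thin")

        # Exact duplicates: same words in the same order as an earlier page.
        fingerprint = hashlib.sha256(" ".join(words).encode("utf-8")).hexdigest()
        if fingerprint in seen:
            flags.append("duplicate of " + seen[fingerprint])
        else:
            seen[fingerprint] = url

        # Crude readability proxy: average words per sentence.
        sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
        if sentences and len(words) / len(sentences) > LONG_SENTENCE_WORDS:
            flags.append("long sentences")

        if flags:
            report[url] = flags
    return report
```

Calling `audit_pages({"/a": "Short page.", "/b": "Short page."})` would flag both pages as thin and the second as a duplicate of the first. Anything flagged still needs the objective human review described above.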

From A.J. Ghergich, Founder of Ghergich & Co.:

If you see a large drop in organic rankings, the first step is to determine if it was Panda or Penguin that brought you down, or a combination of both.

For Penguin: 9 out of 10 cases I look at were penalized because of WAY TOO MANY keyword rich links pointing to a site.

For Panda: Most cases I see are doing one (or both) of these things wrong:

- Thin Content: producing original but crappy articles for the purpose of SEO that lack true understanding of the topic. You also may be aggregating a ton of content from external sources. The hallmark of this content is little to no social shares because no actual human being would post this on their Facebook account.

- Doorway Pages: over-optimizing your internal links and also cross-linking from too many sites under your own control.

For example:

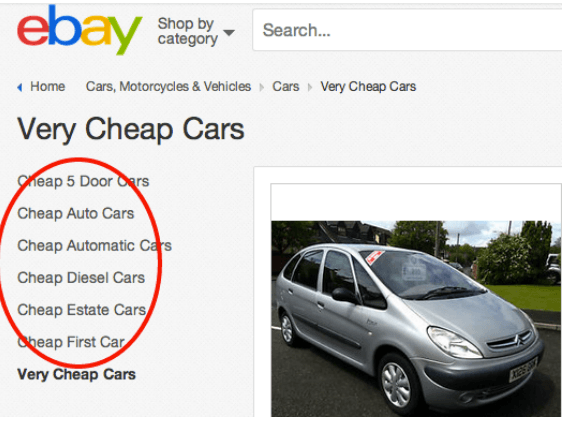

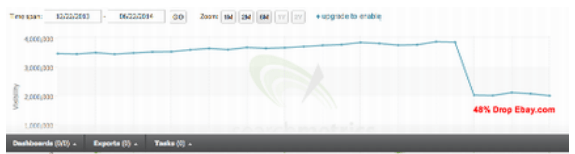

I can’t think of any real reason why eBay has a “Very Cheap Cars” page (http://www.ebay.co.uk/bhp/very-cheap-cars) that links out to other ‘made for search engine’ pages with keyword-rich anchors. The only thing that comes off looking very cheap is eBay’s SEO. eBay recently paid a big price for these tactics and was penalized by Google.

ATTN: Stop practicing SEO like it’s 2005. Being a big brand is no longer going to save you, and it shouldn’t.

Note: There is some debate as to whether the eBay penalty was a manual action by Google or algorithmically applied via the latest Panda update. Only Google knows the answer to this. However, eBay is certainly guilty of creating the kind of thin, over-optimized content that is a big no-no in the post-Panda world.

Takeaways:

If eBay is getting busted (manually or algorithmically) for creating these types of pages, what chance do you think you have of getting away with anything similar? Only produce high quality content even if that means producing drastically less content.

Do not over-optimize your internal site structure and create thin doorway style pages in an attempt to game Google. (No, your footer is not a good place to stuff some keyword rich links!)

Don’t let ‘Pandageddon’ happen to a site you love!

From Glenn Gabe, Digital Marketing Consultant at G-Squared Interactive:

I help a lot of companies with Panda, and have since February of 2011. Panda 4.0 was HUGE. Everything about it was big. The recoveries were big (for companies I was helping deal with Panda hits) and the fresh hits were severe. I’ve had a number of companies reach out to me since P4.0 rolled out that have lost 60%+ of their Google organic traffic overnight. Some have lost 80%+. Like I said, it was huge.

Regarding first steps for those that are hit, it’s impossible to tackle Panda with band-aids. A full audit must be conducted (through the lens of Panda). Those audits typically produce a number of important action items. Panda targets low quality content, but “low quality” can mean a lot of things. It can mean thin content, duplicate content, low-quality affiliate content, scraped content, technical problems causing content quality issues, etc. An audit will surface problematic areas to address. That’s the first step (it’s a big step, but it’s critically important).

Execution is key once the audit is done. Panda rolls out monthly, so companies have a chance of recovery once per month (typically). So, making the right changes as quickly as possible is the key to recovery. Unfortunately, many companies need to wait months to see recovery, so getting the site in order is critically important. I wrote a case study where a company did everything right with fixing its site and waited 6 months to recover. That was extreme in my opinion, but that shows how hard it can be to recover.

See some of Glenn’s posts on Panda (with case studies!) at G-Squared Interactive’s blog, Search Engine Watch, and SEJ.

From Tommy Walker, Editor of ConversionXL.com and Host of The Inside Mind:

We’re not really noticing any decreases that aren’t consistent with YoY trends. It’s been our editorial policy since day one to publish nothing but extremely well-researched content with a 1,850-word minimum and links to academic research, case studies, and behavioral psychology reports.

From Jim Boykin, Founder and CEO of Internet Marketing Ninjas:

Yes, we’ve worked with many sites over the years that have been affected by Panda, but Panda 4.0 only hit two of our clients. Those two clients were warned that there were patterns in their websites that fit the patterns Google Panda penalizes. Both of these sites had over 100,000 pages of content, and both had tons of location pages. For example, one client also had pages for every zip code. Both clients had tens of thousands of near-identical pages where only the location was changed. For example, the Denver, Colorado page was the same as the Troy, New York page. Both of these sites also had tons of “near identical” product pages.

The solution is basically to greatly reduce the size of the site and ensure that every page is unique and useful. For example, we’re bringing one site from 220,000 pages indexed in Google down to 3,900 pages.
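
To decide which pages count as "near identical" in the first place, one common approach is to compare pages by their overlapping word sequences. The sketch below is one possible way to do it, not the method used on these client sites: it builds three-word "shingles" for each page and scores pairs with Jaccard similarity. The function names, the `pages` input, and the 0.8 default threshold are all illustrative assumptions.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Return the set of overlapping word n-grams ("shingles") in text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets: shared items divided by all items."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(pages, threshold=0.8):
    """Return (url_a, url_b, score) for every page pair at or above threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            pairs.append((url_a, url_b, score))
    return pairs
```

Two location pages that differ only in the city name share almost all of their shingles, so they score close to 1.0, while genuinely different pages score near 0. Comparing every pair is fine for a few thousand pages; for a site with hundreds of thousands of URLs you would likely need a faster approximation such as MinHash.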

We did that by blocking the majority of pages via robots.txt. We’re blocking everything that’s near duplicate until we can write unique content for each page.
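
Before shipping robots.txt rules like these, it helps to confirm they block what you intend and nothing else. The following is a minimal sketch using Python's standard `urllib.robotparser`; the `/locations/zip/` and `/products/variants/` paths and the example.com URLs are hypothetical placeholders, since the actual rules used for these clients are not given.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: these paths stand in for wherever the
# near-duplicate pages live on your own site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /locations/zip/
Disallow: /products/variants/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

Running `blocked_urls` over a sample of URLs from each section of the site gives a quick check that the hand-written pages you want to keep are still crawlable.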

We also used the canonical tag on many of their near-identical pages.

And for pagination issues, we implemented rel="prev" and rel="next".
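
For readers who have not used these tags, the sketch below shows roughly what they look like when generated for a page's `<head>`. It is an illustration only: the helper names and the `?page=N` URL scheme are assumptions, not details from these client sites.

```python
from html import escape


def canonical_tag(preferred_url):
    """Build a canonical link tag pointing at the preferred version of a page."""
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}">'


def pagination_tags(base_url, page, last_page):
    """Build rel="prev" / rel="next" link tags for one page of a paginated series.

    Assumes a hypothetical ?page=N URL scheme; adapt to your own URLs.
    """
    href = escape(base_url, quote=True)
    tags = []
    if page > 1:
        tags.append(f'<link rel="prev" href="{href}?page={page - 1}">')
    if page < last_page:
        tags.append(f'<link rel="next" href="{href}?page={page + 1}">')
    return tags
```

The first page of a series gets only a `next` tag, the last page gets only a `prev` tag, and every page in between gets both.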

So, now we’re busy writing content. There’s no way we’re going to be putting up 220,000 pages again, but these clients are fine with us writing 100-200 pages of content for them each month, and focusing on the locations where they’ve traditionally sold the most products. We predict that even if the penalty is removed, this one site will still see a 64% reduction in traffic from our killing 99% of the URLs.

If we get 1,000 new pages of original content up, we predict we could pick up about half of the 64% reduction. It may take two years, and a lot of content, to get the traffic back to where it once was.
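
The arithmetic behind those predictions can be checked with a few lines. This sketch uses only the figures quoted above (220,000 pages, 3,900 pages, a 64% drop, and recovering half of it); the function and variable names are made up for illustration.

```python
def remaining_share(drop, recovered_fraction_of_drop):
    """Share of original traffic left after a drop, once part of the drop is won back."""
    return (1 - drop) + drop * recovered_fraction_of_drop


pages_before = 220_000
pages_after = 3_900

# Share of URLs removed from the index.
share_removed = 1 - pages_after / pages_before

# Traffic right after the cut, and after winning back half of the loss.
after_cut = remaining_share(0.64, 0.0)
after_new_content = remaining_share(0.64, 0.5)

print(f"URLs removed: {share_removed:.1%}")
print(f"Traffic after cut: {after_cut:.0%} of original")
print(f"Traffic after new content: {after_new_content:.0%} of original")
```

So the "99% of the URLs" figure is a rounding of roughly 98%, and recovering half of the 64% loss would leave the site at about 68% of its earlier traffic, which is why getting all the way back is expected to take much longer.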

We make sure every page is unique: not just a few words changed, but each page written by hand. Some pages have 200 words, and some have 600 words (and everything in between). We write location pages that mention real landmarks in those locations, and we add a few external links to trusted resources in each location.

Luckily, these clients understand that they once had “regular traffic”, then they added hundreds of thousands of pages and had mega traffic, and now they’re back to “regular traffic” for now. It’s a game they played, and they won for a while. Now they realize they must write unique useful content, and it’s going to take time to get great again.