With all of the buzz about Social Media Marketing, I think too many companies overlook the importance of having a rock solid technical structure (SEO-wise). Sure, Social Media Marketing is important, but let’s not forget that SEO can drive quality traffic 24/7, and for the long term. When it comes to building SEO strength, you absolutely need a clean and crawlable structure so the search engines can easily crawl and then index your content. If your site can’t be crawled or indexed properly, you’re essentially dead in the water. You can build links until the cows come home and it won’t make a difference SEO-wise.

I perform a lot of SEO Audits at G-Squared, across a wide range of web sites. I’m a firm believer that a thorough technical audit is the most powerful deliverable in SEO. It enables you to understand the strengths, weaknesses, risks, and opportunities of your website in a relatively short amount of time. In addition, the audit yields a remediation plan, which can help build your SEO roadmap moving forward. Once a remediation plan has been presented, some changes take months to implement, while others can be implemented quickly. My post today includes two examples that fit into the second bucket (although the second example could be more complex to change, based on the size of your web site).

The Cost of One Line of Code

When performing audits, you never know what you’re going to find. And what you find could very well turn around the performance of the website SEO-wise. Sometimes you come across serious, and glaringly obvious, examples of how the wrong code could kill SEO for a website. And believe it or not, that sometimes could be just one line of code. In this post, I’m going to explain two examples I’ve come across recently where one line of code was destroying a site’s SEO performance. Let’s take a closer look at each situation below.

WordPress and NoIndex/NoFollow

As part of an SEO audit, I typically review the core domains in use by a company. That often includes any blogs that are being used. For larger audits, the discovery stage of the project includes interviewing key people at the company at hand. One person I spoke with during a recent audit was baffled by how poorly their blog content was performing in Natural Search. This person wasn’t technical, and wasn’t extremely familiar with SEO, which helped explain why the core problem I found went undiscovered for so long. According to this person, not only was traffic to the blog non-existent, but he couldn’t even find examples of the posts ranking (at all). Yes, the first red flag had been raised.

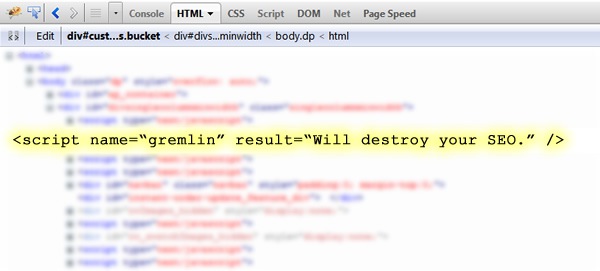

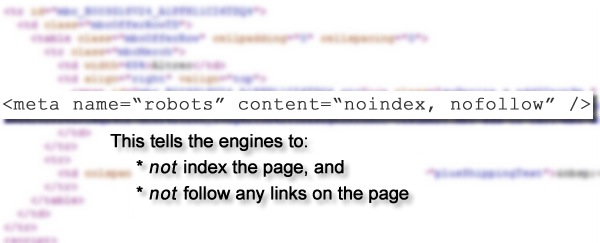

Upon further review (about 15 seconds on the blog), I pulled up the source code. I searched for “noindex” and lo and behold, there was a meta robots tag using noindex and nofollow on every page of the blog. Yes, the blog was telling the search engines to not index any content and to not follow links on any of the pages. Clearly, this is the kiss of death for a website or blog. A site: command in Google revealed “0” pages indexed for the blog.

The development team quickly removed the tag, and Google and Bing began indexing the blog content within a few days. Now the blog is driving quality traffic to the site and has built up moderate to strong rankings across a few hundred blog posts. If the company hadn’t had the audit conducted, they would have kept thinking that the blog (and blogging) just didn’t work. Yes, one line of code was killing their chances of strong rankings, traffic, and conversions.
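The check I ran by hand (view source, search for “noindex”) is easy to script if you want to run it across many pages. Here is a minimal sketch in Python using only the standard library. The class and function names are my own for illustration, and the sample page is made up. It only looks at the meta robots tag, so it would not catch directives sent another way (such as an HTTP header).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives of every <meta name="robots"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").strip().lower() == "robots":
            # content looks like "noindex, nofollow"
            for part in attr.get("content", "").split(","):
                if part.strip():
                    self.directives.add(part.strip().lower())


def robots_directives(html):
    """Return the set of meta robots directives found in an HTML string."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page source, similar to what the blog was serving.
page = '<html><head><meta name="robots" content="noindex, nofollow"></head><body></body></html>'
blocked = robots_directives(page) & {"noindex", "nofollow"}
print(sorted(blocked))  # prints ['nofollow', 'noindex']
```

If that set comes back non-empty on pages you want ranking, you have found your one line of code.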

Canonical URL Tag and eCommerce

OK, so that was a good example of how noindex and nofollow could hurt a blog or website SEO-wise. Now let’s take a look at an example of how one line of code could kill SEO for an ecommerce website. Based on my experience with ecommerce SEO, application development, and CMS packages, I end up getting a lot of calls from ecommerce retailers. Recently, I performed an SEO audit for an ecommerce company that was convinced SEO wouldn’t work for them. They spent the past few years trying “this”, then trying “that”, adding a dab of “this”, and a pinch of “that”, and all for nothing. Their natural search performance was abysmal. They had never hired an SEO consultant or agency before, and everything they had implemented was completed on their own (with very little experience with SEO).

Based on the time and effort they already put into the website to build SEO strength, they wanted someone to perform an extensive SEO audit. Specifically, they wanted to know if there were any technical limitations or barriers on the site that could be causing serious SEO problems. Smart move.

It wasn’t long into the audit that I found a huge problem, and it had to do with the canonical URL tag. If you’re not familiar with the canonical URL tag, it’s one line of code you can place in the HTML of your web pages that helps the search engines better understand the canonical URLs on your web site. It comes into play, for example, when one piece of content ends up resolving at several URLs (causing duplicate content). The tag informs the engines that Page X holds the same content as Page Y, and that all of the Search Equity built to Page X should be attributed to Page Y. The canonical URL tag is especially important for ecommerce websites, since the nature of ecommerce applications (and CMS packages) can generate a massive amount of duplicate content.

The canonical URL tag looks like:

<link rel="canonical" href="http://www.domain.com/canonical-url.html" />

Here is a Quick Example:

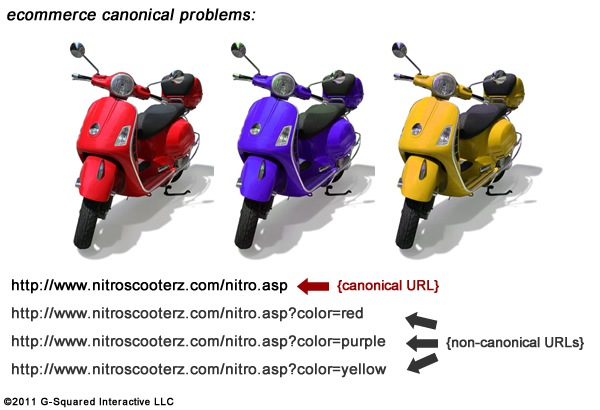

Imagine you launched a new product that you’re selling on your website. It’s the G-Squared Nitro Scooter, and it’s available in three amazing colors. Your ecommerce CMS enables visitors to change the color of the scooter when viewing the product page, but the URL changes when that happens. Therefore, you can have four pages for every one you should have on the site (the original page and then one for each color). All of the content is the same, the metadata is exactly the same, etc. This is a great example of when the canonical URL tag could help.

Simply adding the tag to each variation of the scooter page would tell the engines that all of the SEO power should be attributed to the original page that loads (the original product page). Now imagine this is happening across all 1,250 of your products. The problem could get large, and ugly, very quickly.
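To make the scooter example concrete, here is a rough sketch of how a template could build the tag for each color variation. It assumes the color is carried in a `color` query parameter, which is my assumption for illustration (your ecommerce CMS may change the URL in a different way), and the function names and URLs are hypothetical.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: query parameters that only change the variation, not the product.
VARIANT_PARAMS = {"color"}


def canonical_url(url):
    """Drop variation-only parameters so every color maps to one product URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in VARIANT_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_tag(url):
    """Build the canonical URL tag for the page at the given URL."""
    return '<link rel="canonical" href="%s" />' % escape(canonical_url(url), quote=True)


# Three color variations of the same (hypothetical) product page.
for color in ("red", "blue", "green"):
    print(canonical_tag("http://www.domain.com/nitro-scooter.html?color=" + color))
```

All three variations end up with the same tag, pointing at the original product page. Note that other parameters (such as a product ID) are kept, since they identify the product itself.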

Bad Implementation, Bad Results

After the canonical URL tag was released, there were many websites that implemented the tag incorrectly. Some companies used it incorrectly on product pages, category pages, site-wide, with pagination, etc. When you break it down, the right implementation can greatly help your SEO efforts, but the wrong implementation can drive a dagger into the heart of your SEO performance. And that’s exactly what I found when completing the ecommerce SEO audit I mentioned earlier.

The site had almost no organic search traffic. When checking rankings, I could barely find any pages that were ranking for target keywords. There was obviously a serious issue. Upon checking the source code of various product pages, I noticed the canonical URL tag was being used. The first problem was that the same href was being used on every page of the website. That means the ecommerce website was telling the engines that every page on the site should be attributed to one other page. Think about it: how could 1000+ pages of unique content be the non-canonical versions of one other page? They couldn’t, and this implementation was seriously confusing the search engines.

But It Gets Worse

The second (and possibly more serious) problem was that the destination URL being used in the canonical URL tag for every page was broken. Yes, this made matters even worse. The URL was added in a way where the product ID (the querystring value that helped the site resolve the right product) was left out of the tag. Note, when the product ID was left out of the URL, the page threw a 500 error (application error). So, the site was telling the engines that all pages should pass their Search Equity to a page that didn’t resolve on the site. Bad move.

Example of broken canonical URL tag:

<link rel="canonical" href="http://www.domain.com/canonical-url.html?productid=" />

Based on what I explained so far, can you tell why the site was in pathetic shape, SEO-wise? The correct use of the canonical URL tag for this web site would be to add it to each product page and reference the canonical URL for each specific product. That would ensure each non-canonical product page directed its power back to the core product page (the canonical URL). Instead, the web site was pointing thousands of pages (with unique content) to one page that threw an application error.
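Both problems can be flagged automatically during an audit. Below is a minimal sketch in Python that takes page source you have already downloaded and reports (1) canonical URLs with an empty querystring value, like the missing product ID, and (2) a canonical href shared by every page. The function names, the `pages` structure, and the sample URLs are assumptions for illustration. The sketch does not request the canonical URL itself, so confirming something like the 500 error would be a separate step.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import parse_qsl, urlsplit


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag on a page."""

    def __init__(self):
        super().__init__()
        self.href = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.href is None:
            self.href = attr.get("href")


def canonical_href(html):
    parser = CanonicalParser()
    parser.feed(html)
    return parser.href


def audit(pages):
    """pages maps each page URL to its HTML; returns a list of (url, problem)."""
    hrefs = {url: canonical_href(html) for url, html in pages.items()}
    counts = Counter(href for href in hrefs.values() if href)
    problems = []
    for url, href in hrefs.items():
        if not href:
            continue
        query = urlsplit(href).query
        empty = [key for key, value in parse_qsl(query, keep_blank_values=True) if value == ""]
        if empty:
            problems.append((url, "empty query parameter: " + ", ".join(empty)))
        if len(pages) > 1 and counts[href] == len(pages):
            problems.append((url, "same canonical as every other page"))
    return problems


# Hypothetical pages carrying the broken tag from the audit.
broken = '<head><link rel="canonical" href="http://www.domain.com/canonical-url.html?productid=" /></head>'
pages = {
    "http://www.domain.com/canonical-url.html?productid=1": broken,
    "http://www.domain.com/canonical-url.html?productid=2": broken,
}
for url, problem in audit(pages):
    print(url, "->", problem)
```

On a healthy site, each product page would report no problems, because every canonical href would carry its own product ID and differ from page to page.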

Don’t Let One Line of Code Kill Your SEO

If you are having trouble with SEO, it very well could be a technical issue or barrier that’s residing on your web site. If that’s the case, building more content and links won’t have any impact SEO-wise. You need to identify the technical issues, form a plan for fixing them, and then implement those changes as quickly as you can.

In closing, it’s amazing how just one line of code can kill your SEO efforts. But what’s more amazing is how some companies let that one line of code remain on the site.

![Two Examples of How One Line of Code Could Kill Your SEO [Case Studies]](https://cdn.searchenginejournal.com/wp-content/uploads/2011/11/Cade-Studies.jpg)