One unfortunate part about the world of SEO is that sometimes things go wrong.

This can happen because you:

- Get over-aggressive with SEO tactics.

- Do things that are considered wrong by Google because you don’t know any better.

- Use shady/spammy tactics.

If SEO has gone wrong for you, this chapter will go through what you need to do to get back on track.

Diagnosis

There are two major ways to learn that you have a problem.

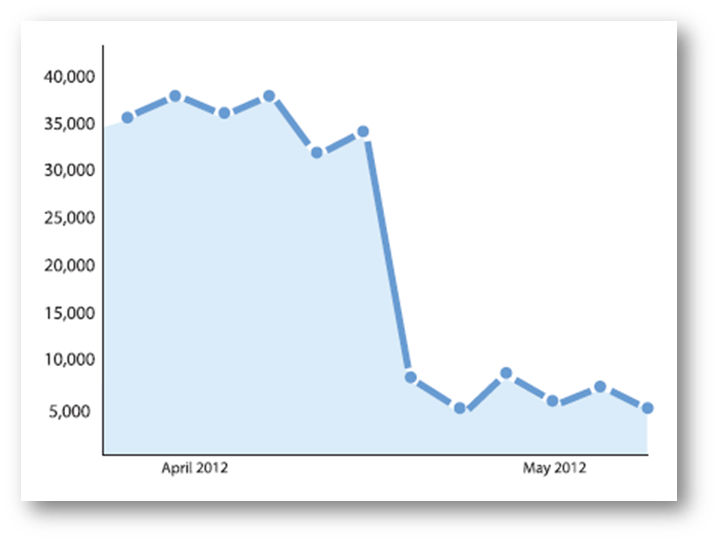

The first way you learn of a problem is seeing a large drop in the organic search traffic to your site.

Sometimes that drop can be catastrophic in nature, and it might look something like this:

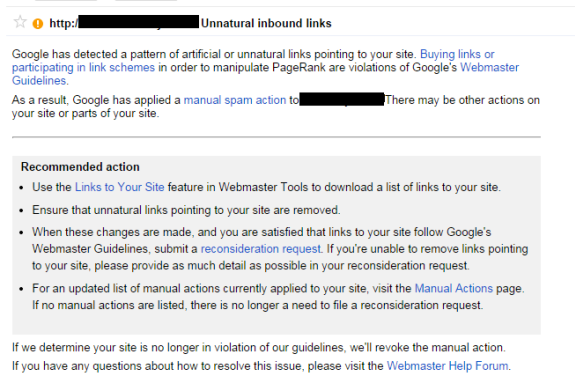
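If you would rather not rely on eyeballing a chart, one rough way to flag a drop like this is to compare the latest week of organic sessions with the week before it. Here is a minimal Python sketch. The function name, the 30 percent threshold, and the sample numbers are assumptions for illustration only, not anything that Google or Search Console defines.

```python
def traffic_drop(daily_sessions, window=7):
    """Return the fractional drop between the previous window and the latest one.

    daily_sessions: daily organic session counts, oldest first.
    A positive result means traffic fell; 0.4 means a 40 percent drop.
    """
    if len(daily_sessions) < 2 * window:
        raise ValueError("need at least two full windows of data")
    previous = sum(daily_sessions[-2 * window:-window])
    latest = sum(daily_sessions[-window:])
    if previous == 0:
        return 0.0  # nothing to compare against
    return (previous - latest) / previous


# Hypothetical data: a steady week followed by a much weaker week.
sessions = [1000] * 7 + [400] * 7
drop = traffic_drop(sessions)
if drop >= 0.30:  # assumed cutoff for a "large" drop; tune it for your site
    print(f"Organic traffic fell {drop:.0%} week over week")
```

Comparing whole weeks rather than single days keeps normal weekday and weekend swings from triggering false alarms.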

The other way you might learn about a problem is if you get a message directly within Google Search Console telling you about it.

If you haven’t signed up for Search Console, do so immediately.

Here’s where you can find these messages in Search Console:

Overview of Manual Penalties

When you get notified about a problem within Search Console, this is considered a manual penalty.

What that means is that a person at Google actually analyzed your site, and as a result, assessed a penalty to the site.

When this happens, the message in Search Console normally gives you some high level description of the problem.

The three most common manual penalties are:

- Sitewide link penalties.

- Partial link penalties.

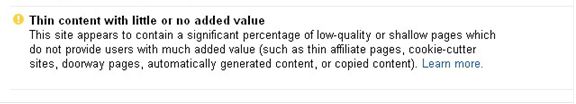

- Thin content penalties.

Here is an example of such a Search Console message focused on links:

Here is an example of a manual thin content penalty notice:

Once you have a manual penalty, you must follow a basic three-step process:

- Determine the cause of the penalty. (For example, if you have a link penalty, you need to determine which links Google doesn’t like.)

- Remedy the problems.

- Submit a Reconsideration Request to Google asking them to remove the penalty.

Overview of Algorithmic Penalties

These are caused by algorithms that Google uses to identify sites it considers to be poor in quality and then lower their rankings.

The most well-known of these are:

- Panda (now part of the main Google algorithm): Focuses on identifying poor quality content.

- Penguin (now part of the main Google algorithm): Targets poor quality links.

- Search Quality: A lesser known algorithm that evaluates site/page quality.

- Top Heavy Ads: Focuses on sites that use too much advertising.

- Payday Loans (Spammy Sites): Identifies spammy SEO practices that Google has seen as a common practice on payday loan sites, but the algorithm is applied to any site using those practices.

Technically, Google considers these to simply be algorithms, and not penalties, so we’ll go with their terminology.

But the practical impact on you is the same: you see a drop in your traffic.

If you have one of these algorithms hurting your traffic, you need to try to figure out what the cause is, as Google doesn’t tell you about these with a message in Search Console.

Google used to announce updates of algorithms like Panda and Penguin, but that doesn’t typically happen any longer, so that will leave you with the challenge of working it out on your own.

This will require a strong understanding of what the algorithms do, and then a harsh look at your site to see if you can figure out what the problem is.

Links Google Doesn’t Like

If you’ve received a manual link penalty, this section will help you determine what types of links may be causing the problem.

You can also use this section to understand the types of links that Penguin is likely to act on, but for Penguin it’s important to understand that the effects are more subtle.

I’ll discuss Penguin specifically in more detail a bit further down in this guide.

As you saw earlier in this guide, Google considers links to be an important part of their ranking algorithm.

For that reason, many publishers are anxious to get as many links as they can, but unfortunately, there are certain types of links that can hurt you.

Basically, what Google really wants you to do is obtain links that are editorial in nature.

What that means is the links can’t be something that you paid for, provided compensation for, or that otherwise were given to you for any reason other than that the linking party genuinely wanted to reference your site.

This is because Google relies on these links to act as votes for your content, and each vote is an indicator that your site has some level of importance.

More votes signify more importance.

However, not all votes for the content of your site are created equal. Some are far more important than others.

The reason why Google doesn’t like certain types of links is that the nature of those links may indicate to Google that they are non-editorial in nature.

If too many of these links point to your site, they start to impact the quality of Google’s search results, and this is why Google takes action on them.

With that in mind, here are some of the most common links that can cause problems:

- Paid Links: Any form of payment is considered a problem by Google. If you’re buying ads and getting links to your site in return, the best policy is to implement a “nofollow” attribute on those links so Google won’t think you’re trying to spam their search results.

- Web Directories: These are sites that organize websites into hierarchical directories, and these are largely useless today. Even the decent ones that we would have spoken about 3 or 4 years ago (e.g., Best of the Web, Business.com, DMOZ.org) are likely to offer no SEO value today. (Note: this commentary does not apply to local business listing directories, which still offer value from a Local SEO perspective.)

- Article Directories: These are sites that allow you to submit your article content, and they usually include a link back to your site. However, these links are not editorial in nature. You can simply upload the article and no one reviews it, so for that reason, it doesn’t really act as a true endorsement for your site. Don’t use them.

- International Links (from countries where you don’t do business): There is really no reason for you to have many links from countries where you don’t operate, so if you have lots of these, that could be a problem.

- Coupon Codes: If you have been handing out coupon codes to other publishers and getting a link in return, that’s considered to be very similar to a paid link. If you have these types of links, you’ll have to deal with them.

- Poor Quality Widgets: If you created a neat widget that publishers can place on their sites, and in return you get a link, this might be a problem, especially if you’ve obtained a large number of links this way.

- Affiliate Spam: If you are paying a publisher for clicks to your site, or a revenue share or commission on sales generated by traffic they send to you, that’s considered a purchased link.

- Comment Spam: If you’ve been going to blogs and forums all over the web, and implementing links back to your site, Google isn’t going to like that, so avoid it altogether.

- Link Exchanges: There is nothing wrong with exchanging links with close business partners or major media sites. However, if a large percentage of your overall link portfolio comes from link exchanges, that will raise a red flag. So do this only in moderation.

- Other Non-Editorial Links: The list above isn’t a complete list of problem links. To make the final diagnosis, you have to try and evaluate whether it makes sense for Google to consider a link editorial.

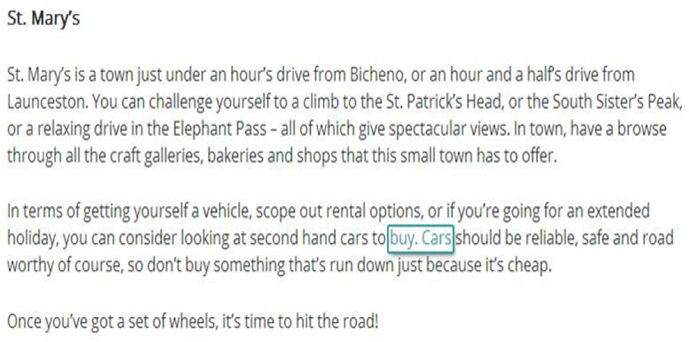

- Bad Anchor Text Mix: The words used in the anchor text of links help Google better understand what your pages are about. In the past, SEO practitioners abused this by going out and obtaining many links using “exact match”, or “rich” anchor text (i.e., the anchor text of the link was close to or exactly the main keyword associated with your page). Too much of this rich anchor text is a clue to Google that you are being over-aggressive in your SEO. Here’s an example of bad anchor text:

This is obviously contrived.

The writer couldn’t even take the time to write the article in such a way as to put the words “buy” and “cars” in the same sentence.

Note that it isn’t bad if some links to your site use rich anchor text, but if you’re getting a large percentage of links that do so, that’s not normal, and Google will see that as a problem.
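To get a feel for your own anchor text mix, you can measure what share of your inbound links use exact-match anchor text. The Python sketch below is only an illustration. The function name, the sample anchors, and the keyword are hypothetical, and Google does not publish a percentage at which rich anchor text becomes a problem.

```python
from collections import Counter


def anchor_text_share(anchors, keywords):
    """Return the fraction of anchors that exactly match one of the target keywords."""
    normalized = [a.strip().lower() for a in anchors]
    targets = {k.strip().lower() for k in keywords}
    if not normalized:
        return 0.0
    rich = sum(1 for a in normalized if a in targets)
    return rich / len(normalized)


# Hypothetical anchor texts pulled from a backlink export.
anchors = ["Buy Cars", "example.com", "buy cars", "click here", "Example Motors", "buy cars"]
share = anchor_text_share(anchors, ["buy cars"])
print(f"{share:.0%} of links use exact-match anchor text")

# The most common anchors overall, to see what dominates the profile.
print(Counter(a.strip().lower() for a in anchors).most_common(3))
```

A natural profile tends to be dominated by brand names, bare URLs, and generic phrases, so a keyword at the top of that last list is worth a closer look.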

Cleaning Up Link-Related Problems

If you’ve received a manual link penalty, or are worried that your site is in danger of having that happen, you should work on cleaning up your link profile.

Here’s the basic nine-step process for doing this:

- Build a complete list of links to your site. Google Search Console provides a list of links, but unfortunately, that list isn’t complete. For that reason, we recommend that you also obtain data on links to your site from Moz’s Link Explorer, Majestic, and Ahrefs. The reason we use all three of these sources, plus Search Console, is that each will find links that none of the others do.

- Deduplicate the list as much as possible, as each tool will show many of the same links that the other tools do.

- Begin analyzing all of the links. Generally speaking, you don’t need to look at more than two or three links per domain linking to you.

- Mark links that you see as problematic as you’ll need to address them.

- Repeat three and four until you’ve been through links from each of the domains linking to you.

- Reach out to sites that you want to remove links from, and request their removal.

- Repeat the outreach to those that don’t respond to increase your chances of success. Don’t make this request more than three times, and spread it out a bit so you aren’t a complete pest.

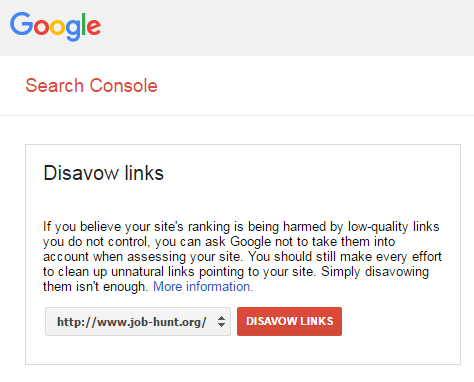

- For those links that you can’t get removed, use Google’s disavow tool to tell Google you want to discount those links.

- Once steps 7 and 8 are complete, the hard part of the labor is done.
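The merging, deduplicating, and sampling at the start of this process can be roughed out in a few lines of Python. This is a minimal sketch, assuming each tool’s export has already been reduced to a list of full linking-page URLs (including the scheme). The function names and sample URLs are hypothetical and are not part of any tool’s API.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def merge_links(*sources):
    """Combine link lists from several tools and drop duplicates, keeping first-seen order."""
    seen = set()
    merged = []
    for source in sources:
        for url in source:
            parts = urlsplit(url.strip())
            # Treat URLs as duplicates if host, path (minus trailing slash), and query match.
            # The scheme is ignored, so http and https versions count as one link.
            key = (parts.netloc.lower(), parts.path.rstrip("/"), parts.query)
            if parts.netloc and key not in seen:
                seen.add(key)
                merged.append(url.strip())
    return merged


def sample_per_domain(urls, per_domain=3):
    """Group links by linking domain and keep at most per_domain of each for manual review."""
    by_domain = defaultdict(list)
    for url in urls:
        domain = urlsplit(url).netloc.lower()
        if len(by_domain[domain]) < per_domain:
            by_domain[domain].append(url)
    return dict(by_domain)


# Hypothetical exports from two different link tools.
search_console_links = ["https://blog.example.com/post-1", "https://spam.example.net/a"]
other_tool_links = [
    "https://Blog.example.com/post-1/",
    "https://spam.example.net/b",
    "https://spam.example.net/c",
    "https://spam.example.net/d",
]

links = merge_links(search_console_links, other_tool_links)
to_review = sample_per_domain(links, per_domain=3)
for domain, sample in to_review.items():
    print(domain, len(sample))
```

The judgment calls (which links are actually problematic) still have to be made by a person. The code only shrinks the pile you need to look at.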

In step 8, I reference Google’s disavow tool.

This tool allows you to list all the links pointing to your site that you think might be bad, and to tell Google to not credit them to your site.

Basically, it acts as a shortcut to getting potentially bad links removed.
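The disavow tool takes a plain text file with one entry per line: either a full URL, or an entire domain written as `domain:example.com`. Lines that start with `#` are treated as comments. Here is a minimal Python sketch of building such a file. The function name, the sample entries, and the output file name are hypothetical.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Return the text of a disavow file: an optional comment, then domains, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Disavow whole domains with the "domain:" prefix.
    lines.extend(f"domain:{d}" for d in sorted(set(domains)))
    # Disavow individual pages by listing their full URLs.
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


# Hypothetical entries left over after outreach failed.
disavow_text = build_disavow_file(
    domains=["spam.example.net"],
    urls=["https://blog.example.org/paid-post"],
    note="Site owners contacted three times, no response",
)
print(disavow_text)

# To save it for upload (file name is an assumption):
# with open("disavow.txt", "w", encoding="utf-8") as f:
#     f.write(disavow_text)
```

Using the comment line to record your outreach attempts keeps a simple record of the effort you made alongside the list itself.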

This might lead you to ask, why should I take the time to manually request link removals then? Can’t I just list all the bad links in the disavow tool?

The reason you should still request removals is that Google likes to see the extra effort.

From their perspective, when they’ve penalized you it’s because you were over the line in what you were doing, and they want to see clear evidence that you won’t do it again.

When they see that you make the manual removal effort, it acts as a signal that you are sincere in your intent to not violate their guidelines again. This is particularly important in the case of manual penalties.

As the last step in a recovery process, if you cleaned up your links because you received a manual link penalty, you will need to fill out a reconsideration request. You can read more about that below.

One last point: Recovering from link problems is aided by attracting high value links as well.

This is something you should be trying to do on an ongoing basis.

How to do that is beyond the scope of this section of the guide, but learning how to do this is a cornerstone to the success of any SEO strategy.

Penguin Recovery Process

Penguin used to generally lower rankings for sites that it determined were using poor link building practices.

But, as of the Penguin 4.0 release on September 23, 2016, Penguin simply discounts links that it doesn’t like.

In other words, there is no direct punitive aspect to it.

However, don’t trivialize that impact.

If you’re aggressively investing money in adding links, and those links don’t count, that investment is wasted – probably not something you want to do.

The other thing that happened with the Penguin 4.0 release is that it got rolled into the main part of the Google algorithm, so Penguin updates are no longer announced.

As a result, there is also one scenario in which a Penguin action may seem like it’s a penalty.

If you’re busily obtaining links that the Penguin algorithm currently does not discount, they’ll be helping you improve rankings and organic traffic.

Perhaps you have found a way to obtain links that are actually non-editorial in nature, but they currently work.

But if, at a later date, a Penguin update comes out that starts to discount those links, you will suddenly lose all the benefit you were getting from those non-editorial links.

This will feel exactly the same as a penalty, though in fact, it’s not that at all. This is one of the reasons I suggest that you focus only on earning editorial links in any link building strategy that you implement.

Unlike a manual penalty from Google, if Penguin has begun to discount your links, there is no value in filing a reconsideration request.

You just need to work on getting high quality, 100 percent editorial links, and this is always the best strategy related to earning links.

Doing this is hard, I know, but that’s why it works.

Content Google Doesn’t Like

Just as there are types of links Google doesn’t like, there are also types of content that Google doesn’t like.

Some of the most important types of these are:

- Thin Content: If you have a large number of pages with only a sentence or two on them, and they make up a large percentage of your overall site, that can be a problem.

- Curated Content: Sites that simply curate content from third parties and add little unique value are also problematic.

- Syndicated Content: It’s OK if you have some syndicated content on your site. But if a large percentage of the pages are syndicated from third-party sites, and you add little of your own value, then that will be seen as a problem.

- Scraped Content: If you’re scraping content from other sites, that will definitely be an issue. This is one of the more egregious forms of poor quality content.

- Doorway Pages: These are pages that have been created largely for the purpose of capturing search engine traffic and immediately driving sales. These are often pages that are poorly integrated into the site (i.e., have few, or no, links to them), and focus on driving an immediate conversion.

- User-Generated Content that is not properly moderated: This almost always leads to large amounts of low value content. If you allow users to add comments or reviews to your site, this can be a great thing, but take great pains to use human review to screen out poor quality contributions.

- Advertising Dominated Content: This is content where the value added content is somewhat obscured or dominated by the presence of ads. In other words, these pages may have multiple ads above the fold, and the user needs to scroll before they see much of the value added content they were looking for.

- Ecommerce Sites with the Great Majority of Pages Being Product Pages with Nothing but Manufacturer’s Supplied Descriptions: This is common with many lesser brand ecommerce sites. Be careful, though. Simply rewriting those product descriptions, but saying more or less the same thing, isn’t enough.
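Of the items above, thin content is the easiest to screen for mechanically. The Python sketch below flags pages with very little text and reports what share of the site they make up. The function name, the sample pages, and the 50-word cutoff are assumptions for illustration. Google does not publish a word count threshold, and a short page is not automatically a poor one.

```python
def thin_page_share(pages, min_words=50):
    """Return (list of thin URLs, fraction of pages that are thin).

    pages: dict mapping a URL to the visible body text of that page.
    min_words: assumed cutoff below which a page is flagged for review.
    """
    thin = [url for url, text in pages.items() if len(text.split()) < min_words]
    share = len(thin) / len(pages) if pages else 0.0
    return thin, share


# Hypothetical pages: one with a sentence or two, one with a full article.
pages = {
    "/widgets/red": "Red widget. In stock.",
    "/guides/choosing-a-widget": " ".join(["word"] * 600),
}
thin, share = thin_page_share(pages)
print(f"{len(thin)} thin pages, {share:.0%} of the site")
```

Treat the output as a list of pages to review by hand, not as a verdict on quality.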

Google wants to see the unique value add of your site. They aren’t going to let you receive search engine traffic if you simply aren’t adding much unique value to users who visit your site.

To be clear: the mere existence of your site, or having a nice navigation hierarchy, aren’t examples of unique value.

Better examples of quality content are:

- Unique articles that you’ve created that help users solve problems of interest to them.

- Reviews of products placed on your site by users. Important note: reviews that you repost from other sites don’t count as unique content.

- How-to videos that walk users step by step through something they want to learn about.

- Interactive content that engages users and attracts lots of attention.

- Data-driven studies that reveal key information that others haven’t seen or created before.

- Access to expert advice and/or interaction with experts.

These are just a few examples.

The bottom line is that Google wants to see what it is that makes you special.

It’s OK if you have the best plumbing site that services Rhode Island, or the best marriage counseling site in Pasadena.

Just produce unique content related to what you do, and that is specific to your local market.

If you serve broader markets, such as all of Europe, then the challenge content-wise is greater, and you have to be prepared to step up to meet it.

Continually Improving Content Quality

It’s easy to say that you need to improve your content quality if you’ve been hit by a thin content penalty or Panda.

However, it would be better to say that you need to improve your content quality whether or not you’ve been impacted by either of these.

You should be thinking about this all the time.

If you publish a website, then continual improvement of the quality of the content on it needs to be a core mission of your website team.

It’s a competitive world out there and Google loves quality content.

There’s no win in letting your competition get an edge on you.

Invest the time and energy to make your site the best it can be, from the perspective of adding value to users who come to it.

Keep the focus tight to the marketplace you serve.

If you’ve been hit by a manual thin content penalty, and you believe you fixed the problem, then the next step is clear: file a reconsideration request.

Panda Recovery Process

Google’s Panda algorithm is also a part of the main Google algorithm.

However, unlike Penguin, it can take actions that lower overall site rankings if it detects content quality problems on your site.

Sadly, there is no simple way to tell if a traffic drop is due to Panda.

If you suspect that it is, you’ll need to closely examine the content across your site to see if you detect quality problems.

Also, a reconsideration request won’t help you here. All you can do is fix the content quality issues and then wait and see.

It may take Google a few months to re-crawl your site, see the improvements, and then rerun the Panda portion of their algorithm on it (even though it’s now considered part of the main algorithm, it still works this way).

Frankly, the best way to deal with content quality has little to do with Panda or any manual penalty.

You should be obsessed with it.

You need to have a continual focus on improving content quality on your website, and have ongoing programs to keep making your site more and more valuable to users.

It’s a competitive world out there. It’s likely that one or more of your competitors is beginning to think this way. This is exactly the behavior that Google wants.

Here is a way to frame it: “Be the Answer That Users Want, and You Become the Answer That Google Wants.”

If you use this mental approach, your chances of staying clear of worries about Panda, or any manual content penalties, will go way up.

Reconsideration Requests

If you’ve been hit by a manual penalty, and you believe you’ve fixed the problem, you must file a reconsideration request.

Google won’t notice on its own that you’ve fixed it; you must notify them before they will take another look.

There are some key elements to a reconsideration request.

Here is a summary of the most important ones:

- Fix your problems: Don’t file a reconsideration request until you have made a thorough effort to clean up the issues reported by Google. Just don’t. If the reviewer at Google sees that you haven’t taken their concerns seriously, they will reject your request, and the bar to getting a future reconsideration request approved could potentially get higher. They’re human, and if you don’t take their complaint seriously, you may simply upset them.

- Keep your reconsideration request short and to the point. Explain that you saw the penalty, that you made a good faith effort to fix the problem, explain what you did, and tell them that you will endeavor to meet their guidelines going forward.

- Don’t complain about the fact that you were penalized. Don’t complain about the impact on your business. From their point of view, they gave you a penalty because you were doing things to negatively impact their business, and they deal with thousands of these requests every day, so you’re more likely to aggravate them than get their sympathy.

- Remember they are human. You can’t use a reconsideration request to become their friend, but you can write it in such a way that you are being considerate of their time, and not make yourself a burden on them.

Those are the basics, but it bears repeating:

Don’t send a reconsideration request until you’ve made a serious effort to address their concerns.

Otherwise, you’re wasting the reviewer’s time and your own, and delaying the eventual recovery of your website.

Other Types of Penalties

There are many other penalties that are beyond the scope of this guide to cover.

For the most part, these arise from more advanced forms of trying to deceive Google, so hopefully you will never encounter these.

Here is a brief list of some of those other types:

- Cloaking and/or Sneaky Redirects: This happens when you serve different content to Google than you do to users. Google considers this to be a major no-no.

- Hidden Text and/or Keyword Stuffing: SEO practitioners used to find ways to put text on webpages that users couldn’t see (such as white text on a white background) to feed content only to Google. Or, they would repeat the main keyword over and over again on webpages. Don’t do these things!

- User-Generated Spam: You might get this message if you are accepting user generated content (UGC) on your site, and you aren’t carefully moderating it. UGC is a great way to add unique content to your site, but you should only do it if you are actively screening out bad submissions.

- Unnatural Links from Your Site: If you appear to be selling links to other publishers with a goal of providing them SEO benefit in return for money, Google may spot that you’re doing this and give you this message.

- Hacked Site: This is Google trying to flag you that you have a problem because a third party has hacked the code for your website. The best way to keep this problem from happening is to be ruthless about keeping all the software involved in publishing your site up to date.

- Pure Spam: Google will give you this message in the Search Console if they believe your site is using aggressive spam techniques.

- Spammy Freehosts: This is related to where you are hosting your site. Make sure you are working with a reputable hosting company!

Summary

Recovering from a penalty (or an algorithm, like Panda or Penguin) should only be viewed as the first step.

Treat it like a warning shot across the bow.

Just because you’re able to recover doesn’t mean that you can’t get hit again.

In the future, you should avoid the behavior that led to the problem.

But look beyond that.

All the work that you did to recover should be a clue as to what you need to do to thrive in Google.

If you had to deal with thin content, then take that as a signal to keep focusing ongoing energy on improving your content.

Or, if you have a link-related problem, keep investing energy in doing the types of things that attract high quality editorial links to your site.

Then you can move past survival, and into a world where your traffic keeps growing over time.

Image Credits

Featured Image: Paulo Bobita

All screenshots taken by author