With recent events such as Google's unnatural link warnings, over-optimization filters, and the deindexing of blog networks, concern that links can harm a website has risen again.

The biggest concern is 'negative SEO': sabotaging a competitor's rankings so you can move past them on the search engine results pages.

Many webmasters want to know what negative SEO is and how they can protect themselves against it. This post explains what to do about it.

Negative SEO: It’s Very Real

…under certain conditions.

First, let's imagine that the search engines tolerate a certain threshold of optimization for a site before they penalize it for being over-optimized.

Let’s call this the ‘over optimization cup’.

To visualize this, imagine that every site starts with an empty cup. Once the cup overflows, the site is at risk of being penalized.

A larger, more authoritative site such as Amazon has a much larger cup, which means it can tolerate more 'dirt' in its site buildup. 'Dirt' can be defined as spammy links, blatant keyword stuffing, duplicate content, or anything that isn't squeaky-clean white hat SEO.

In contrast, a smaller, newer site has many forces working against it. For example, it might not have much content or many links pointing to it. So right off the bat, we can see a few reasons why the search engines wouldn't rank this site in the same category as Amazon:

- Thin content – e.g. duplicate content

- Manipulative on-site SEO – e.g. excessive footer links or keyword stuffing

- Spammy link profile – e.g. blog network links, mass directory, forum, or social bookmarks

In comparison, Amazon has an overwhelming number of quality links, loads of user-generated content, excellent site design, and fast page load times. It's easy for Google to crown Amazon as an authority because of all the positive signals the site sends.

It’s also easy for Google to identify the small sites as cockroaches in the niche. And when the cockroaches mess around too much, they get stomped on.

Right now, some of you might be jumping up and down because you think you can take your competitor's site out of commission by pushing it over the top with some negative SEO. In some cases, it might work (more on that later).

But if you’re going after a giant like Amazon, you’re not going to succeed.

Here’s why.

Larger Sites Have A Higher Threshold for Spam

Naturally, larger sites have a stronger overall presence (such as the number of quality inbound links, in-depth content, and domain age). This means their over-optimization cup is much larger, because the search engines trust these sites more.

To put things in perspective, think of a small site as a sailboat and a large site as a battleship.

Which one can take more abuse?

The battleship.

How To Protect Yourself

There's no groundbreaking cure for defending your site against negative SEO. All you need to do is follow the standard SEO guidelines of having:

- A website with useful content that helps people.

- Solid site architecture.

- Great website design for usability and trust purposes. Nowadays, design is marketing.

- A relatively clean link profile that shows the search engines you aren't doing anything overly manipulative.

None of this is very new, but with the uproar about over-optimization, people are scrambling to figure out how to stop negative SEO. If you have any doubts about your website, be sure to refer to Google's own guide on how to build a high-quality website.

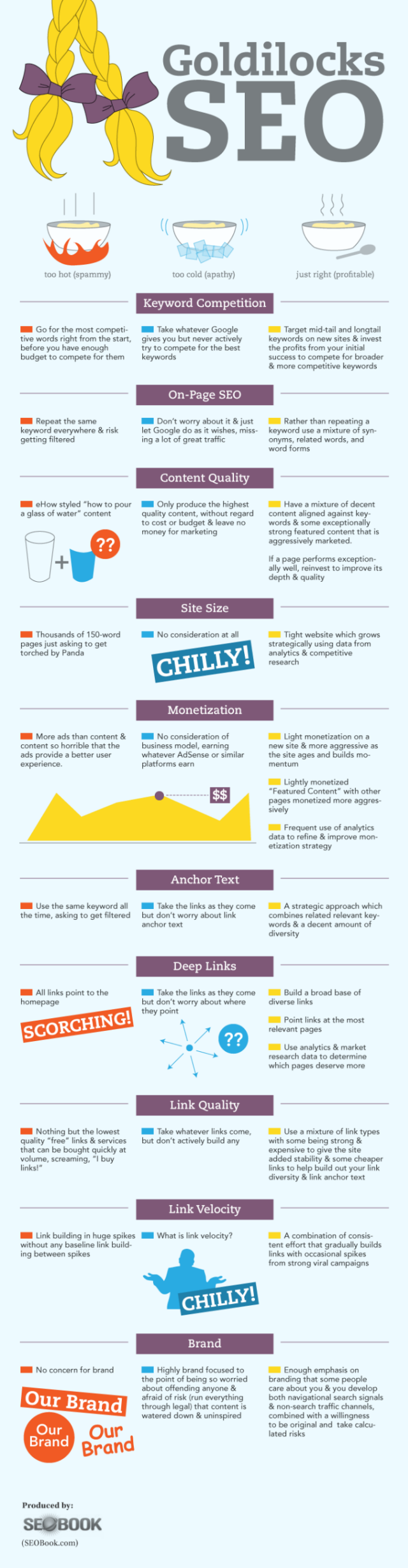

Furthermore, if you need a better idea of how to do SEO in moderation, this infographic is a great resource:

Don’t Waste Your Time With It

By now, you might be thinking that negative SEO is something you should add to your arsenal of tricks so you can ultimately make more money and laugh as your competitors go down.

Don’t do that.

Not only is it unethical, it’s a misuse of time.

Executing a negative SEO campaign requires:

- Time

- Money

- Tools

- A lack of morals

But why is it a misuse of time? Because there’s no guarantee that you will succeed. So instead of allocating valuable resources based on the possibility of taking down a competitor, it would be more efficient to pour those resources into your own site.

Negative SEO: Myths, Realities, and Precautions

SEOmoz did a Whiteboard Friday on different negative SEO techniques:

Rand Fishkin did an excellent job defining some characteristics of high risk and low risk websites:

High risk:

- You have engaged in spammy link building

- You have done manipulative on-site SEO

- Your site has few brand signals

Low risk:

- Clean backlink profile – no manipulative linking (at least none that is intentional). Note: Everyone is going to have some bad backlinks given the number of scrapers, crawlers, and other bots out there.

- Clean, high quality design/interface

- A site that doesn't feel SEO'ed – Whenever you have that sixth sense that a site feels manipulative, it probably is. Examples of sites that don't feel SEO'ed: Zappos, Amazon, TechCrunch, and SEOmoz

- Strong brand signals – e.g. brand name searches

- Lots of people talking about your brand – e.g. social media, press

- Lots of user and usage metrics

If you’re ever wondering if a certain website is ‘high risk’ or ‘low risk’, simply refer to the characteristics above to help you classify a site.

Conclusion

With all the ruckus about over-optimization right now, it's important to arm yourself with knowledge so you can react properly to the situation. In this case, all you need to do is continue following SEO best practices and delivering great value to your customers. To give yourself more of an edge, try being remarkable. It'll take you much further than a negative SEO campaign.

What are your thoughts on negative SEO?

Image Credit: Fotolia and Jai Mansson