Google recently announced the launch of Content Experiments, a split-testing feature within Google Analytics. This tool is replacing Google Website Optimizer, which will be sunset on August 1. What does this mean for marketers? How do the tools compare?

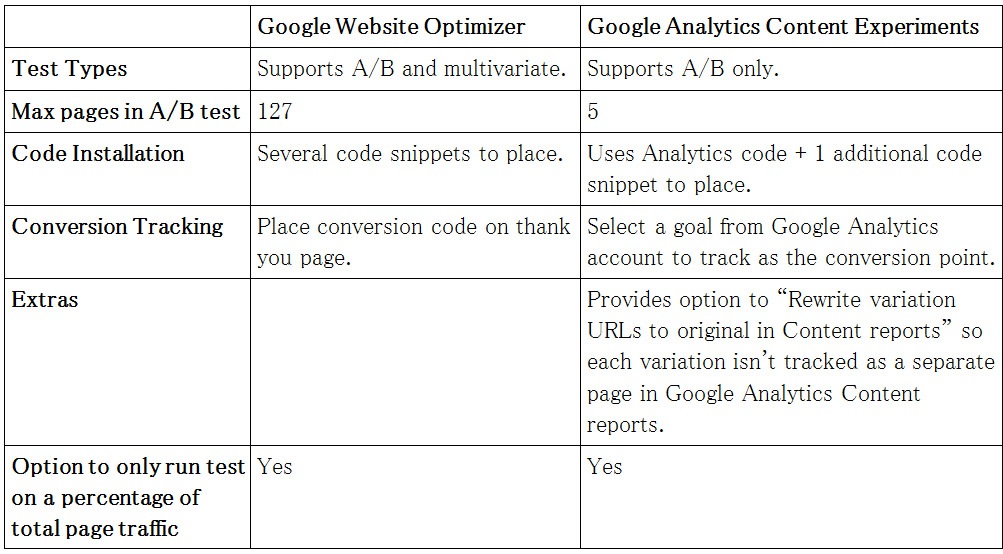

Google Analytics Content Experiments vs. Google Website Optimizer:

The two tools use somewhat similar interfaces and share some features, but there are several significant differences between Content Experiments and Website Optimizer. Here’s a handy table for your reference:

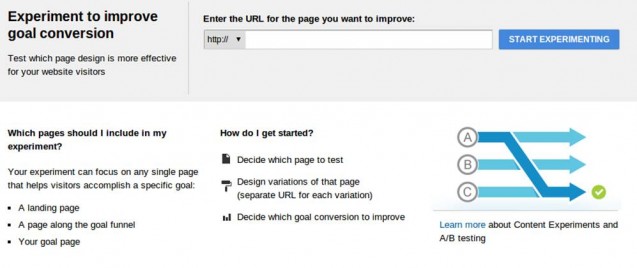

This screenshot shows the welcome page before you set up a Content Experiment:

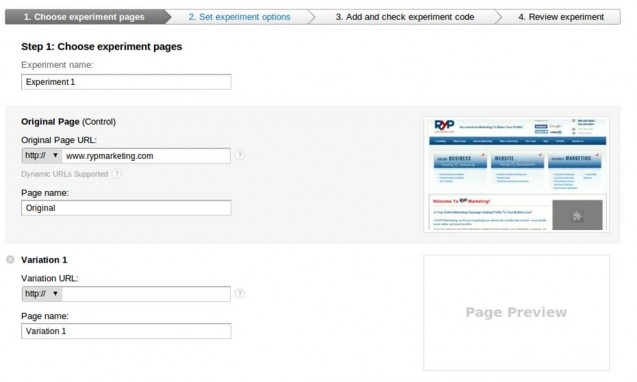

Step 1:

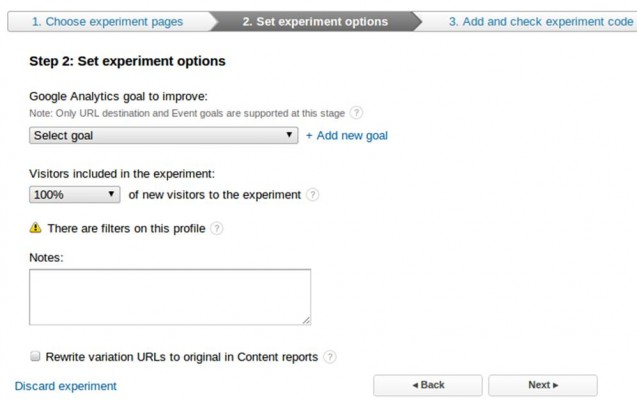

Step 2:

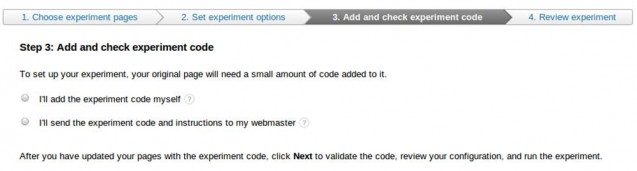

Step 3:

Notes About Conversion Tracking

In Content Experiments, you can choose one of the goals in your Google Analytics account as the conversion point for the split test. Currently, only URL destination and event goals are supported (visit duration and pages-per-visit goals cannot be used), but this should accommodate the vast majority of tests. Event goal support should make it easier to run split tests where the conversion is an action such as clicking a button, clicking an external link, or playing a video.

What About Multivariate Tests?

Content Experiments doesn’t support multivariate tests at this time. That’s a shame, since multivariate testing can be a very powerful tool when used correctly. Google Analytics tweeted links to two third-party blog posts reviewing Content Experiments, and both also lamented the lack of multivariate testing:

- Adrian Vender at Cardinal Path said “As of right now, Google Analytics Content Experiments only supports A/B testing and not multivariate testing…This is unfortunate since I’m personally a big fan of MV testing…Fingers crossed!”

- Daniel Waisberg at Online Behavior included Multivariate Testing capability on his “wishlist for future versions”.

What About Filters?

If your Google Analytics profile has filters on it, then all visitors will see the test, but the test results you see will be filtered. In Google’s own words: “There is at least one filter applied to the profile you are using for this experiment. The full percentage of site visitors you include see experiment pages, but the results of the experiment are restricted to the profile filter. For example, if the profile is restricted to visitors from the United States, then visitors from all regions see the experiment, but results are based only on visits from the United States.”
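To make the filter behavior concrete, here is a small Python sketch. The visit data is entirely made up, and `conversion_counts` is a hypothetical helper of my own, not anything Google Analytics exposes. It only illustrates the idea that everyone is shown a test page while a filtered profile reports on a subset.

```python
from collections import defaultdict

# Made-up visits for illustration: (country, page shown, converted?)
visits = [
    ("US", "original", True),
    ("US", "variation", False),
    ("CA", "original", False),
    ("CA", "variation", True),
    ("US", "variation", True),
    ("UK", "original", True),
]


def conversion_counts(visits, country_filter=None):
    """Return {page: (conversions, visits)} for visits that pass the filter."""
    totals = defaultdict(lambda: [0, 0])
    for country, page, converted in visits:
        # Every visitor was shown a test page; the filter only affects reporting.
        if country_filter is not None and country != country_filter:
            continue
        totals[page][0] += int(converted)
        totals[page][1] += 1
    return {page: tuple(counts) for page, counts in totals.items()}


# All six visitors saw the experiment, but a US-only profile reports on three.
us_only = conversion_counts(visits, country_filter="US")
unfiltered = conversion_counts(visits)
```

With this toy data the unfiltered counts are identical for both pages, while the US-only view favors the original, so a filtered profile can point to a different winner than the full traffic would.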

What About SEO?

Switching from Website Optimizer to Content Experiments shouldn’t make any difference from an SEO perspective. Google has included a handy section on SEO concerns in the Content Experiments help files. In short, “Google does not view the ethical use of testing tools such as Content Experiments to constitute cloaking”. They also recommend (as do I) that you:

- Use rel="canonical" to tell Google which of the test pages is the main page that should be indexed.

- Once the test is complete, use 301 redirects to redirect users and Google to the main/final page. (There could be links or bookmarks pointing to one of the variations.)
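Here is a minimal Python sketch of those two recommendations. The paths (`/landing`, `/landing-b`, `/landing-c`), the `www.example.com` domain, and both helper functions are hypothetical. How you actually emit the tag or the redirect depends on your server or CMS.

```python
from html import escape
from typing import Dict, Tuple

# Hypothetical test setup: the main page and the variations being retired.
SITE = "https://www.example.com"  # placeholder domain
MAIN_PAGE = "/landing"
VARIATION_PAGES = {"/landing-b", "/landing-c"}


def canonical_link_tag(main_path: str = MAIN_PAGE) -> str:
    """Build the <link> tag to place in the <head> of every test page."""
    href = escape(SITE + main_path, quote=True)
    return f'<link rel="canonical" href="{href}">'


def response_for(path: str) -> Tuple[int, Dict[str, str]]:
    """Once the test is over, permanently redirect old variation URLs."""
    if path in VARIATION_PAGES:
        return 301, {"Location": SITE + MAIN_PAGE}
    return 200, {}
```

While the test is running, every variation carries the same canonical tag pointing at the main page. Once it ends, `response_for("/landing-b")` returns a 301 with a `Location` header for the main page, so old links and bookmarks still land somewhere useful.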

So…Which Is Better?

What I like about Content Experiments:

- Simpler code integration.

- Event goal support makes it easier to test when the conversion is an external link or other action tracked via onclick.

- Integration with Google Analytics.

What I don’t like about Content Experiments:

- Doesn’t support multivariate tests.

Based on what I’ve seen so far, I think that Google Website Optimizer is a more robust testing tool, but Google Analytics Content Experiments will probably be easier to use for the typical webmaster. Some webmasters who use Analytics but haven’t used Website Optimizer may notice the feature and be more likely to run tests.

For marketers who want more robust features (such as multiple conversion points or multivariate tests), I recommend Visual Website Optimizer. For basic tests, Google Analytics Content Experiments should be an excellent option (and it’s free!).