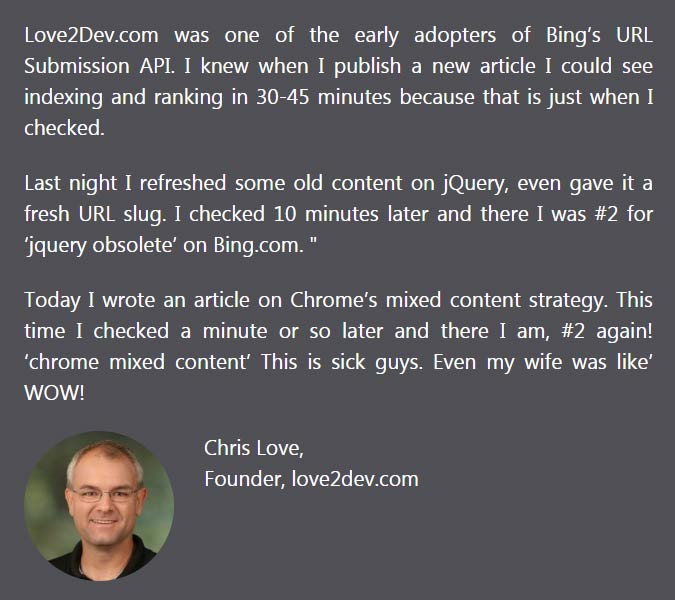

The Bing Instant Indexing API can reportedly get your pages ranking within minutes. I interviewed Chris Love (@ChrisLove), one of the first people to try the Bing API, and he shares his experience using it to get fast rankings on Bing.

Chris Love is a progressive web app and SEO expert. His testimonial was featured in Bing’s Instant Indexing API announcement.

How to Use the Bing Instant Indexing API

How does one go about using this API on a WordPress blog? Is there a plugin or script that can help?

I am not a WordPress developer, but I know the Microsoft Bing team has worked with Botify to integrate URL submission into Botify’s software.

The API is platform agnostic, which means you can call it from any language or development stack, including the command line.

Good APIs give you the freedom to create the UI you want.
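Because the API is a plain HTTPS endpoint, a call can be sketched in a few lines of Python using only the standard library. This is a minimal sketch, not Chris’s setup: it assumes the `SubmitUrl` method and the `siteUrl`/`url` JSON fields described in Bing Webmaster’s URL Submission API documentation, and `YOUR_API_KEY` and `example.com` are placeholders. Check the current docs before relying on the endpoint.

```python
import json
import urllib.parse
import urllib.request

# Assumption: base URL of Bing Webmaster's JSON API as described in its
# URL Submission API docs; confirm against the current documentation.
BING_API_BASE = "https://ssl.bing.com/webmaster/api.svc/json"


def build_submit_url_request(api_key: str, site_url: str, page_url: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that submits a single URL."""
    query = urllib.parse.urlencode({"apikey": api_key})
    body = json.dumps({"siteUrl": site_url, "url": page_url}).encode("utf-8")
    return urllib.request.Request(
        f"{BING_API_BASE}/SubmitUrl?{query}",
        data=body,
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def send(request: urllib.request.Request) -> int:
    """Send the request over the network and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.status


if __name__ == "__main__":
    req = build_submit_url_request(
        "YOUR_API_KEY", "https://example.com", "https://example.com/new-post/"
    )
    print(req.get_method(), req.full_url)
    # print(send(req))  # uncomment once a real API key is configured
```

Building the request separately from sending it makes the payload easy to inspect or test without touching the network.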

Who is the Bing API best for, in your opinion? Is it best for publishers and eCommerce stores whose content/inventory is in constant flux?

If you want your content indexed, this should be part of your publishing process. So it is for anyone who cares about reaching new and existing customers.

As for fluid content, a submission API like this is essential for success. While the submission rate is gated for the first few days or weeks, being able to update 10,000 URLs a day can be quite handy for larger sites.

Because search engines are publishing more and more metadata from the target URL in the search results, this gives publishers the opportunity to quickly update price and availability changes and, of course, those important typo corrections. That last one is big for me. 🙂
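The gated daily rate Chris mentions means a large site can have more changed URLs than it is allowed to submit in a day. One way to handle that on your side is a small budget that submits up to the quota and carries the rest over. The class below is hypothetical (`DailySubmissionBudget` is not part of any Bing SDK), and the quota is a parameter because the actual limit for a given site can start lower than 10,000 and grow over time.

```python
from collections import deque
from typing import Iterable, List


class DailySubmissionBudget:
    """Hypothetical client-side guard that submits at most `daily_quota` URLs per day.

    Bing enforces its own quota server-side; this only avoids rejected calls
    and keeps overflow URLs queued for the next day.
    """

    def __init__(self, daily_quota: int) -> None:
        if daily_quota < 0:
            raise ValueError("daily_quota must be non-negative")
        self.daily_quota = daily_quota
        self.used_today = 0
        self._backlog = deque()  # URLs waiting to be submitted, oldest first
        self._queued = set()     # same URLs, for fast duplicate checks

    def enqueue(self, urls: Iterable[str]) -> None:
        """Add changed URLs, ignoring ones already waiting in the backlog."""
        for url in urls:
            if url not in self._queued:
                self._queued.add(url)
                self._backlog.append(url)

    def take_for_today(self) -> List[str]:
        """Remove and return as many queued URLs as today's remaining quota allows."""
        remaining = self.daily_quota - self.used_today
        batch = []
        while self._backlog and len(batch) < remaining:
            url = self._backlog.popleft()
            self._queued.discard(url)
            batch.append(url)
        self.used_today += len(batch)
        return batch

    def reset_day(self) -> None:
        """Call once per day (for example at midnight UTC) to restore the quota."""
        self.used_today = 0
```

Each list returned by `take_for_today()` could then be sent as one batch submission.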

Chris then discusses how the Bing Instant Indexing API was useful for sites with constantly changing information:

As I learned more about why this API helps the search engine, I was fascinated by some of the content Bing needs to index to keep data current. So many pages change throughout the day via automated processes. A good example might be demand-based pricing, like an airline’s.

What about a site migration to a new URL? Do you think the API will be useful for speeding up a site migration?

According to the documentation and some of my own experience, the API does support the rapid indexing and consumption of 30x redirect changes.

This means a site migration can be updated with Bing within minutes, not weeks.

I know clients always want to see changes immediately, even when the process takes a long time because of caching and the casual discovery process of traditional spidering.

From Bing’s point of view, it no longer wastes valuable CPU cycles fetching deprecated URLs. It can automatically trigger any link authority updates and remove the old URL from the crawling canon.
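Assuming that submitting both the old addresses (which now return 30x redirects) and their new targets is what lets Bing pick up a migration quickly, a migration script mostly needs to collect those URLs and split them into batches. The sketch below assumes the redirect map is available as a plain Python dict; `migration_urls` and `chunk` are hypothetical helpers, and the batch size is a parameter because the per-call limit should come from Bing’s documentation, not from this example.

```python
from typing import Dict, List


def migration_urls(redirects: Dict[str, str]) -> List[str]:
    """Collect every URL touched by a migration: old (now redirecting) and new targets.

    Order is preserved and duplicates are removed, so a target shared by
    several old URLs is only submitted once.
    """
    seen = set()
    ordered = []
    for old, new in redirects.items():
        for url in (old, new):
            if url not in seen:
                seen.add(url)
                ordered.append(url)
    return ordered


def chunk(urls: List[str], size: int) -> List[List[str]]:
    """Split URLs into batches of at most `size` (the real per-call limit is an assumption)."""
    if size < 1:
        raise ValueError("size must be positive")
    return [urls[i:i + size] for i in range(0, len(urls), size)]
```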

Bing said that submitted pages aren’t automatically accepted and that they still need to meet its “selection criterion.” Is that a reference to spam and low-quality pages?

I would think so.

Being a curious developer, I have done some minor spidering projects over the years to see what I could find. There are many crazy things out there.

Search engines need to trim the fat, if you will, and concentrate on the good stuff.

I think I read that only about 5% of the available URLs are ‘indexed’ in the search canon. That’s because so much content out there is just weak or spammy.

I can’t say how Microsoft scores or categorizes what qualifies as low quality. So focus on creating good content your target market wants and needs, and you should be safe.

What’s your opinion of the bulk URL uploading capabilities?

As an engineer, I have a good idea of how the API probably works under the hood. Technically, when you upload a single URL, it triggers the same workflow as a batch submission.

The only difference is that a single URL triggers one workflow, while a batch triggers multiple parallel workflows.

As far as calling the API goes, it is the same endpoint. You just supply a slightly different payload.
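As we understand Bing’s URL Submission API documentation, the single and batch calls are exposed as `SubmitUrl` and `SubmitUrlbatch` under the same service base URL, with a `url` field in one JSON body and a `urlList` array in the other. The hypothetical `build_submission` helper below picks the right shape from the list length; treat the method and field names as assumptions to confirm against the current docs.

```python
import json
from typing import List, Tuple


def build_submission(site_url: str, urls: List[str]) -> Tuple[str, str]:
    """Return (method name, JSON body) for a single-URL or batch submission.

    Assumption: method and field names follow Bing's URL Submission API docs
    (SubmitUrl with "url", SubmitUrlbatch with "urlList"); confirm before use.
    """
    if not urls:
        raise ValueError("nothing to submit")
    if len(urls) == 1:
        return "SubmitUrl", json.dumps({"siteUrl": site_url, "url": urls[0]})
    return "SubmitUrlbatch", json.dumps({"siteUrl": site_url, "urlList": urls})
```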

This can improve efficiency if you have a larger site with the fluid content situation mentioned earlier. In that case, a cron job that submits updates every hour might mean your systems are less taxed, even for a blog-heavy site like mine.
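An hourly cron job mainly needs to find what changed since its last run. This sketch assumes your CMS can list `(url, last_modified)` pairs with timezone-aware timestamps; `changed_since` and `one_hour_ago` are hypothetical helpers, and the crontab line and script path in the comment are placeholders.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable, List, Tuple

# Placeholder crontab entry that would run a script built on this every hour:
#   0 * * * * /usr/bin/python3 /path/to/submit_changed.py


def one_hour_ago() -> datetime:
    """Default look-back window for an hourly run, in UTC."""
    return datetime.now(timezone.utc) - timedelta(hours=1)


def changed_since(pages: Iterable[Tuple[str, datetime]], last_run: datetime) -> List[str]:
    """Return URLs modified after `last_run`, most recently modified first, without duplicates."""
    latest: Dict[str, datetime] = {}
    for url, modified in pages:
        if modified > last_run and (url not in latest or modified > latest[url]):
            latest[url] = modified
    return sorted(latest, key=lambda url: latest[url], reverse=True)
```

Persisting the time of the last successful run, instead of always assuming exactly one hour, avoids missing pages if a run is skipped.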

My article publishing workflow can trigger updates on a couple dozen pages that might link to the new article. Those pages need to be submitted too, and because I know which slugs were updated, I can place a single call rather than a series of calls.
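Knowing which slugs changed is what turns a series of calls into one. The sketch below assumes your CMS can report which pages link to which (the `links_to` map is hypothetical, as is `urls_to_resubmit`) and that URLs follow a `/slug/` pattern with a trailing slash. It returns the new article plus every page linking to it as one list, ready for a single batch submission.

```python
from typing import Dict, List, Set


def urls_to_resubmit(new_slug: str, links_to: Dict[str, Set[str]], base_url: str) -> List[str]:
    """Collect the new article plus every page that links to it, as absolute URLs.

    `links_to` maps each page slug to the set of slugs it links to; the
    trailing-slash URL pattern is an assumption about the site's routing.
    """
    affected = {new_slug}
    affected.update(slug for slug, targets in links_to.items() if new_slug in targets)
    base = base_url.rstrip("/")
    # Sorted so the batch is deterministic and easy to log or diff.
    return [f"{base}/{slug}/" for slug in sorted(affected)]
```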

Any insights you want to share for those who want to use the Bing API?

The beauty of this API is that you can add it to your automated workflow as part of the publishing process. You configure your system once, and it pretty much runs without any more fuss. I forget the Bing Submission API is even part of my workflow.

At the same time, there is a nice endorphin hit when you publish a new article targeting a few long-tail keywords and see it in the search results a minute later.

That instant feedback lets you know your article has passed important quality checks not only for the search engine but for your target reader.
