Ask ten SEO professionals whether it’s better to have all your content on one site or split it up, and you’ll get eleven answers (yeah, I’m usually able to see both sides of things and offer both opinions accordingly!)… Yet under certain circumstances, it just makes a great deal of sense to go with multiple web sites. Done right, the payoff can be huge. It’s a great way to control some of the back-link numbers.

Yet to me, it’s not just about building links. With the right client (read: someone with deep pockets who trusts your recommendations), it’s an opportunity to get even more value. Much more…

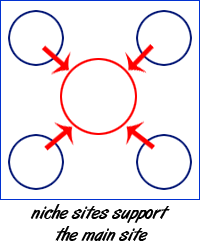

For those of you who are not familiar with the process, what I’m talking about here is where your client has one main web site, and X number of “micro-sites” or “satellite” sites. Or in some cases, a number of very large web sites that all drive traffic to the main site or to each other, depending on your needs and the scope of the client’s offerings.

Shameless Side Note: if you Sphinn this article, I’ll be your BFF!

Mesothelioma Clients Are Manna from Heaven

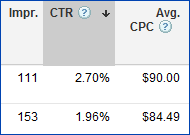

One of the first times I had an opportunity to create a multi-site strategy was back in November of 2006. I was brought on board to help take a legal web site and lift it up from the 15th page of Google. For those of you who have never heard of mesothelioma, let alone why it’s such a hot-button market for SEO, let me just say this:

CPC up to $98

And

1 Conversion can net $2,000,000 revenue for the site owner

Nuff said?

Gray Hat – Black Hat Competition

Given the stakes in this arena, my initial analysis found all sorts of methods and techniques being used by the big players, and they’d been doing it for several years. Lots of gray hat methods. And so much black hat going on it makes my head spin. (Not that I’m complaining – I don’t do drugs anymore, so I kinda like the head rush…)

A Real Challenge

My task, then, was a real challenge because competitors had been doing SEO for years, and they all had deep pockets. I wanted to find a way to overcome the longevity issue and surpass them without using black hat. So I had to formulate a sound method that would stand the test of time and ongoing heavy competition.

Results Even a Mother Could Be Proud Of

I’m happy to say that as a result of my multi-site strategy, in short order my clients were showing up on the first page of Google for a few of their most valued phrases. In fact, to this day, we still own the #1 and #2 positions for those exact phrases, even though the competition has not let up on their efforts all the while.

Since then, we’ve also gotten up there for several other important phrases. And a number of other clients in different industries and markets have come along where I’ve been able to apply and build on those same key concepts.

Square Pegs Don’t Fit In Round Holes

One size does not fit all in this process. Every client is going to be different, so the type of niche sites you suggest they build (and thus you get to optimize) will vary greatly. For companies that have a multi-regional, national or international reach, the obvious opportunity here is to have a site set up for each of several geo-locations.

Or if the company has X number of divisions or departments, perhaps you set up a site for each of those. Does your client have five major services they offer? Well heck, that’s six web sites (the main site and one per service), plus a blog of course, just waiting to be optimized and cross-linked!

The whole concept here is that if you do the footwork, and you can get buy-in from your client, then with enough time and leverage you can end up (pretty spectacularly in some cases) with several highly optimized, highly authoritative web sites, all passing quality link value. (No, I didn’t say link juice, because I don’t drink that Kool-aid, thank you very much…)

Proper Planning Prevents Poor Performance

Before you embark on such an endeavor, there are a number of key points that need to be thought through; else you end up in the black-hat arena… And surely, you’re of the same mind-set as I am, and wouldn’t ever consider violating the cardinal rule of client services business ethics, right?

So here then, are eight of the most important key points to building multiple niche sites and taking control of at least part of your back-links…

1. Key Player Buy-In

Just because you see the potential value (back-link or otherwise) in having eighteen highly optimized web sites out there promoting your client offerings doesn’t mean they do. Or that their VP of marketing does. Or that their development team is up to the task when it comes to execution.

So the first thing you need to do is map out your vision, gather supporting information, and present a plan to your client that they can buy in on. You’ll need to be well prepared to answer any questions that get thrown your way, especially if their head of Marketing is just knowledgeable enough about SEO to be dangerous.

You’ll also need to have a specification document prepared that the developer(s) can execute – especially if there’s any way to automate aspects of the seeding and make the content management as effortless as possible.

Items to consider for automation

This is vital when you are dealing with large sites – more than a couple of my clients have sites with tens of thousands of pages, and several have sites with hundreds of pages. Yet it’s just as useful if you have three or more sites that each have only a couple dozen pages. And the ability to grow the depth of content becomes infinitely more manageable.

- One unified log-in for the CMS of all sites

- Auto-Seeding image Alt-attributes

- Auto-Seeding unique Page Titles, Meta Keywords, Meta Descriptions, and Footers on Product Detail pages, News Article Detail pages and Event detail pages

- CMS based entry system for unique Titles, Meta Keywords, Meta Descriptions and Footers on all other pages

- Auto adding cross-site links

- Automating sitemap.xml file generation
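To make that last bullet a little more concrete, here is a minimal sketch of sitemap.xml generation in Python. It assumes your CMS can hand you a list of page URLs per site; the `build_sitemap` function and the example domains are hypothetical and only there for illustration. It writes nothing but `<loc>` entries, so treat it as a starting point rather than a finished generator.

```python
from xml.etree import ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(page_urls):
    """Return sitemap.xml content (as a string) for a list of absolute URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page_url in page_urls:
        url_el = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" when serializing.
        ET.SubElement(url_el, "loc").text = page_url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical page lists, e.g. exported from the shared CMS.
    site_pages = {
        "main-site.example": [
            "http://www.main-site.example/",
            "http://www.main-site.example/services/",
        ],
        "niche-site.example": [
            "http://www.niche-site.example/",
        ],
    }
    for site, pages in site_pages.items():
        print(f"--- sitemap.xml for {site} ---")
        print(build_sitemap(pages))
```

Because every site goes through the same function, adding a seventh or eighteenth site is just one more entry in the dictionary.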

2. Time & Cost Commitment

Before you go off half-cocked, understand that the whole purpose of this process is NOT to just slap up a bunch of worthless sites and fill them with links. It’s to end up with several truly optimized web sites that are each robust enough to become authoritative in their own right over time. That’s what makes it worthwhile.

Otherwise, you’ll be wasting everyone’s time, and a good amount of your client’s marketing budget.

Beyond the design, development and hosting fees, check to be sure your client is willing to pay for content time as well, whether it comes from you, someone on their staff, or a 3rd party agency. We’re talking long-term commitments. So think it through.

On the higher end when it comes to costs, one of my multi-site clients pays us $50,000 a year ongoing for organic SEO. And that fee does not cover the costs of technical coding or graphic design work that in this case comes from a 3rd party company. And they have a part time content writer who also contributes.

3. Logistics Organization

Given the need to orchestrate several web sites for the same client, you’ll need to have a plan when it comes to issues like monthly and quarterly report generation, on-site optimization, and individual site link-building through other methods.

Don’t forget PPC management – how will you handle that? Will it be one account with several campaigns, or separate accounts for each? How will you track conversions that come through one of those niche sites organically or by way of PPC dedicated to that niche site?

When it comes to tracking information and results, personally, I rely heavily on Excel spreadsheets. One spreadsheet with multiple tabs works well in this scenario. And we use Google Analytics with conversion tracking. Lots of Reporting…

4. Niche Relevance

Since we’re going for maximum authority and ranking on every site, each one needs to have true relevance for the niche focus you assign it. If you miss this mark, the visitor experience will be very poor, your PPC campaigns will have a lower quality score, and ultimately, your client will miss conversion opportunities. Heck, it could also harm their brand identity if you’re not careful.

5. Keyword Planning

Here’s where it can get really ugly, really fast. Since you’re now going to be responsible for several web sites, it means you have the potential for an exponential number of keyword phrase combinations as compared to one site. If you plan it properly, you’ll have several unique phrases on each site; however, there will most likely be some bleed-through.

This can ultimately cause problems – imagine having one of the smaller niche sites take over the top position in the SERPs, then having the client come to you one day and grill you about that! That may not be a problem in some situations, yet it’s definitely something to be aware of and think through as you do your work.

And yes, that DID happen to me at one point.
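One way to spot that kind of bleed-through before the client does is to list every phrase that is targeted by more than one site. The sketch below assumes you already keep a per-site keyword list (a spreadsheet export would do); the function name and the sample data are hypothetical.

```python
from collections import defaultdict


def find_shared_phrases(site_keywords):
    """Map each phrase targeted by more than one site to the sites using it."""
    phrase_sites = defaultdict(set)
    for site, phrases in site_keywords.items():
        for phrase in phrases:
            # Normalize case and surrounding whitespace so near-identical
            # entries are counted as the same phrase.
            phrase_sites[phrase.strip().lower()].add(site)
    return {
        phrase: sorted(sites)
        for phrase, sites in phrase_sites.items()
        if len(sites) > 1
    }


if __name__ == "__main__":
    # Hypothetical keyword plan for a main site and two niche sites.
    plan = {
        "main-site.example": ["asbestos lawyer", "mesothelioma attorney"],
        "niche-a.example": ["Mesothelioma Attorney", "asbestos exposure"],
        "niche-b.example": ["asbestos exposure symptoms"],
    }
    for phrase, sites in find_shared_phrases(plan).items():
        print(f"{phrase!r} is targeted by: {', '.join(sites)}")
```

Overlap is not always a mistake, so the output is a list to review, not a list of errors.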

6. Unique Content

This is obvious to most of us, yet can’t be over-emphasized in this multi-site scenario. Especially if you’re going to turn over the content writing to your client or some print-media hack who thinks they know everything or, worse, feels threatened that you’ll take over their work…

Seriously – as important as it is when we’re talking about duplicate content issues on one site (http://www.client.com vs. http://client.com vs. http://client.com/index.htm), just think about how insanely poor your SERP weights will be if you multiply that by six sites. Then factor in using the same “About” content on every site, and the same “Services” content.
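For the single-site half of that problem, the three URL variants above can be collapsed to one form with a small helper. This is a rough sketch for auditing link lists, not a server configuration. The `canonical_url` name, the choice of the www host as the preferred one, and the list of default document names are all assumptions made for the example.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumed default document names that should collapse to the directory URL.
DEFAULT_DOCS = {"index.htm", "index.html"}


def canonical_url(url, preferred_host="www.client.com"):
    """Collapse www/non-www and default-document variants to one URL."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if preferred_host.startswith("www."):
        bare_host = preferred_host[4:]
    else:
        bare_host = preferred_host
    # Treat the bare and www forms of the domain as the same site.
    if host in (bare_host, "www." + bare_host):
        host = preferred_host
    path = parts.path or "/"
    head, _, last = path.rpartition("/")
    if last.lower() in DEFAULT_DOCS:
        path = head + "/"
    # The fragment is dropped; the query string is kept unchanged.
    return urlunsplit((parts.scheme.lower(), host, path, parts.query, ""))


if __name__ == "__main__":
    for variant in (
        "http://www.client.com",
        "http://client.com",
        "http://client.com/index.htm",
    ):
        print(variant, "->", canonical_url(variant))
```

All three variants from the paragraph above come out as `http://www.client.com/`, which makes it easy to check that every cross-site link points at the same form.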

No, You Can’t!

No, you can’t use the same content and just change six words to make it “unique” for each site. Don’t you already know how a page has to be at least 60% unique in order to not be considered duplicate content? (Think majority of the readable content)
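If you want a quick sanity check on how different two pages really are, a rough word-overlap comparison will do. To be clear, this is only an illustration: it is not how any search engine measures duplication, and the three-word "shingle" size and function names are assumptions of mine. The 60% figure is simply the rule of thumb quoted above.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def unique_share(page_text, other_text, size=3):
    """Fraction of page_text's shingles that do not appear in other_text."""
    page = shingles(page_text, size)
    if not page:
        # Too short to compare; report it as not unique.
        return 0.0
    return len(page - shingles(other_text, size)) / len(page)


if __name__ == "__main__":
    # Hypothetical "About" copy reused on two sites with a few words changed.
    about_a = "We are a family owned firm serving Dallas clients since 1995."
    about_b = "We are a family owned firm serving Austin clients since 1995."
    share = unique_share(about_a, about_b)
    print(f"Unique share: {share:.0%}")
    print("Passes the 60% rule of thumb:", share >= 0.6)
```

Swapping a handful of words barely moves the number, which is the whole point of the "No, You Can’t!" warning.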

7. Link Building

Reader sarcasm moment: Well heck, finally – he’s talking about link building – six hours into an article on link building. Okay. Well then this better be REALLY good info, for all the waiting I’ve had to do reading about anything BUT link building so far…

And back to the article, thank you very much: Okay, so you’ve got 93 wonderfully optimized individual web sites, each with their own niche focus. And you get lots of points for having done such a good job that each site will average a 46% conversion from that site’s PPC campaigns. That’s all good and fine. Except this article is just as much about Link Building that you control, to take some control over the authority building for your client’s main site. Right?

Right! (duh) So – how many links do you place on each niche site that point to the main site? Are they all in the main navigation? Buried in the footer? In the content?

Well, it depends. (Yeah, I know, you hate reading that when you come to what is known as a highly authoritative SEO blog, don’t you? I mean, after all, you come here needing answers. Cold hard facts, based on proven tests and case studies. Because heaven forbid you, dear reader, should actually have to do any of that testing or experimenting yourself, right?)

Wrong. The fact is that I actually care about you. Really. Though my articles are often dripping with sarcasm, it’s loving sarcasm. Because I want you to succeed. Heck, I want you to flourish, even! And the more you put in your own footwork, the more you will learn, and the more skilled you will thus become. And that means you’ll be able to increase your hourly rates. And hire a team to do the mundane stuff. (See #8 below).

Of course, what this whole key point is all about, is that you’re going to need to apply all the standard rules of SEO when you decide where, how often, and in what manner to link back to the main site. And since each multi-site endeavor is unique, there is no one formula that applies to every situation.

Basic Linking Rules

Don’t over-link, and don’t bury your links in hidden text or behind other elements with a negative z-index in CSS – you know – all those SEO Best Practices rules.

When you’re putting those links into the content itself, make sure that the page you place the link on is really complementary in phrases and content to the page you’re pointing the link to.

Be sure to provide a link in the site footer where the link is the name of the company. That should be on every page of the niche site, not just the home page.

If it’s appropriate, (and you aren’t looking to funnel all contact activity on that site just through that site’s contact form) provide a link or three on each site’s contact page that points to the contact page or home page or one or more individual bio pages on the main site.

But be clear and descriptive about what that link is, and tell the site visitor that it’s a link to the main site.

Turbo Charging Link Count

Maybe you have news articles on the main site. Lots of them. Well, maybe on a niche site, you have the synopsis of the articles and a link to the full article back on the main site. (Ooooh I just gave you a big, juicy succulent rib-eye steak there, unless you’re a vegetarian, in which case I just gave you a gift certificate for two good for a year’s worth of groceries at your local farmers market, okay?).

That one is kind of like your own RSS feed system. Just be sure that you don’t distribute the link spread to every site in the same way (we want to avoid duplicate content on those niche sites). So either limit this method to just one niche site, or divide it up – having one category of links on one site, another category on another, and so forth…

That same method can work for Upcoming Events, Press Releases, Product details pages within a niche category…
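The "divide it up" option can be sketched as a simple round-robin: each category of articles (or events, or press releases) is handed to exactly one niche site, so no two niche sites carry the same synopses. The function name, category names, and domains below are hypothetical. In practice you would probably assign categories by relevance to each niche rather than alphabetically, so take this as the mechanical skeleton only.

```python
def assign_categories(categories, niche_sites):
    """Give each content category to exactly one niche site, round-robin."""
    if not niche_sites:
        raise ValueError("at least one niche site is required")
    assignment = {site: [] for site in niche_sites}
    # Sorting makes the result stable from one run to the next.
    for index, category in enumerate(sorted(categories)):
        site = niche_sites[index % len(niche_sites)]
        assignment[site].append(category)
    return assignment


if __name__ == "__main__":
    # Hypothetical news categories on the main site.
    categories = ["case results", "events", "legal news", "press releases"]
    sites = ["niche-a.example", "niche-b.example", "niche-c.example"]
    for site, cats in assign_categories(categories, sites).items():
        print(f"{site}: {', '.join(cats)}")
```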

8. SERP Tracking

Given how important it is to stay on top of the whole process at this level, and all the issues you’ll face accordingly, a mission critical responsibility you’ll have will be to run keyword position reports on a frequent basis. Checking where each site sits in the SERPs for every phrase across all your sites is one of the most time-consuming, yet most helpful, tasks you will need to do. How else will you know which sites need authority building love?
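Once the positions are recorded, picking out the sites that need that love is easy to automate. The sketch below works only on positions someone has already written down (it does not query any search engine), and it assumes each record is a `(site, phrase, position)` tuple with `None` meaning "not found". The function name, the first-page cutoff of 10, and the sample data are assumptions for illustration.

```python
def needs_attention(records, first_page=10):
    """Return records ranked off the first page, worst positions first."""

    def rank_key(record):
        position = record[2]
        # A phrase that was not found at all sorts as the worst case.
        return float("inf") if position is None else position

    flagged = [r for r in records if rank_key(r) > first_page]
    return sorted(flagged, key=rank_key, reverse=True)


if __name__ == "__main__":
    # Hypothetical positions recorded by hand for this month's report.
    recorded = [
        ("main-site.example", "mesothelioma attorney", 2),
        ("niche-a.example", "asbestos exposure", 14),
        ("niche-b.example", "asbestos exposure symptoms", None),
        ("niche-a.example", "mesothelioma lawyer", 10),
    ]
    for site, phrase, position in needs_attention(recorded):
        shown = "not found" if position is None else f"#{position}"
        print(f"{site}: {phrase!r} at {shown}")
```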

Yeah Yeah Yeah…

You can use one of the many automated rank checking tools out there if you want.

Yet since you and I really are like-minded, you totally rail against any such solutions since they violate Google’s terms of service. And you also, as I do, understand that the reason Google doesn’t like those suckers is that they skew the data and add to the server load in an artificial manner.

And besides – since you’re working for a client who has such deep pockets that they can afford to pay you to do all that work manually, you’re like me and you’ve hired an entry level worker who wanted to break into the SEO field, and you thus have no problem paying them to do that work. Because you are still charging your client $150 an hour for that work anyhow right?

And while that worker is earning your company gobs of money, you’re online reading my articles anyhow, and that means you’re being paid to be here right now in fact! So stop your griping about manually checking the SERPs already, and go optimize those sites!

That’s A Wrap!

Okay – so some of you, if you got this far, are scratching your heads, thinking – this was a decent enough article, yet it really wasn’t about link building as much as it was about a multi-site marketing strategy…

Well to that, let me just say this – some people in our industry believe that all you have to do is focus on one aspect of the broader SEO method toolbox. Just get enough third party sites to link back, and you can skip the whole on-site thing. Or just write enough 40,000 word long highly optimized pages. Or just hire a bunch of article monkeys so you have a plethora of fresh content…

Well that’s all good and fine for some. Personally, I want to be the best damned SEO Ninja on earth some day. Or at the very least, I want to be really wealthy one day, having built my little SEO empire. Or, barring that, heaven forbid, I want to know that for those clients who can afford to pay to get the best results, I pulled out all the stops. And I got them the best results over the long-haul. And did so regardless of how the search engines changed their algorithms along the way.

And when those did change, I didn’t have to go to my clients and explain why they fell off the first page organically.

So you see, to me, if you’re going to do something as crazy as build several web sites for one client, you might as well go all the way, given the opportunity. And if you do, it won’t be so crazy after all…

Find the Holes in the Swiss cheese

So what did I miss? I know I didn’t cover everything. And if you found any flaws in my recommendations, please – let me know – leave a comment – share it with everyone, so we can all become better too…

And don’t forget – if you Sphinn this article, I’ll be your BFF!

Alan Bleiweiss has been an Internet professional since 1995. Just a few of his earliest clients included PCH.com, WeightWatchers.com and Starkist.com. Specializing in SEO since 2001, Alan manages a team of Internet Marketing specialists who currently handle solutions for some 40 clients with PPC budgets ranging upward of $300,000 a year and SEO budgets in the six figures. Follow him on Twitter @AlanBleiweiss or read his blog at Search Marketing Answers.