In 2015, Google revealed to the world that it uses machine learning technology in its search engine.

Safecont.com was born that same year. It is the world’s first SEO-focused SaaS to deploy machine learning to detect issues with website content and architecture, among other SEO issues.

Safecont is a tool for analyzing web content and architecture.

It uses machine learning to identify a website’s most problematic areas so that the site can avoid search engine penalties and ranking problems.

By training artificial intelligence algorithms, we can detect low-quality content that may result in algorithmic downgrades, manual actions, or other complications.

Safecont is a scientific and technological tool that uses the power of mathematics to deliver quality results.

Leading internet marketing expert Jim Sterne mentions it in his book “Artificial Intelligence for Marketing: Practical Applications,” published by the renowned academic and educational publisher Wiley.

How Safecont Works

Safecont crawls the client’s website using a proprietary web crawler.

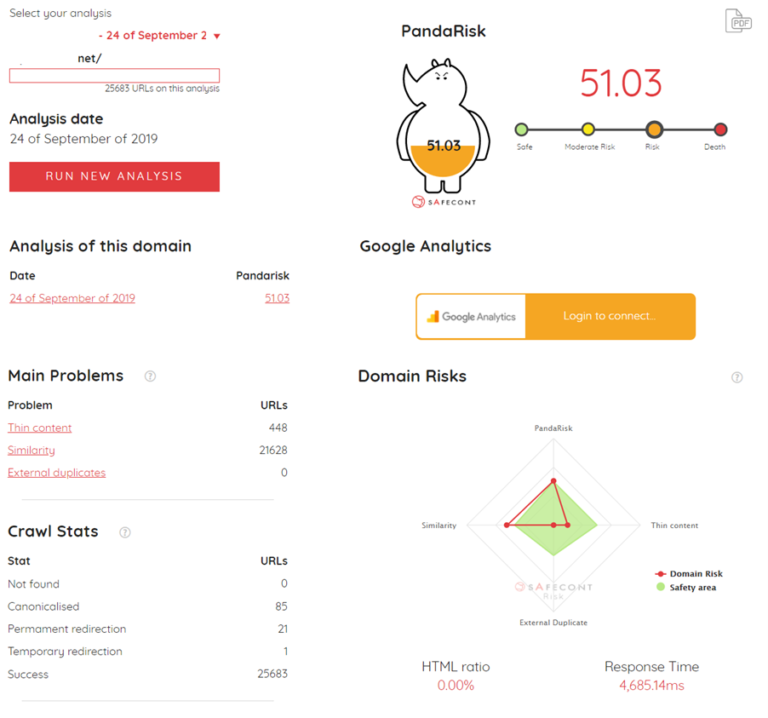

It extracts data from the domain’s URLs and analyzes it using machine learning models specifically designed to detect problematic content and penalty risks.

These models are trained on data sets of URLs that exhibited specific damaging patterns and suffered a significant loss of traffic as a result.

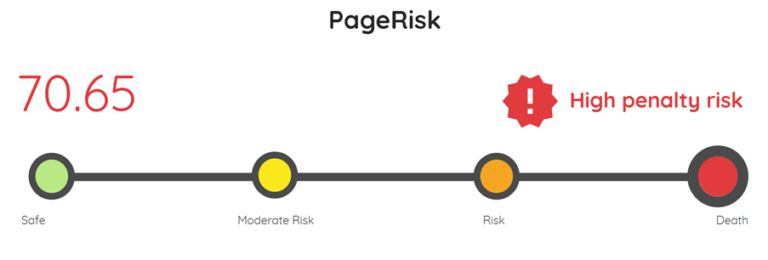

After comparing client URLs against URLs with problematic patterns, the machine learning algorithms highlight the patterns they share and assign each URL a risk score ranging from 0 to 100.

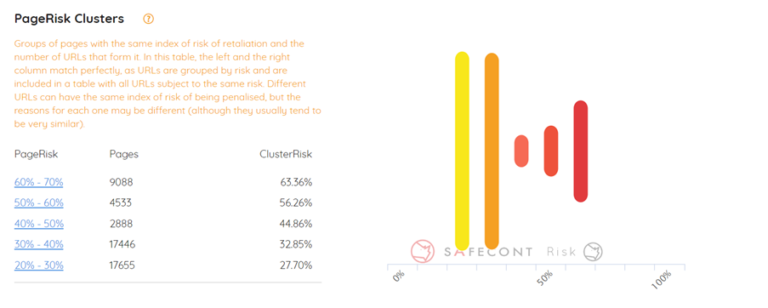

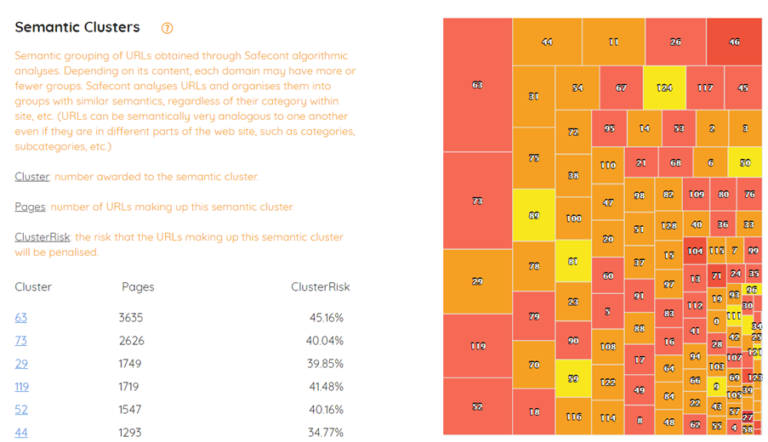

Additionally, Safecont uses a range of clustering methods to group a domain’s URLs based on some of these patterns.

This makes it easy to establish whether traffic issues caused by problematic content, architecture, or internal links are affecting a given URL, a subset of URLs, or the domain as a whole.

Put simply, if a site experiences a decline in traffic caused by a specific problem following a search engine algorithm update, and your site has a similar problem, then there is a high probability that you will also experience a drop in traffic. By aggregating all the information contained in thousands of URLs and their organic traffic data, we have been able to build a reliable search engine risk assessment model.

It’s a match! SEO and machine learning were destined to meet. This technology makes it possible to generate error detection patterns that could not be found without it.

In technical terms, the process involves:

- Crawling: Our crawler fetches and parses information from each URL.

- Signal extraction: Relevant signals are extracted for each URL using several machine learning tools and distributed computing frameworks such as pandas, SciPy, and Spark ML.

- Clustering: Two different clustering algorithms divide the URLs into groups based on their risk level and network structure.

- PageRisk and Semantic Proximity: Safecont uses a meta-classifier that combines the results of four classifiers to determine whether a URL has problems.
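To make the last step more concrete, here is a minimal sketch of how several classifier outputs can be combined into a single score between 0 and 100. It is not Safecont's actual model: the four base classifiers, the `UrlSignals` fields, the thresholds, and the equal weights are all hypothetical, and the combination shown is plain weighted soft voting rather than a trained meta-classifier.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class UrlSignals:
    """Hypothetical per-URL signals; the real feature set is not public."""
    word_count: int
    internal_similarity: float  # 0..1, overlap with other pages on the site
    external_similarity: float  # 0..1, overlap with pages on other domains
    depth: int                  # clicks needed to reach the page from the home page


# Four illustrative base classifiers, each returning a risk estimate in [0, 1].
def thin_clf(s: UrlSignals) -> float:
    return 1.0 if s.word_count < 150 else 0.0  # assumed threshold


def internal_dup_clf(s: UrlSignals) -> float:
    return s.internal_similarity


def external_dup_clf(s: UrlSignals) -> float:
    return s.external_similarity


def depth_clf(s: UrlSignals) -> float:
    return min(s.depth / 10, 1.0)  # assumed cap at depth 10


BASE_CLASSIFIERS: Sequence[Callable[[UrlSignals], float]] = (
    thin_clf, internal_dup_clf, external_dup_clf, depth_clf,
)


def page_risk(s: UrlSignals,
              weights: Sequence[float] = (0.25, 0.25, 0.25, 0.25)) -> float:
    """Weighted average of the base outputs, scaled to a 0-100 score."""
    combined = sum(w * clf(s) for w, clf in zip(weights, BASE_CLASSIFIERS))
    return round(100 * combined / sum(weights), 1)


if __name__ == "__main__":
    print(page_risk(UrlSignals(100, 0.5, 0.0, 5)))  # 50.0
```

In a production setting the weights would typically be learned from labeled examples instead of being fixed by hand.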

What Other Features Does It Include?

1. Metrics for the Detection of Problematic Content Affecting SEO

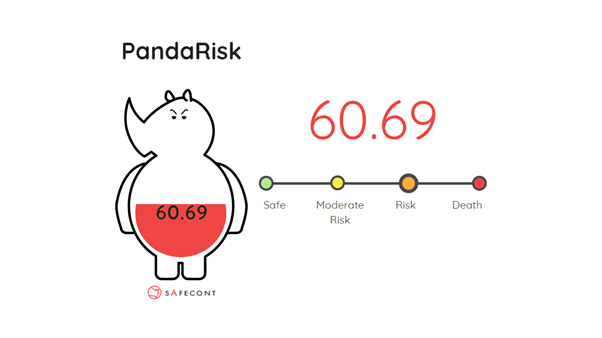

- PandaRisk.

- ClusterRisk.

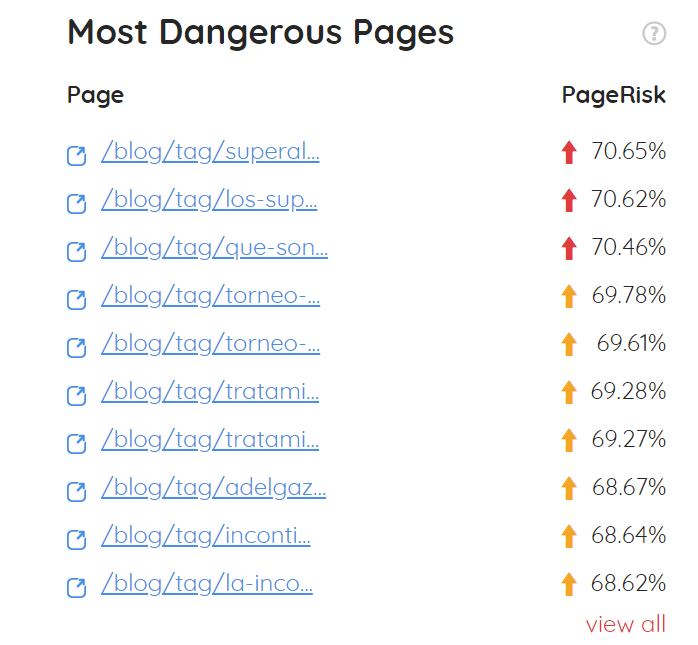

- PageRisk.

- ThinRatio.

- Internal text similarity.

- External text plagiarism.

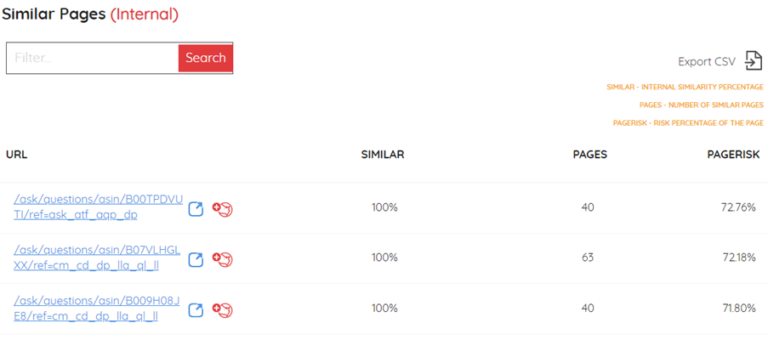

2. Analysis of Internal Duplicate Content

With five years of development under our belt, we are able to analyze and compare hundreds of thousands of URLs faster and on a larger scale than any of our competitors.

Although not always done intentionally, many websites feature duplicate or near-duplicate content disseminated across different URLs.

This arises because of the site’s inherent structure and architecture, uncontrolled lists, tags, space or mode division multiplexing, defective URLs that load the same content from different pathways, and so on.

Forcing a search engine to crawl the same or similar content repeatedly impairs a website’s perceived authority and negatively affects its indexing and ranking.
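As an illustration of how near-duplicate pages can be found, the sketch below compares pages by the overlap of their word shingles (Jaccard similarity). This is a common textbook technique, not necessarily the one Safecont uses. The shingle size, the 0.8 threshold, and the sample URLs are assumptions chosen for the example.

```python
import re
from itertools import combinations
from typing import Dict, List, Set, Tuple


def shingles(text: str, k: int = 3) -> Set[Tuple[str, ...]]:
    """Return the set of k-word shingles of a text (lowercased)."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: Set, b: Set) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: Dict[str, str],
                    threshold: float = 0.8) -> List[Tuple[str, str, float]]:
    """Return URL pairs whose shingle sets overlap at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    result = []
    for u, v in combinations(sorted(sets), 2):
        score = jaccard(sets[u], sets[v])
        if score >= threshold:
            result.append((u, v, round(score, 2)))
    return result


if __name__ == "__main__":
    pages = {
        "/shoes": "Red running shoes for men and women",
        "/shoes?sort=asc": "Red running shoes for men and women",
        "/contact": "Call us or send an email to our team",
    }
    print(near_duplicates(pages))  # [('/shoes', '/shoes?sort=asc', 1.0)]
```

Comparing every pair of pages grows quadratically with the number of URLs, so large sites usually need an approximate method such as MinHash with locality-sensitive hashing.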

3. Analysis of External Duplicate Content

By comparing your content with other domains across the web, we detect content copied by other websites and assign a risk score quantifying the danger that this plagiarized content poses to your ranking.

Information is collected for each webpage that features identical content.

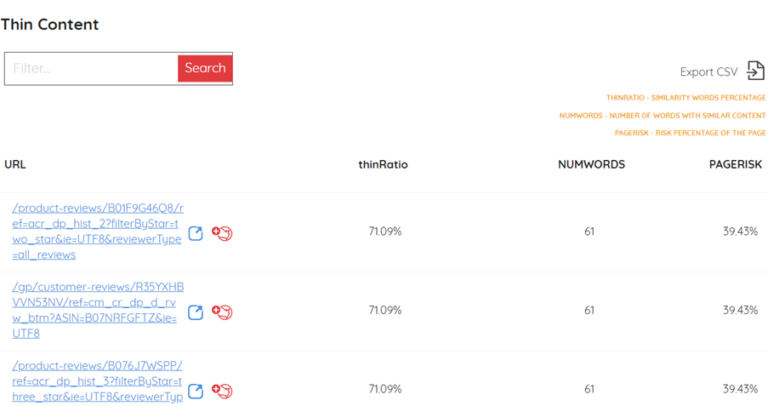

4. Thin Content Detection (Very Little or Low-Quality Content)
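The simplest form of thin content detection is a word-count check. The sketch below flags pages under a minimum length and reports the share of the site they represent. It is only one plausible reading of a metric like ThinRatio: the function names, the 200-word threshold, and the definition of the ratio are assumptions, and it does not attempt to judge content quality.

```python
import re
from typing import Dict, List


def word_count(text: str) -> int:
    """Count word tokens in a page's visible text."""
    return len(re.findall(r"\w+", text))


def thin_pages(pages: Dict[str, str], min_words: int = 200) -> List[str]:
    """Return the URLs whose text has fewer than min_words words."""
    return sorted(url for url, text in pages.items()
                  if word_count(text) < min_words)


def thin_ratio(pages: Dict[str, str], min_words: int = 200) -> float:
    """Share of pages flagged as thin (0.0 for an empty site)."""
    if not pages:
        return 0.0
    return len(thin_pages(pages, min_words)) / len(pages)
```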

5. Semantic Analysis (Semantic Proximity, Semantic Clustering)

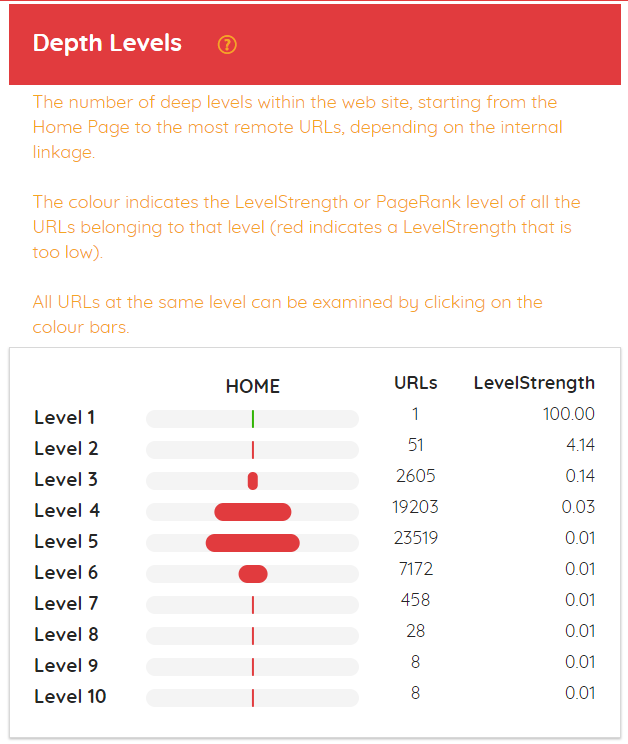

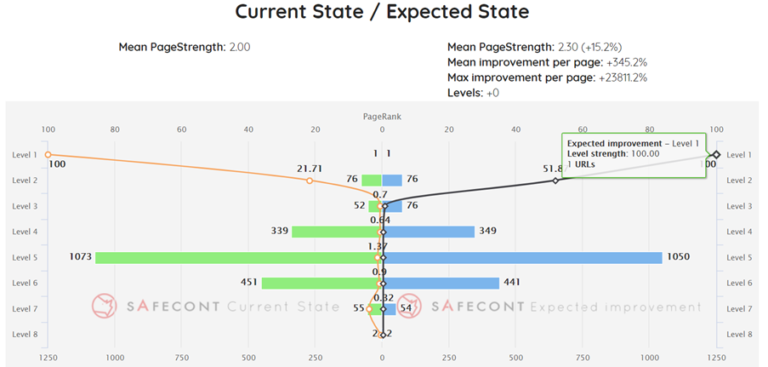

6. Web Architecture Analysis

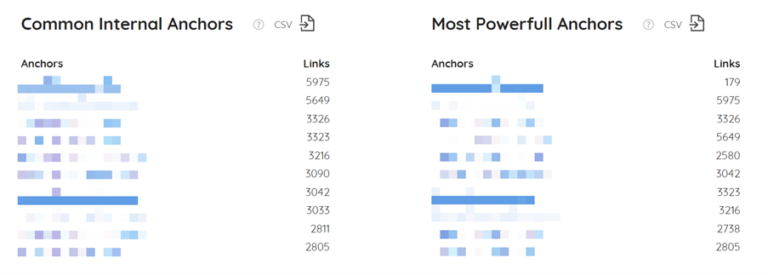

PageRank distribution and accumulation based on depth level, most common anchor texts, most powerful anchor texts, etc.
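The sketch below shows how PageRank can be computed over a site's internal links and then summed by depth level. It uses the standard power-iteration formulation with a damping factor of 0.85 and a breadth-first search from the home page. The tiny link graph, the function names, and the parameter values are assumptions for the example, and Safecont's own computation may differ.

```python
from collections import defaultdict, deque
from typing import Dict, List


def pagerank(links: Dict[str, List[str]], damping: float = 0.85,
             iterations: int = 50) -> Dict[str, float]:
    """Power-iteration PageRank over an internal link graph."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        new = {p: (1.0 - damping) / n for p in pages}
        for p in pages:
            targets = links.get(p, [])
            if targets:
                share = damping * rank[p] / len(targets)
                for t in targets:
                    new[t] += share
            else:
                # Page with no outgoing links: spread its rank over all pages.
                for q in pages:
                    new[q] += damping * rank[p] / n
        rank = new
    return rank


def depths(links: Dict[str, List[str]], home: str) -> Dict[str, int]:
    """Breadth-first search: minimum number of clicks from the home page."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def rank_by_depth(links: Dict[str, List[str]], home: str) -> Dict[int, float]:
    """Sum PageRank per depth level for pages reachable from the home page."""
    rank = pagerank(links)
    totals: Dict[int, float] = defaultdict(float)
    for page, d in depths(links, home).items():
        totals[d] += rank[page]
    return dict(totals)


if __name__ == "__main__":
    site = {"/": ["/a", "/b"], "/a": ["/a1"], "/b": ["/"], "/a1": []}
    for level, total in sorted(rank_by_depth(site, "/").items()):
        print(level, round(total, 3))
```

A distribution in which most of the rank sits several clicks away from the home page, or in which important pages receive very little, is the kind of pattern an architecture report would surface.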

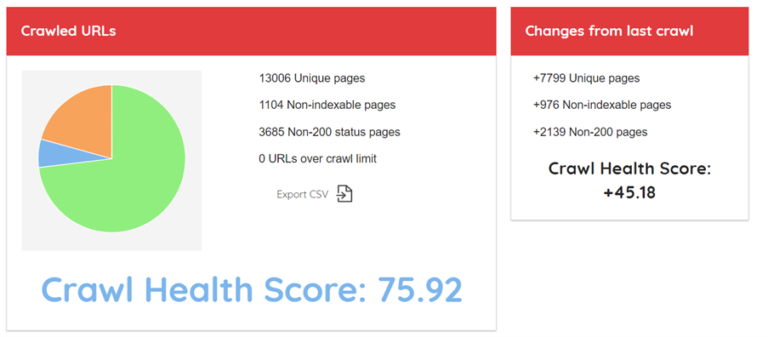

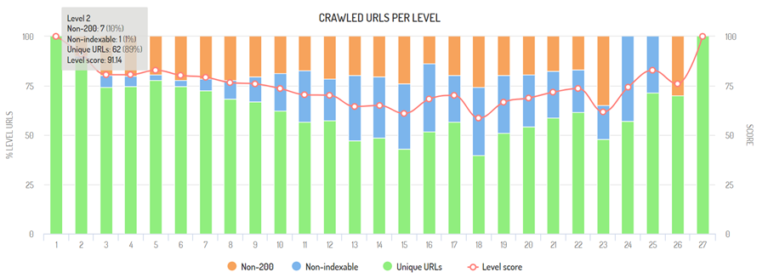

7. Crawl Analysis

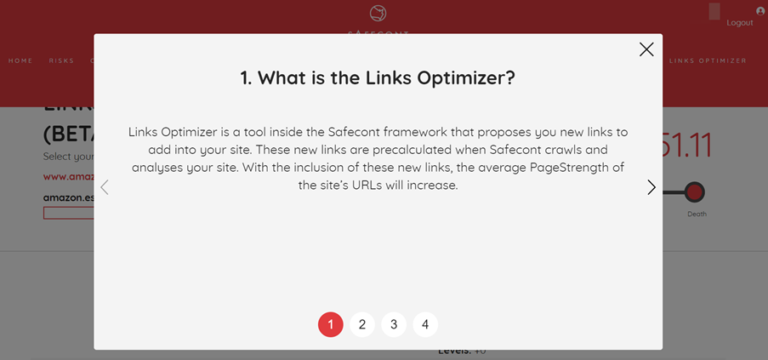

8. Internal Link Auto-Optimizer (Beta Version of an Interlinking Tool That Improves a Website’s Internal Page Authority Distribution)

What’s Our Aim?

The company began its journey in 2015 and has been growing continuously ever since.

After completing a full year of development, we started marketing our product, focusing primarily on the Spanish and European markets.

Over time, our client base has grown from small users to companies posting several hundred million dollars in revenue.

In 2019, Safecont was a finalist at the European Search Awards in the category for “Best Software Innovation.”

Safecont is a financially sound software company seeking to expand internationally or reach other kinds of profitable agreements.

This is a complex undertaking, which is why we are open to international partnerships likely to either help us achieve our aim or prove interesting in a different way, like merging with another SaaS.

Besides interesting partnership arrangements, we are open to all kinds of commercial agreements, proposals, or collaborations with the potential to facilitate Safecont’s expansion.

Our technology can be divided, bundled, and adapted to other software and technologies.

Safecont is a fully developed scalable SaaS with endless possibilities on the international market.

You can trial it by following this link or get in touch with us directly through our website.

Discover the power of Safecont

The opinions expressed in this article are the sponsor's own.