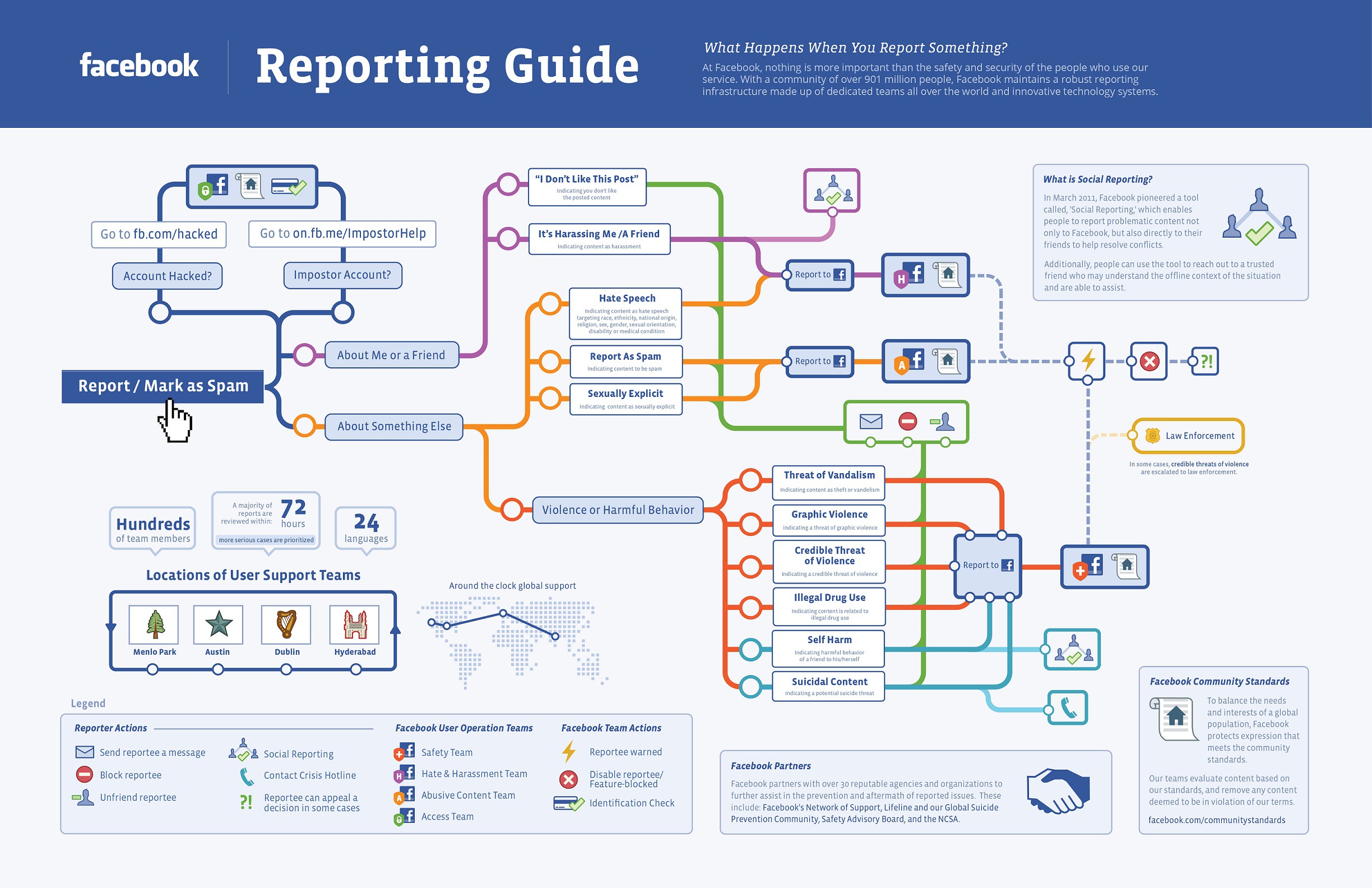

When one of Facebook’s more than 900 million users clicks the “Report” button, the world’s largest social network has multiple security teams ready to take immediate action. On Tuesday, Facebook posted the following information on its blog concerning the “Report” button:

“There are dedicated teams throughout Facebook working 24 hours a day, seven days a week to handle the reports made to Facebook. Hundreds of Facebook employees are in offices throughout the world to ensure that a team of Facebookers are handling reports at all times.”

The post emphasized that the social network currently handles reports in 24 languages and has offices located around the world to keep the site and its users safe and secure 24/7. Based on the type of report that Facebook receives, User Operations forwards it to the Safety team, the Hate and Harassment team, the Access team, or the Abusive Content team. For example, graphically violent material is forwarded to the Safety team, and a user who has lost access to an account or had an account hacked is referred to the Access team.

Once the appropriate Facebook team has properly reviewed the report and completed its investigation, one of three possible outcomes occurs:

- The content is deemed acceptable and within Facebook policies, so no action is necessary.

- The content is found to violate Facebook policies and is removed from the site.

- The content is extremely offensive or illegal, and the investigation is escalated to include law enforcement.

When a user is found to have violated the Facebook Community Standards, User Operations may disable certain privileges, prevent further sharing, or revoke or limit future access to Facebook.

In addition to its internal teams, the company works closely with outside groups and experts, including law enforcement, suicide prevention agencies, the Safety Advisory Board, and the National Cyber Security Alliance.

By releasing this information, Facebook is attempting to reassure its more than 900 million users that it is actively protecting them, while simultaneously discouraging the “bad guys” from breaking the rules in the future.

Sources include: Facebook Safety

Image credit: Facebook Safety